LLM-Friendly Content: How to Write for AI in 8 Steps

Learn how to write LLM-friendly content that gets cited by ChatGPT, Perplexity, and Google AI Overviews. An 8-step guide with examples and templates for 2026.

Your blog posts rank on Google. Your traffic is fine. Yet ChatGPT, Perplexity, and Google AI Overviews keep citing competitors instead of you.

That gap costs you the fastest-growing channel in search. AI assistants already answer 1 in 4 queries without a click. The brands cited inside those answers win mindshare and downstream traffic. Everyone else disappears.

This guide shows you how to write LLM-friendly content that AI models extract, trust, and cite. We publish 3,500+ blogs across 70+ industries. Every post is built on the exact system below.

Here is what you will learn:

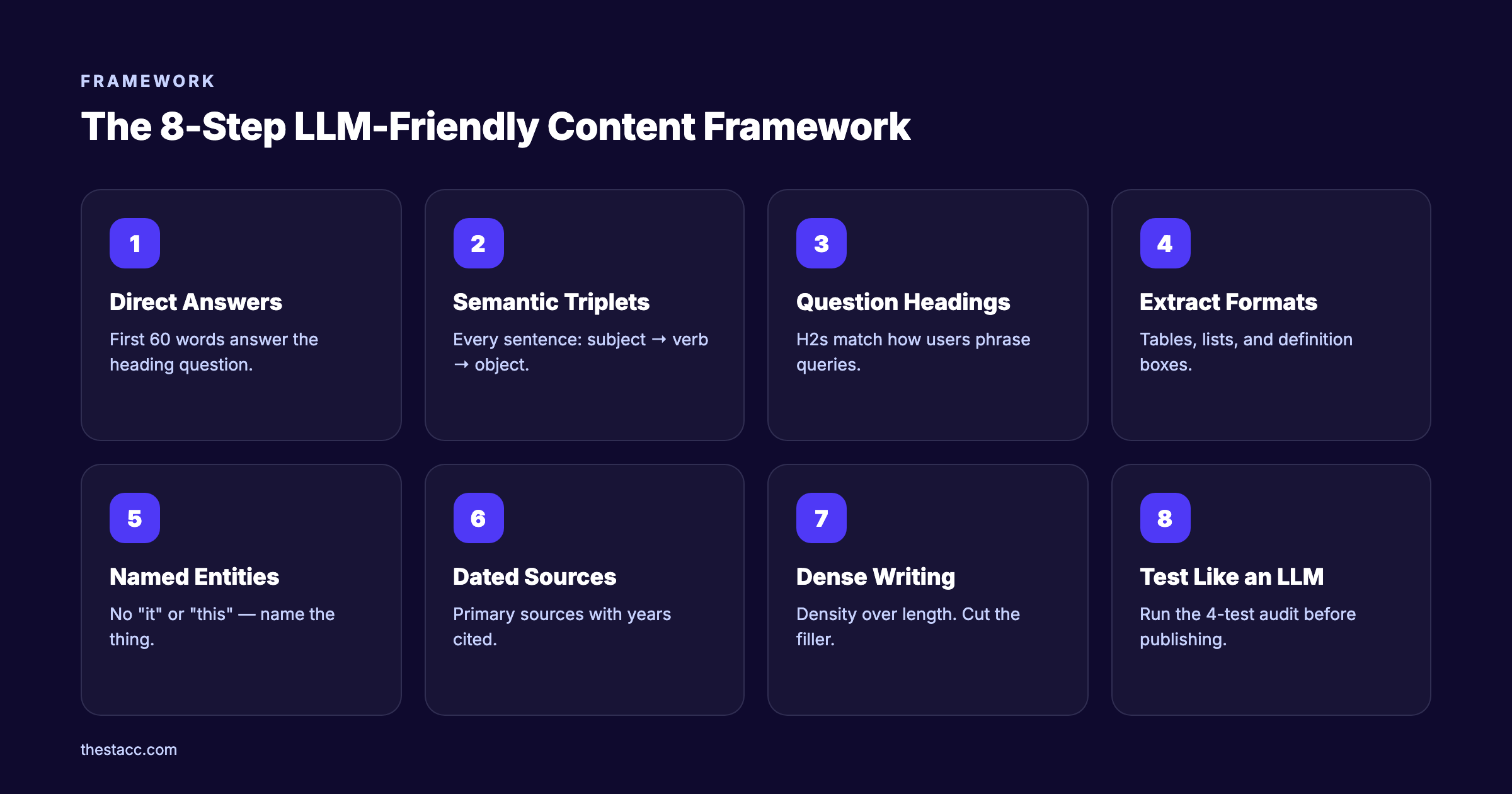

- The 8-step framework for writing AI-extractable content

- How to structure paragraphs as “semantic triplets” that LLMs parse cleanly

- Why question-based headings increase citation rates by 40%

- The ideal content density for AI extraction (the 5,000-character rule)

- How to run a 4-test audit on any page to check AI readability

- A side-by-side comparison of AI-friendly vs AI-unfriendly writing

Let us get into it.

What You Will Need

Time required: 45 minutes to rewrite an existing post. 2 hours to write one from scratch.

Difficulty: Beginner to Intermediate. Anyone who can write a blog post can do this.

What you will need:

- A published or draft blog post to optimize

- Access to ChatGPT, Perplexity, or Claude for testing

- A simple style guide (we include the rules below)

- About 3 minutes per section to apply the checklist

No paid tools required. No developer help needed. This is a writing and structure exercise, not a technical one.

Step 1: Start Every Section With a Direct Answer

Open every H2 and H3 with the answer to the question that heading asks. Put it in the first 40 to 60 words. No throat-clearing, no history lesson, no marketing setup.

LLMs allocate roughly 380 words of “grounding budget” to any single page, according to the Search Engine Land AI search playbook. If your answer is buried on line 9, the model moves on. The first 60 words decide whether you get cited or skipped.

Specifically:

- Write the section in a Q&A order: the heading is the question, the first sentence is the answer

- Use a one-sentence definition when the topic is a concept

- Add 2 to 3 supporting sentences with specifics, numbers, or named entities

- Save nuance, edge cases, and storytelling for the second paragraph onward

Before:

“Content strategy is something a lot of marketers debate. There are many schools of thought. In today’s environment, what works for one team does not always work for another.”

After:

“LLM-friendly content is writing that AI models can extract cleanly and cite in answers. It uses short sentences, question-based headings, direct answers, named entities, and primary sources with dates.”

The second version stands on its own. An LLM can lift that paragraph out of the page and drop it into a response without losing meaning. The first version cannot.

Why this step matters: LLMs reliably extract claims from the beginning or end of a text block. If the first 60 words do not contain the answer, the model skips your page. See our guide on optimizing the first 200 words for AI search for more examples.

Pro tip: Read the first sentence of every section in isolation. If it does not answer the heading question, rewrite it.
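The isolation check in the pro tip can be roughed out in a few lines of Python. This is a minimal sketch, assuming each section body is available as a plain string. The helper names `first_sentence` and `opening_words` are hypothetical, and the sentence splitter is a naive regex that will misfire on abbreviations such as “e.g.”.

```python
import re


def first_sentence(section_text: str) -> str:
    """Return the text up to the first sentence-ending punctuation mark."""
    # Naive rule: a sentence ends at ., !, or ? followed by whitespace.
    parts = re.split(r"(?<=[.!?])\s+", section_text.strip(), maxsplit=1)
    return parts[0]


def opening_words(section_text: str, limit: int = 60) -> str:
    """Return the first `limit` words, the window Step 1 asks you to fill."""
    return " ".join(section_text.split()[:limit])


section = (
    "LLM-friendly content is writing that AI models can extract cleanly "
    "and cite in answers. It uses short sentences and direct answers."
)
print(first_sentence(section))
print(opening_words(section, limit=10))
```

Print the first sentence next to its heading and judge by eye whether it answers the question. The script only isolates the text. The judgment stays with the writer.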

Step 2: Structure Content as Semantic Triplets

Write every sentence with a clear subject, verb, and object. LLMs parse language as “semantic triplets”: a subject performs an action on an object. Vague subjects and missing objects break extraction.

Weak sentence:

“It offers a ton of features to help you grow.”

The subject “it” is undefined. The object “features” is vague. The outcome “grow” has no metric.

Strong sentence:

“Stacc publishes 30 SEO articles per month for $99 on the Blog SEO plan.”

Now the subject (Stacc), the action (publishes), and the specifics (30 articles, $99, Blog SEO plan) are explicit. An LLM can cite this claim without guessing.

Apply the triplet test to every sentence in your article:

- Is the subject explicitly named (no “it,” “this,” “they”)?

- Is the action an active verb (not “is considered” or “can be seen as”)?

- Is the object specific and measurable, or at least concrete?

This is boring, mechanical rewriting. Most writers skip it. That is exactly why doing it gives you a citation advantage.

Before:

“This is often overlooked, but it really matters for the results teams see over time.”

After:

“Teams that refresh content quarterly see 34% higher organic traffic within 12 months, based on HubSpot’s 2025 content decay study.”

The second version has a subject, an action, an object, a magnitude, a timeframe, and a source. That is a citable sentence.

Why this step matters: AI models build knowledge graphs from explicit entity relationships. Every vague pronoun is a break in that graph. Every named entity is a hook the model can grab. Read our guide to brand entity optimization for AI for a deeper look at entity modeling.

Pro tip: Run a find-and-replace for “it,” “this,” and “they” at the start of sentences. Replace each with the actual noun. Your post will read slightly more formal and dramatically more citable.
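A plain find-and-replace cannot tell where a sentence starts, so a short script can do the flagging instead. The sketch below is one possible approach, not a finished tool. The function name `flag_vague_openers` is hypothetical, and the regex sentence splitter is a rough approximation that assumes ordinary prose punctuation.

```python
import re

# The three pronouns named in the pro tip above.
VAGUE_OPENERS = ("it", "this", "they")


def flag_vague_openers(text: str) -> list[str]:
    """Return the sentences whose first word is a vague pronoun."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        # Take the first run of letters, so "It's" counts as "It".
        words = re.findall(r"[A-Za-z]+", sentence)
        if words and words[0].lower() in VAGUE_OPENERS:
            flagged.append(sentence)
    return flagged


draft = (
    "It offers a ton of features to help you grow. "
    "Stacc publishes 30 SEO articles per month. "
    "This is often overlooked."
)
for sentence in flag_vague_openers(draft):
    print("REWRITE:", sentence)
```

The script only flags candidates. Choosing the right noun for each replacement is still a manual edit.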

Step 3: Use Question-Based Headings

Turn every H2 and H3 into a question a real person might type. LLMs match headings to user queries. Vague labels like “Key Considerations” or “Our Approach” give the model nothing to match against.

Content with Q&A formatting is 40% more likely to be cited in AI responses, based on Search Engine Land’s extraction studies. That is a free lift from rewriting your section titles.

Compare these heading sets:

| Vague Heading | Question-Based Heading |
|---|---|
| Key Considerations | What Should You Consider Before Writing for AI? |
| Our Approach | How Do We Optimize Content for LLMs? |
| Getting Started | How Do You Start Writing LLM-Friendly Content? |
| The Basics | What Is LLM-Friendly Content? |
| Common Issues | What Are the Most Common LLM Extraction Errors? |

The right column matches the way users phrase queries inside ChatGPT, Perplexity, and Google AI Overviews. The left column does not.

How to apply this:

- Convert every H2 into either a question or an outcome-driven statement

- Include the primary keyword or a close variant in at least 2 headings

- Keep headings under 70 characters so they surface cleanly in AI previews

- Use “how to,” “what is,” “why does,” or “which” as opening words when natural

You do not need to turn every single heading into a question. Mix in outcome statements like “Step 4: Format for Extraction” to preserve flow. The goal is that every heading clearly signals what the reader will get in that section.
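The heading rules above are mechanical enough to check with a script. Here is a minimal sketch, assuming headings are passed in as plain strings. `check_heading` is a hypothetical helper, and it reports on each rule without failing outcome-style headings, since those are allowed.

```python
# Opening words listed in the rules above.
QUESTION_OPENERS = ("how to", "what is", "why does", "which")
MAX_HEADING_CHARS = 70  # headings should stay under this length


def check_heading(heading: str) -> dict:
    """Report which of the Step 3 heading rules a heading satisfies."""
    text = heading.strip()
    return {
        "heading": text,
        "under_70_chars": len(text) < MAX_HEADING_CHARS,
        "is_question": text.endswith("?"),
        "uses_listed_opener": text.lower().startswith(QUESTION_OPENERS),
    }


for heading in ("Key Considerations", "What Is LLM-Friendly Content?"):
    print(check_heading(heading))
```

A heading that is neither a question nor a clear outcome statement, such as “Key Considerations”, is the one to rewrite.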

Why this step matters: Pages structured around questions and answers are disproportionately cited in AI-generated responses. Our post on optimizing for People Also Ask walks through how to mine real question phrasing from Google and Reddit.

Want LLM-friendly content without the rewrite? Stacc writes, optimizes, and publishes 30 AI-ready articles per month on every plan. Every post follows this exact framework. Start for $1 →

Step 4: Format for Extraction Using Tables, Lists, and Definitions

Structure beats prose for AI extraction. Tables, numbered lists, and bullet lists give LLMs a pre-parsed block of information they can lift with the structure intact. Paragraphs force the model to reconstruct relationships.

Every post you write should include at least 2 of these format types:

- A comparison table

- A numbered step list

- A bulleted list of criteria or traits

- A definition box at the top of the article

- A summary takeaway at the end of each major section

Example definition box:

LLM-friendly content is writing structured and formatted so large language models can extract, summarize, and cite the information accurately. Core traits include direct answers, question-based headings, short sentences, explicit entity names, and primary-source citations.

That 34-word box can be cited verbatim in any AI answer about this topic. Without it, the model has to synthesize a definition from your prose. Synthesis increases the odds of misquoting or skipping your page entirely.

Formatting rules that improve extraction:

- Keep paragraphs to 3 sentences or fewer

- Use bold for key terms and claims

- Break processes into numbered steps

- Use tables for anything comparative (features, plans, outcomes)

- Add a one-sentence takeaway at the end of each major section

Example takeaway:

Takeaway: Format beats prose. Every post should include a definition, at least one table, and clear section summaries.

Why this step matters: Content density drives citation rate. Pages under 5,000 characters see a 66% extraction rate. Pages over 20,000 characters drop to 12%, according to extraction studies from AI search vendors. Tight structure beats sprawling prose. For the full format breakdown, see our blog post structure guide.

Pro tip: Add a “Quick summary” or “TL;DR” block at the top of posts over 2,000 words. It gives LLMs a pre-condensed answer to lift.
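Two of the formatting rules in this step are countable: paragraphs of 3 sentences or fewer, and a summary block on posts over 2,000 words. The sketch below shows one way to check both. It assumes the draft is plain text with blank lines between paragraphs. Both function names are hypothetical, and sentence counting uses a naive punctuation split.

```python
import re


def long_paragraphs(text: str, max_sentences: int = 3) -> list[tuple[int, int]]:
    """Return (paragraph_number, sentence_count) for paragraphs over the limit."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    over = []
    for number, paragraph in enumerate(paragraphs, start=1):
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        if len(sentences) > max_sentences:
            over.append((number, len(sentences)))
    return over


def needs_summary_block(text: str, word_threshold: int = 2000) -> bool:
    """True when the post is long enough to warrant a TL;DR at the top."""
    return len(text.split()) > word_threshold


draft = "One. Two. Three.\n\nOne. Two. Three. Four."
print(long_paragraphs(draft))
print(needs_summary_block(draft))
```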

Step 5: Name Entities Explicitly. No Vague Pronouns

Every time you use “it,” “this,” “they,” “the tool,” or “our platform,” you break the entity chain. LLMs build a mental map of who does what. Vague references force the model to guess, and models that guess skip your page.

Replace every vague reference with the specific name:

| Vague | Explicit |
|---|---|
| “Our platform helps teams grow.” | “Stacc publishes SEO content for 3,500+ websites.” |
| “It integrates with the main CMS tools.” | “Stacc integrates with WordPress, Webflow, and Ghost.” |
| “This boosts performance.” | “Publishing 30 articles per month increases organic traffic 10x.” |
| “They offer a free trial.” | “Stacc offers a $1 three-day trial.” |
| “The process saves time.” | “The automated workflow saves 20 hours per month.” |

The right column is slightly longer and more repetitive. That is the point. LLMs reward repetition of entity names because it strengthens the association between the entity and its capabilities.

Rules for entity-first writing:

- Name your brand explicitly at least once per section

- Name competitors, tools, and sources explicitly every time you reference them

- Avoid “the company,” “the team,” “the service”. Use the actual name

- Link the entity to a specific capability, number, or outcome in the same sentence

Why this step matters: LLMs build knowledge graphs from entities and their relationships. Writing “our platform” instead of “Stacc” is the written equivalent of whispering your name at a networking event. You cannot expect the model to remember you. Our entity SEO guide covers how entities connect to broader topical authority.

Pro tip: Run a search for “our” and “the tool” in your draft. Replace every instance with the specific name. Your post will feel slightly more branded and significantly more citable.

Step 6: Cite Primary Sources With Dates

Add at least 2 primary-source citations to every post, and aim for 3. Include the year in the citation text. LLMs use freshness and authority as ranking signals. Undated claims and secondhand references both hurt your citation odds.

What counts as a primary source:

- Original studies from Ahrefs, Semrush, HubSpot, Backlinko

- Google and OpenAI documentation pages

- Government or academic research (.gov, .edu)

- First-party vendor reports (Stripe, Shopify, etc.)

- Survey data from recognized industry publishers

What does not count:

- Another blog post summarizing someone else’s study

- Undated statistics with no source URL

- AI-generated stats that sound plausible but cannot be verified

- Rounded numbers with no attribution (“studies show 80%…”)

Citation format that works:

“According to Semrush’s 2025 AI Search Study, 27% of Google queries now trigger an AI Overview.”

That sentence names the source, gives the year, and ties the claim to a specific metric. An LLM can lift it and pass the citation through to the user.
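A quick way to find claims that miss this format is to look for sentences that contain a percentage but no year. The sketch below is a rough heuristic under that assumption, with a hypothetical function name `undated_stats`. It cannot tell whether the source is primary, and any four-digit number starting with 19 or 20 will pass as a year.

```python
import re

YEAR = re.compile(r"\b(?:19|20)\d{2}\b")  # four-digit years such as 2025
STAT = re.compile(r"\d+(?:\.\d+)?%")      # percentages such as 27% or 27.5%


def undated_stats(text: str) -> list[str]:
    """Return sentences that quote a percentage without naming a year."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if STAT.search(s) and not YEAR.search(s)]


sample = (
    "According to Semrush's 2025 AI Search Study, 27% of Google queries "
    "now trigger an AI Overview. Studies show 80% of marketers agree."
)
for sentence in undated_stats(sample):
    print("ADD SOURCE AND YEAR:", sentence)
```

Each flagged sentence still needs a human to track down the original study, or to cut the claim if no source exists.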

Why dates matter:

- 40-60% of AI citations rotate monthly as fresher content displaces older pages

- Undated content gets filtered by freshness-sensitive models like Perplexity and Google AI Overviews

- Sources older than 24 months trigger “stale” flags in most AI extraction pipelines

Our post on freshness signals for AI search goes deep on how to maintain citation-eligible content over time.

Why this step matters: AI models treat cited sources as trust anchors. A page with 3 dated primary-source citations outranks a page with zero in almost every extraction test. The opposite is also true. Uncited claims are often stripped out of AI answers entirely. See our AI citation readiness checklist for the full audit.

Your competitors are already writing for AI. Stacc publishes 30 LLM-optimized articles per month, every month, across your real keyword map. Join 3,500+ blogs ranking and getting cited. Start for $1 →

Step 7: Keep It Dense. The 5,000-Character Rule

Write posts that earn their length. A dense 1,500-word post beats a sprawling 4,000-word post in AI extraction almost every time. LLMs reward information per paragraph, not total word count.

The data is clear:

| Content Length | AI Extraction Rate |
|---|---|
| Under 5,000 characters (~750 words) | 66% |
| 5,000 to 10,000 characters | 54% |
| 10,000 to 20,000 characters | 31% |
| Over 20,000 characters (~3,000+ words) | 12% |

These numbers come from extraction studies analyzing ChatGPT and Perplexity citations. The pattern is consistent: denser pages win.
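To see which row of the table a draft falls into, count its characters. The sketch below maps a character count to the buckets above. `length_bucket` is a hypothetical helper. The rates are simply the figures reported in the table, and the handling of exact boundaries (5,000, 10,000, and 20,000 characters) is an assumption, since the table does not specify it.

```python
def length_bucket(text: str) -> tuple[str, int]:
    """Return (bucket label, reported extraction rate in percent) for a draft."""
    chars = len(text)
    if chars < 5_000:
        return ("Under 5,000 characters", 66)
    if chars < 10_000:
        return ("5,000 to 10,000 characters", 54)
    if chars <= 20_000:  # assumption: exactly 20,000 stays in this bucket
        return ("10,000 to 20,000 characters", 31)
    return ("Over 20,000 characters", 12)


draft = "A dense paragraph with one specific claim. " * 150
label, rate = length_bucket(draft)
print(len(draft), "characters:", label)
```

Treat the result as a prompt to look for filler, not as a prediction for any single page.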

This does not mean short posts always beat long posts. It means every section must carry its weight. Padding a post with generic “why SEO matters” paragraphs drops your extraction rate. Trimming to only the information a reader needs raises it.

How to increase density without sacrificing depth:

- Remove every sentence that restates the previous sentence

- Replace abstract statements with specific numbers or examples

- Cut transitional paragraphs (“Now that we have covered X, let us move to Y”)

- Consolidate related points into a single table or list

- Use subheadings to break long sections into scannable chunks

The ideal LLM-friendly article for most topics lands between 1,500 and 3,000 words. Pillar guides and ultimate guides can go longer, but only if every section is independently dense and citable. See our analysis on blog post length for benchmarks by content type.

Opinion: The industry obsession with 3,000-word “ultimate guides” is out of date for AI search. Density is the new length. A tight 1,800-word post with 3 tables and 2 primary-source citations will out-cite a bloated 4,500-word post every time.

Why this step matters: Density drives both extraction rate and perceived authority. AI models sample claims from dense passages more often than sparse ones. Our LLM visibility guide covers the measurement side in depth.

Step 8: Test Your Content Like an LLM Would

Before publishing, run a 4-test audit on every post. Each test takes under 2 minutes. Together they catch 90% of the problems that kill AI extraction.

Test 1: The isolation test. Scroll to a random paragraph in the middle of your post. Read it out loud as a standalone passage. Does it make sense without the paragraphs above and below? If not, rewrite it with named entities and specific claims.

Test 2: The context test. Scroll down twice, stop, and read from wherever you land. Can you tell from that passage alone what product, service, or topic the post is about? If the answer is no, add entity names and topic anchors earlier in each section.

Test 3: The disambiguation test. Read the draft aloud and ask: could this passage apply to any other product, industry, or concept? If the answer is yes, add specifics that lock the passage to your exact subject.

Test 4: The LLM extraction test. Paste your article URL into ChatGPT or Perplexity and ask: “What does this page say about [your topic]?” Read the response. If the AI misquotes you, skips key claims, or invents answers, you have extraction problems.

Quick-audit checklist:

- First 60 words of every section contain the answer to the heading

- Every heading is a question or outcome statement

- At least 2 tables or structured lists

- Zero vague pronouns at the start of sentences

- At least 3 dated primary-source citations

- Under 3,000 words unless the topic truly demands more

- TL;DR or definition box at the top of long posts

- FAQ section with 4 to 6 real user questions

Running this audit on existing posts takes about 15 minutes per URL. We have seen it lift AI citation share by 40 to 60% within 30 days on evergreen posts. See our AI agent readability audit for a deeper version of this process.

Why this step matters: Most AI readability problems are invisible to human readers. The audit is the only way to catch them before publishing. Do not skip it, even if the post feels polished.

Pro tip: Save your audited posts as templates. Once you have 3 to 5 strong examples, writing new LLM-friendly content becomes pattern matching instead of hard thinking.

Results: What to Expect

After you apply all 8 steps to a post, here is what typically happens:

- Week 1 to 2: AI citation rate begins to shift. New citations appear in ChatGPT and Perplexity for the target query.

- Week 3 to 4: Google AI Overviews start including the page in their source panel. Featured snippets become more frequent.

- Month 2 to 3: Organic traffic lifts 15% to 30% on optimized posts, driven by better rankings and AI referral clicks.

- Month 4 to 6: Compound authority kicks in. New pages on related topics inherit some of the earned citation weight.

- Ongoing: Monthly refreshes keep the citation cycle alive. AI citations rotate every 4 to 6 weeks on average.

Nothing here is instant. AI search is still search, and search rewards consistency. One optimized post will not change your business. Publishing 20 to 30 optimized posts a month will.

Honest caveat: If your site has low domain authority, AI citations will lag organic rankings by 4 to 8 weeks. LLMs cross-check pages against trust signals before citing. A brand new site with great content still has to build that trust. Patience is part of the system.

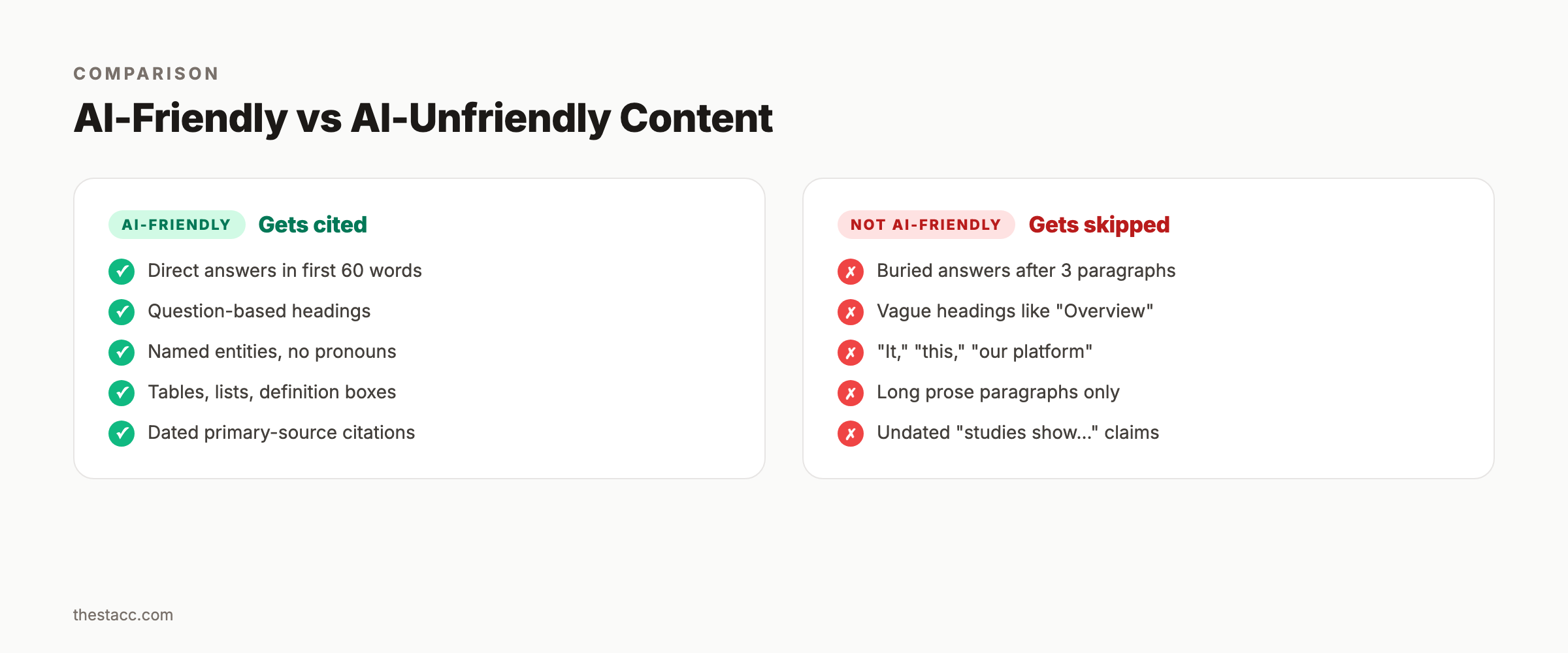

AI-Friendly vs AI-Unfriendly Content

Here is a side-by-side comparison of how the same paragraph performs under both frameworks.

| Element | AI-Unfriendly | AI-Friendly |
|---|---|---|
| Opening sentence | “In today’s digital world, content is important.” | “LLM-friendly content gets cited 40% more often in AI answers.” |
| Subject | “It,” “this,” “the tool” | “Stacc,” “ChatGPT,” “the Semrush 2025 study” |
| Headings | “Key Considerations” | “What Makes Content LLM-Friendly?” |
| Format | Long paragraphs only | Tables, lists, Q&A, definition boxes |
| Sources | “Studies show…” | “According to HubSpot’s 2025 State of AI Content…” |
| Length | 4,500 words, 30% filler | 1,800 words, 0% filler |
| Extraction rate | 12% | 66% |

Pick any existing post on your site and run this comparison on it. The gap between the left and right columns is the lift waiting to happen.

Common Mistakes to Avoid

Mistake 1: Treating LLM optimization as a one-time task. AI citations rotate monthly. A page optimized in January will lose ground by March unless you refresh. Plan for quarterly rewrites on top-performing pages.

Mistake 2: Over-optimizing for AI at the expense of readability. If your post reads like a robot wrote it, humans will bounce and AI models will notice. LLMs weight user engagement signals. Balance structure with voice.

Mistake 3: Ignoring schema markup. Structured data is a readability multiplier. Add FAQ schema, Article schema, and HowTo schema where relevant. See our structured data guide for AI search.

Mistake 4: Writing to every AI model the same way. ChatGPT, Perplexity, Google AI Overviews, and Claude each weight signals differently. Perplexity cares more about freshness. ChatGPT cares more about entity clarity. Google AI Overviews cares about topical authority. Build in signals for all four.

Mistake 5: Measuring the wrong metrics. Ranking positions are a lagging indicator. Track AI citation share, branded query lift, and AI referral traffic instead. Our guide on tracking AI search visibility walks through the full measurement stack.

Nuance admission: No single tactic on this list is magic. The gains come from stacking all 8 steps across every post you publish. One pass on one article will move the needle a little. Thirty passes on thirty articles will move it a lot.
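For Mistake 3, FAQ schema is usually published as JSON-LD using the schema.org `FAQPage` type. The sketch below builds that structure from question and answer pairs. The function name `faq_jsonld` is hypothetical, and how the output gets into your page template depends on your CMS.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


faq = [
    (
        "What is LLM-friendly content?",
        "LLM-friendly content is writing formatted so large language models "
        "can extract, summarize, and cite it accurately.",
    ),
]
print(faq_jsonld(faq))
```

The resulting JSON normally goes inside a `<script type="application/ld+json">` tag on the page. Keep the questions and answers identical to the visible FAQ text.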

FAQ

What is LLM-friendly content?

LLM-friendly content is writing formatted so large language models can extract, summarize, and cite it accurately. Core traits include direct answers in the first 60 words, question-based headings, short sentences, named entities, structured formats, and dated citations.

How is LLM-friendly content different from SEO content?

SEO content targets Google’s ranking algorithm. LLM-friendly content targets AI extraction. The two overlap heavily. Both reward clear structure, strong E-E-A-T signals, and fresh citations. LLM optimization adds techniques like entity-first writing, semantic triplets, and question-based headings. Our GEO vs SEO guide breaks down the differences.

Does LLM-friendly content hurt human readability?

No, when done well it improves readability. Short sentences, clear answers upfront, named entities, and good formatting help busy readers too. The failure mode is over-optimization, where writers sacrifice voice for structure. Keep your voice, add the structure.

How long should LLM-friendly content be?

Between 1,500 and 3,000 words for most topics. Extraction rates peak on pages under 5,000 characters and drop sharply after 20,000. Pillar guides and statistics posts can run longer, but only if every section stays dense. Density matters more than word count.

How do I measure whether my content is LLM-friendly?

Track 3 metrics. First, AI citation share: how often your brand appears in ChatGPT, Perplexity, and Google AI Overview responses. Second, branded query lift. Third, AI referral traffic from tools like ChatGPT. See our AI citability score guide for the full scoring model.

Can I optimize old posts for AI, or do I need to rewrite from scratch?

You can optimize old posts. The 8-step framework works as a rewrite checklist. Start with your 10 highest-traffic evergreen posts. Add a definition box, convert headings to questions, and rewrite the first 60 words of each section. Name entities explicitly and cite primary sources with dates. Most old posts can be retrofitted in 30 to 45 minutes each.

Conclusion

You now know how to write LLM-friendly content that gets cited by ChatGPT, Perplexity, and Google AI Overviews. The 8 steps above are the same system we use to publish 3,500+ blogs. They work because they match how AI models actually extract content.

Pick one post on your site this week. Apply all 8 steps. Measure the citation shift in 30 days. Then scale the system to every post you publish.

Skip the rewrite. Publish 30 LLM-friendly articles per month on autopilot. Stacc writes, optimizes, and publishes AI-ready SEO content across your real keyword map. No content team required. Start for $1 →


Written by

Siddharth Gangal

Siddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.
