How to Detect AI Generated Content (2026)

Learn how to detect AI generated content with 7 manual signals, tool comparisons, and accuracy data. Covers text, images, and video. Updated March 2026.

Siddharth Gangal • 2026-03-28 • Content Strategy

You just read an article that felt off. The grammar was flawless. The structure was rigid. Every paragraph said something without saying anything specific. Was it written by a human or a machine?

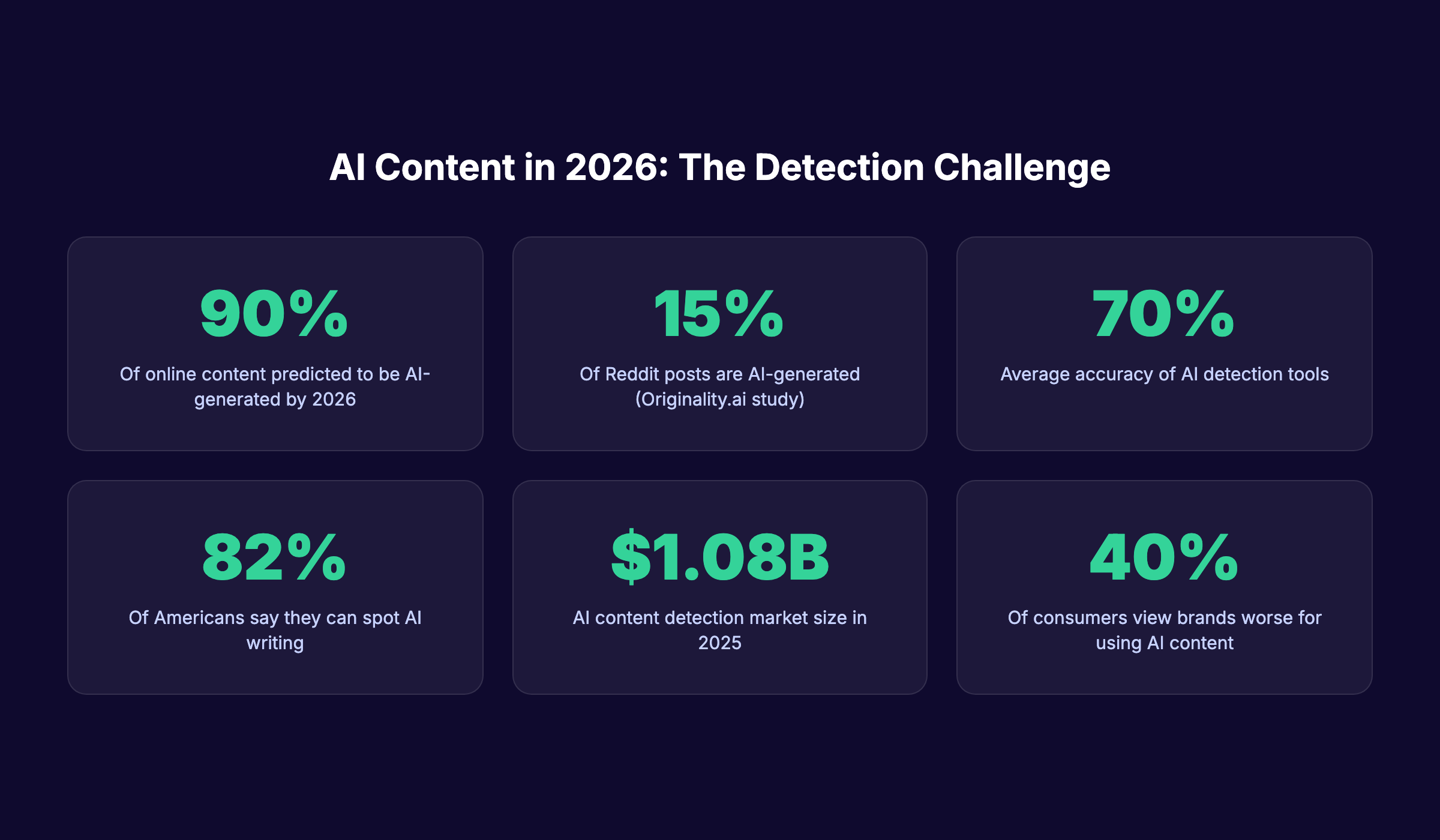

That question matters more now than ever. One widely cited forecast projected that as much as 90% of online content could be synthetically generated by 2026. Knowing how to detect AI generated content is no longer optional for marketers, editors, educators, and business owners.

The problem is that detection is hard. AI writing has improved dramatically. The best models produce text that passes casual reading without a second glance. And detection tools still average only 70% accuracy.

We publish 3,500+ blog posts across 70+ industries. We see AI-generated drafts every day. We also see what separates content that ranks from content that reads like a machine wrote it.

Here is what you will learn in this guide:

- 7 manual signals that reveal AI-generated text

- How detection tools measure perplexity and burstiness

- Which detection tools are most accurate in 2026

- How to spot AI-generated images and video

- What the accuracy data actually shows (and its limits)

- Why detection matters for SEO and brand trust

What AI Generated Content Looks Like in 2026

AI-generated content in 2026 is not the clunky, obviously robotic text from 2022. Models like GPT-5, Claude 4, and Gemini 2.5 produce writing that mimics human tone, structure, and even personality.

The output is polished. Sentences flow. Grammar is correct. On the surface, it reads well.

But patterns remain. AI models predict the next most probable word in a sequence. That statistical foundation creates detectable footprints, even in the best outputs.

The Scale of AI Content

The numbers tell the story. According to research from Originality.ai, 15% of Reddit posts are now AI-generated. That is up from 13% in 2024.

Analysts estimate that 30 to 40% of text on active web pages originates from AI systems. The AI content detection market reached $1.08 billion in 2025 and is still growing.

These figures describe the web as it is today, not a future projection. The need to identify synthetic content is real, whether you are hiring writers, reviewing student work, or auditing your own content marketing strategy.

Why Casual Reading Fails

Most people believe they can spot AI writing. A 2026 consumer study from SchemaNinja found that 82.1% of Americans say they notice AI-generated text. But self-reported detection and verified detection are not the same thing.

The same study found that only 71.63% of respondents correctly identified AI-generated images. For certain categories (like AI-generated Eiffel Tower photos), the detection rate dropped to just 18%.

Casual reading catches obvious AI. It misses the rest. A structured detection approach works better.

Publish content that does not need detection workarounds. Stacc writes and publishes 30 SEO articles per month that read like a human expert wrote them. Start for $1 →

7 Manual Signs of AI-Generated Text

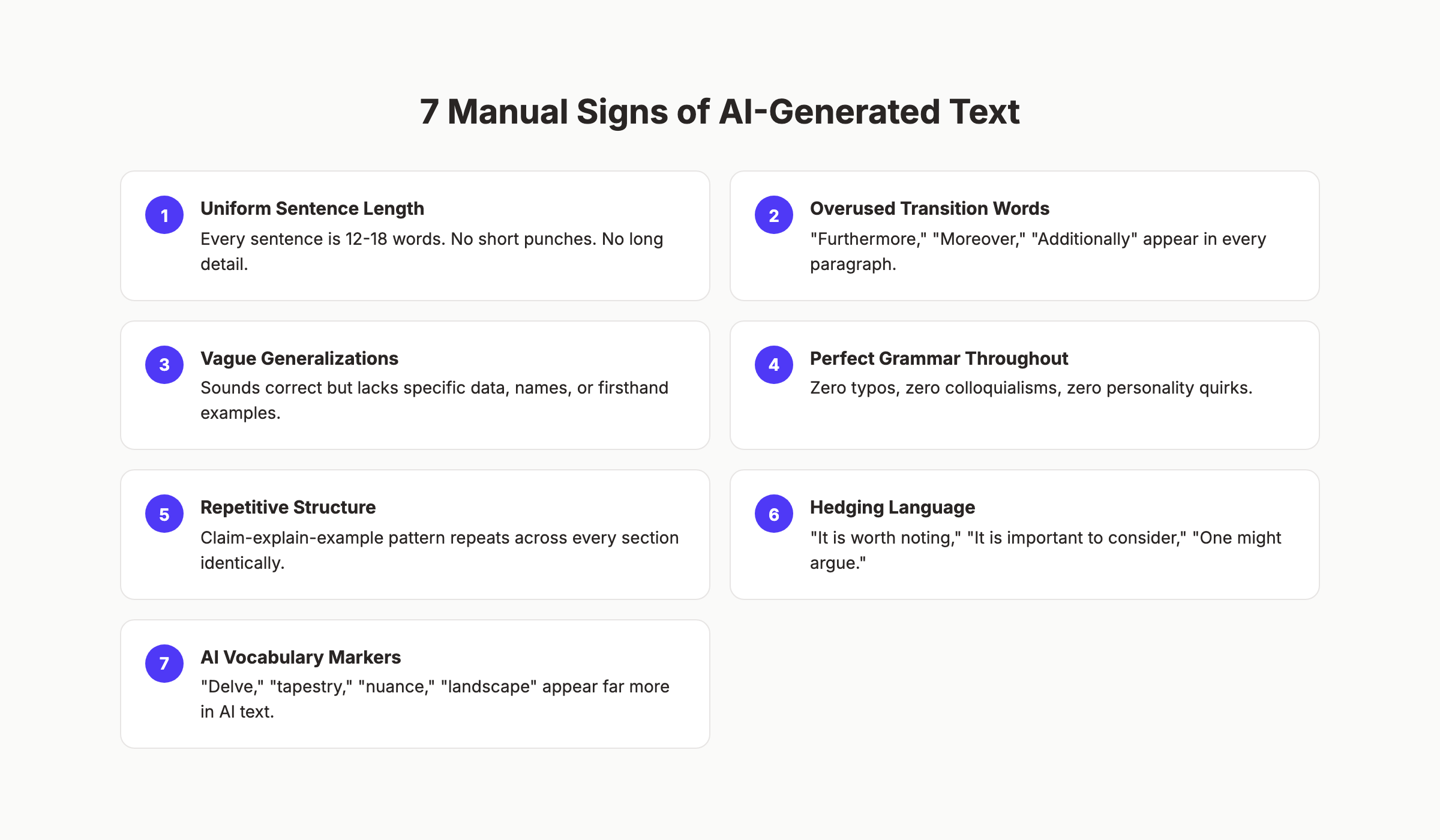

Before opening any tool, train your eye. These 7 signals appear in most unedited AI text. Any one of them can show up in human writing on its own. Finding 3 or more together is a strong indicator.

1. Uniform Sentence Length

Human writers vary sentence length naturally. Some sentences are 4 words. Others stretch to 20.

AI models produce text with low burstiness. Sentences cluster between 12 and 18 words. The rhythm is monotonous. Read a paragraph out loud. If every sentence hits the same beat, that is a signal.

2. Overused Transition Words

AI text overuses “Furthermore,” “Moreover,” “Additionally,” and “In conclusion.” These words appear at the start of paragraphs with predictable regularity.

Human writers use transitions sparingly. They rely on logical flow rather than explicit connectors. If every new paragraph begins with a transition word, question the source.

3. Vague Generalizations

AI excels at sounding correct. It struggles with being specific.

Statements like “many businesses find this approach effective” or “research shows positive results” are classic AI patterns. The claims are technically defensible. They include zero specifics.

Human experts name the study. They cite the number. They reference a client or example. Vague authority claims without sources point to AI.

4. Perfect Grammar Throughout

Real human writing contains minor imperfections. A sentence fragment for emphasis. A slightly informal construction. A word choice that reflects regional speech.

AI produces grammatically perfect prose. Every subject agrees with its verb. Every comma is placed correctly. This level of consistency is rare in natural writing, even from skilled authors.

5. Repetitive Paragraph Structure

AI defaults to a repeating pattern: claim, explanation, example. Every section uses the same template. The result reads like a textbook summary.

Human writers break structure. They open with a question. They use a one-sentence paragraph for impact. They place the example first and the claim after. Structural monotony flags AI involvement.

6. Hedging Language

AI protects itself from being wrong. Phrases like “it is worth noting,” “one might argue,” and “it is important to consider” are overrepresented in AI text. These phrases add words without adding meaning.

Confident human writers make direct claims. They say “this works” instead of “this could potentially be effective.” Excessive hedging suggests machine origin. The difference between content that ranks and content that flounders often comes down to directness.

7. AI Vocabulary Markers

Certain words appear far more frequently in AI-generated text than in human writing. “Delve,” “tapestry,” “nuance,” and “landscape” are statistically overrepresented in outputs from large language models.

Linguists have identified these as vocabulary markers. One or two appearances are fine. But if “delve” appears 3 times in a 1,500-word article, that is not a coincidence.
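Signals 2, 6, and 7 are easy to count. Here is a minimal Python sketch that tallies them. The word lists come straight from this guide. The function names are hypothetical, and the counts are a prompt for manual review, not a verdict.

```python
import re

# Word and phrase lists taken from signals 2, 6, and 7 in this guide.
TRANSITIONS = ("furthermore", "moreover", "additionally", "in conclusion")
HEDGES = ("it is worth noting", "one might argue", "it is important to consider")
MARKERS = ("delve", "tapestry", "nuance", "landscape")


def count_phrase(text: str, phrase: str) -> int:
    """Count whole-word, case-insensitive matches of the exact phrase.

    Inflected forms such as "delves" are not counted by this simple version.
    """
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return len(re.findall(pattern, text, flags=re.IGNORECASE))


def signal_counts(text: str) -> dict:
    """Return raw counts for three of the manual signals."""
    # Assumption: paragraphs are separated by a blank line.
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    # Signal 2: paragraphs that open with a transition word.
    transition_openers = sum(
        1 for p in paragraphs if p.lower().startswith(TRANSITIONS)
    )
    return {
        "paragraphs": len(paragraphs),
        "transition_openers": transition_openers,
        "hedges": sum(count_phrase(text, h) for h in HEDGES),
        "markers": {m: count_phrase(text, m) for m in MARKERS},
    }
```

Call `signal_counts(article_text)` and compare the numbers against the article length. A high share of transition openers or repeated marker words is a reason to read more closely.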

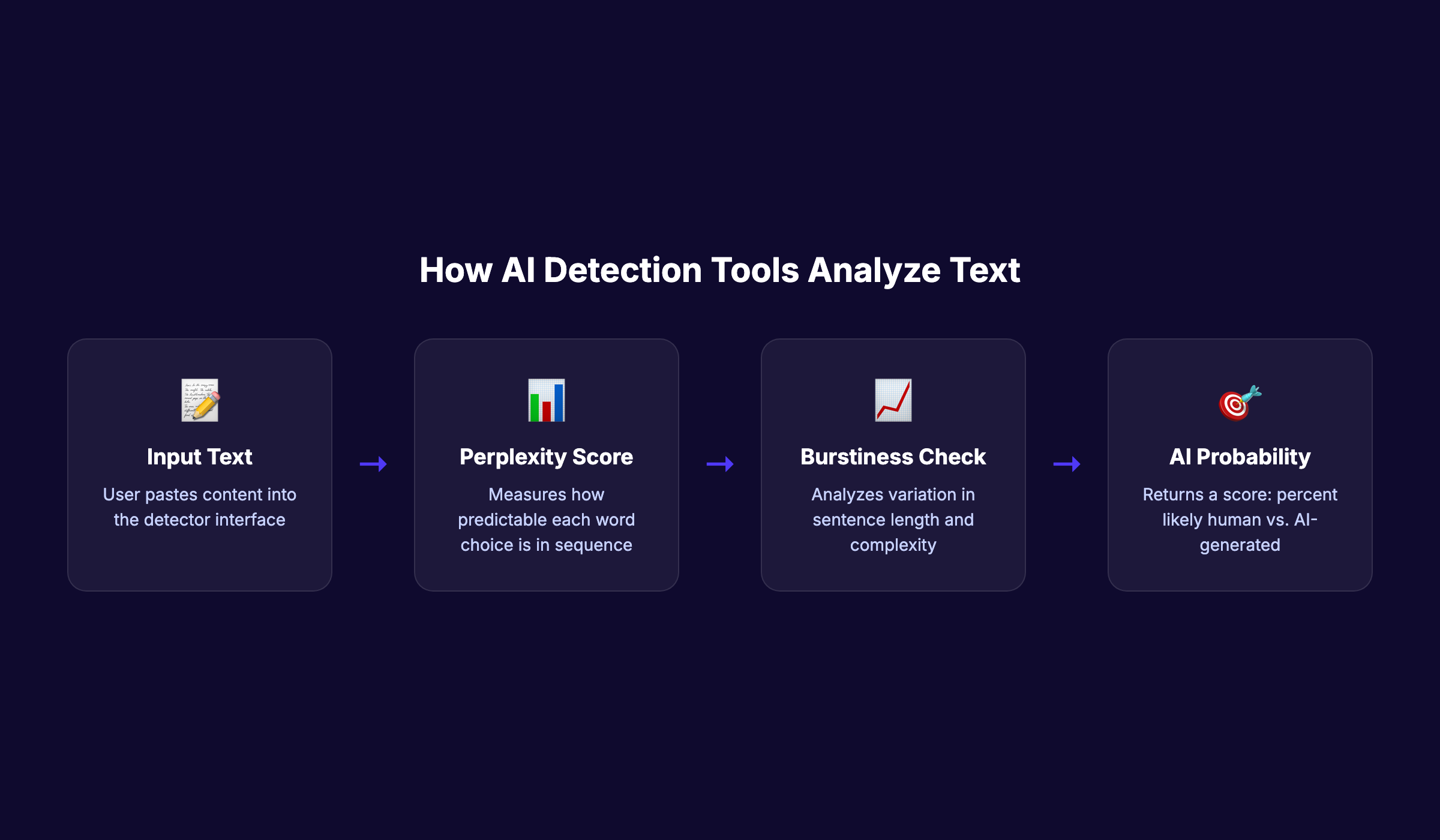

How AI Content Detection Tools Work

AI detection tools do not use magic. They measure 2 primary signals: perplexity and burstiness. Understanding these signals helps you interpret results accurately.

Perplexity: Word Predictability

Perplexity measures how surprising each word choice is in context. AI models favor statistically probable next words. This creates text with low perplexity.

Human writers make unexpected word choices. They use metaphors. They reference personal experiences. They pick a slightly unusual synonym. These choices raise perplexity.

A low perplexity score across a full document suggests that the text was generated by a model optimizing for probability. High perplexity suggests human authorship.
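The standard definition of perplexity is the exponential of the average negative log-probability per token. The sketch below shows that arithmetic in Python. The probability lists are invented for illustration. In practice they would come from a language model scoring the text, and commercial detectors do not publish exactly how they compute their scores.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp(average negative log-probability per token)."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical per-token probabilities, for illustration only.
predictable = [0.9, 0.8, 0.85, 0.9]   # each word was the "expected" choice
surprising = [0.2, 0.05, 0.4, 0.1]    # several unexpected word choices

low = perplexity(predictable)
high = perplexity(surprising)
```

With these two lists, the predictable sequence scores lower than the surprising one. That is the pattern detectors look for across a full document.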

Burstiness: Sentence Variation

Burstiness measures the variation in sentence complexity and length. Human writing is naturally bursty. A 6-word sentence follows a 22-word sentence. A simple declaration follows a complex conditional.

AI text is low-burstiness. Sentences maintain a consistent complexity level. The variation is narrow. Detection tools flag this uniformity.
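Burstiness has no single agreed formula. One rough proxy is the coefficient of variation of sentence length: standard deviation divided by mean. The Python sketch below uses that proxy. It is an assumption for illustration, not the method any specific detector uses, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text):
    """Word counts per sentence, using a naive split on ., !, or ?."""
    # Abbreviations like "e.g." will confuse this splitter.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (0.0 = perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.stdev(lengths) / statistics.mean(lengths)
```

A paragraph where every sentence has the same word count returns 0.0. Mixed short and long sentences push the value up. Compare drafts against writing you know is human rather than against a fixed cutoff.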

Pattern Matching Against Training Data

Some tools go beyond perplexity and burstiness. They compare content against massive datasets of known AI outputs. They analyze grammatical structures, phrase frequency, and syntactic patterns that are common in machine-generated text.

This approach works well for unedited AI content. It struggles with AI text that has been humanized through editing. That limitation matters for anyone using AI as a drafting assistant.

The Confidence Score

Detection tools return a probability, not a binary answer. A result of “87% likely AI” means the tool is fairly confident. A result of “52% likely AI” means the tool is uncertain.

No tool should be treated as a definitive verdict. Cross-referencing multiple tools improves reliability. We cover specific tools in the next section.

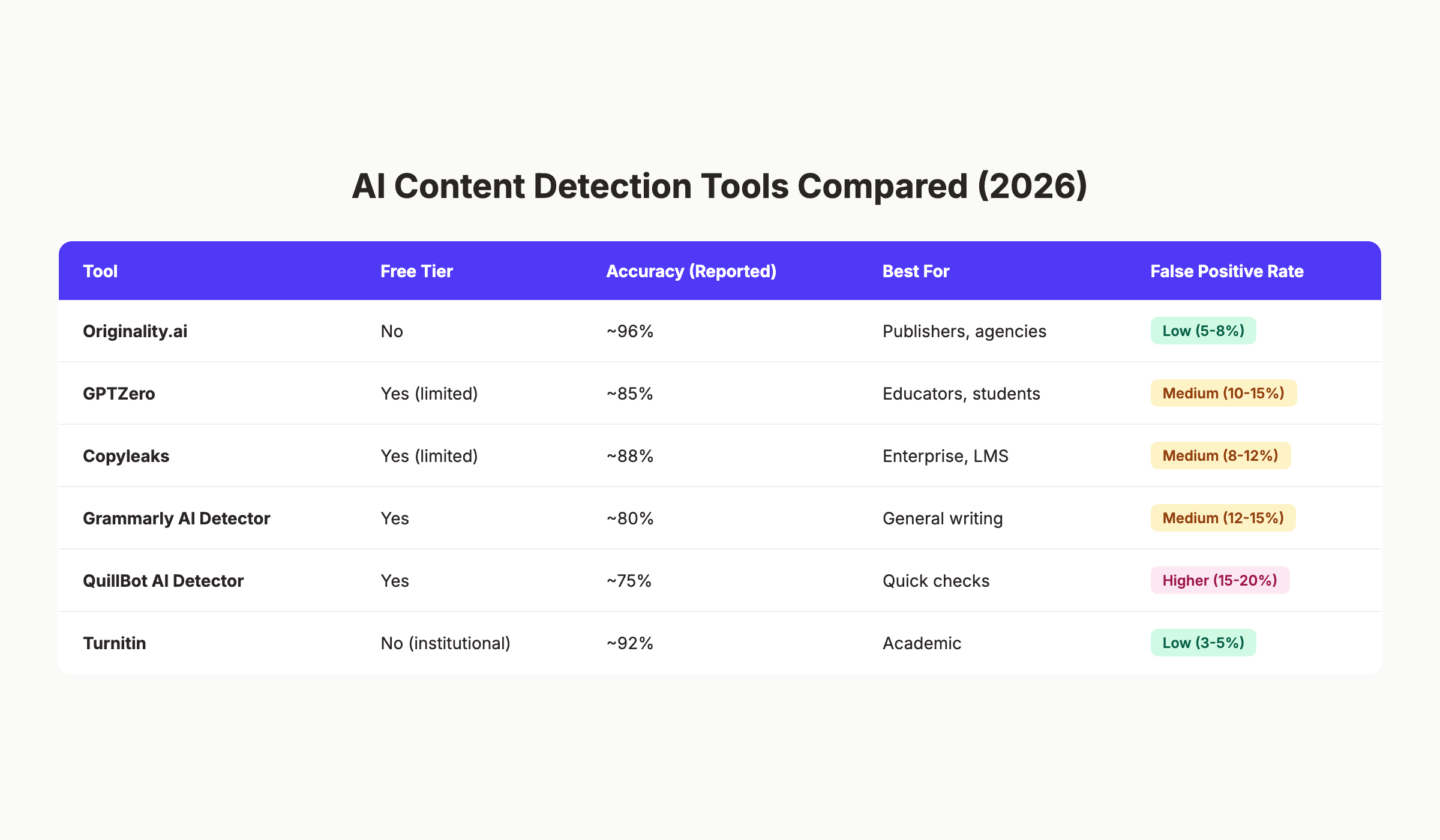

Best AI Detection Tools Compared

Not all AI detectors deliver the same results. Accuracy, false positive rates, pricing, and target audience vary significantly. Here is how the top tools compare in 2026.

Originality.ai

Originality.ai is purpose-built for content marketers and publishers. It reports the highest accuracy rates in independent tests, at approximately 96%, detecting content from GPT-5, Claude 4 Opus, and Gemini 2.5.

The tool has no free tier. Plans start at $14.95 per month for 2,000 credits. Each credit scans approximately 100 words. For high-volume editorial teams, this is the strongest option.

False positive rates range from 5 to 8%. That is among the lowest in the industry.

GPTZero

GPTZero is the most widely used free detector. Over 10 million users have scanned content through the platform. It works with over 100 organizations in education, hiring, publishing, and legal fields.

Accuracy sits around 85% for unedited AI text. False positive rates are higher than Originality.ai, ranging from 10 to 15%. The free tier limits scans to 5,000 characters at a time.

GPTZero is best for educators and individuals who need quick checks without a subscription.

Copyleaks

Copyleaks combines AI detection with plagiarism detection. The platform integrates directly with learning management systems, making it a popular choice for universities.

Reported accuracy is approximately 88%. The dual-purpose functionality (plagiarism plus AI detection) makes it efficient for academic settings. Enterprise pricing is available on request.

Grammarly AI Detector

Grammarly added AI detection as a free feature within its writing assistant. The detector analyzes text pasted into the Grammarly editor and returns a confidence score.

Accuracy is approximately 80%. This makes it useful for quick checks. It is not reliable enough for high-stakes decisions. The main advantage is convenience. If you already use Grammarly, the AI detector requires zero additional setup.

QuillBot AI Detector

QuillBot offers a free AI detector alongside its paraphrasing tool. Accuracy is lower than competitors at approximately 75%. False positive rates are the highest on this list, ranging from 15 to 20%.

Use QuillBot as a secondary check, not a primary one. The paraphrasing tool is its strength. The AI detector is a convenience add-on.

Turnitin

Turnitin serves the academic market exclusively. Institutions purchase licenses. Individual users cannot access the tool directly.

Accuracy is strong at approximately 92%. False positive rates are the lowest available at 3 to 5%. Turnitin has the advantage of comparing submitted text against its massive database of student papers, academic journals, and known AI outputs.

| Tool | Free Tier | Accuracy | Best For | False Positive Rate |
|---|---|---|---|---|
| Originality.ai | No | ~96% | Publishers, agencies | Low (5-8%) |
| GPTZero | Yes (limited) | ~85% | Educators | Medium (10-15%) |
| Copyleaks | Yes (limited) | ~88% | Enterprise, LMS | Medium (8-12%) |
| Grammarly | Yes | ~80% | General writing | Medium (12-15%) |
| QuillBot | Yes | ~75% | Quick checks | Higher (15-20%) |
| Turnitin | No (institutional) | ~92% | Academic | Low (3-5%) |

Always use at least 2 tools before making a judgment. A single tool result is a data point, not a verdict.
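False positive rates are easier to judge as counts. The Python sketch below converts the ranges from the table into expected false flags for a batch of human-written articles. It assumes the reported rates apply evenly to your content, which may not hold. The function name is hypothetical.

```python
# False positive ranges in percent (low, high), copied from the table above.
FALSE_POSITIVE_RANGES = {
    "Originality.ai": (5, 8),
    "GPTZero": (10, 15),
    "Copyleaks": (8, 12),
    "Grammarly": (12, 15),
    "QuillBot": (15, 20),
    "Turnitin": (3, 5),
}


def expected_false_flags(human_articles, fp_range):
    """Expected number of human-written articles wrongly flagged as AI."""
    low_pct, high_pct = fp_range
    return (human_articles * low_pct / 100, human_articles * high_pct / 100)


# Example: a batch of 200 articles that were all written by humans.
for tool, fp_range in FALSE_POSITIVE_RANGES.items():
    low, high = expected_false_flags(200, fp_range)
    print(f"{tool}: {low:.0f} to {high:.0f} false flags")
```

For 200 human-written articles, the Turnitin range works out to 6 to 10 false flags and the QuillBot range to 30 to 40. That gap is why a single flag should never settle the question.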

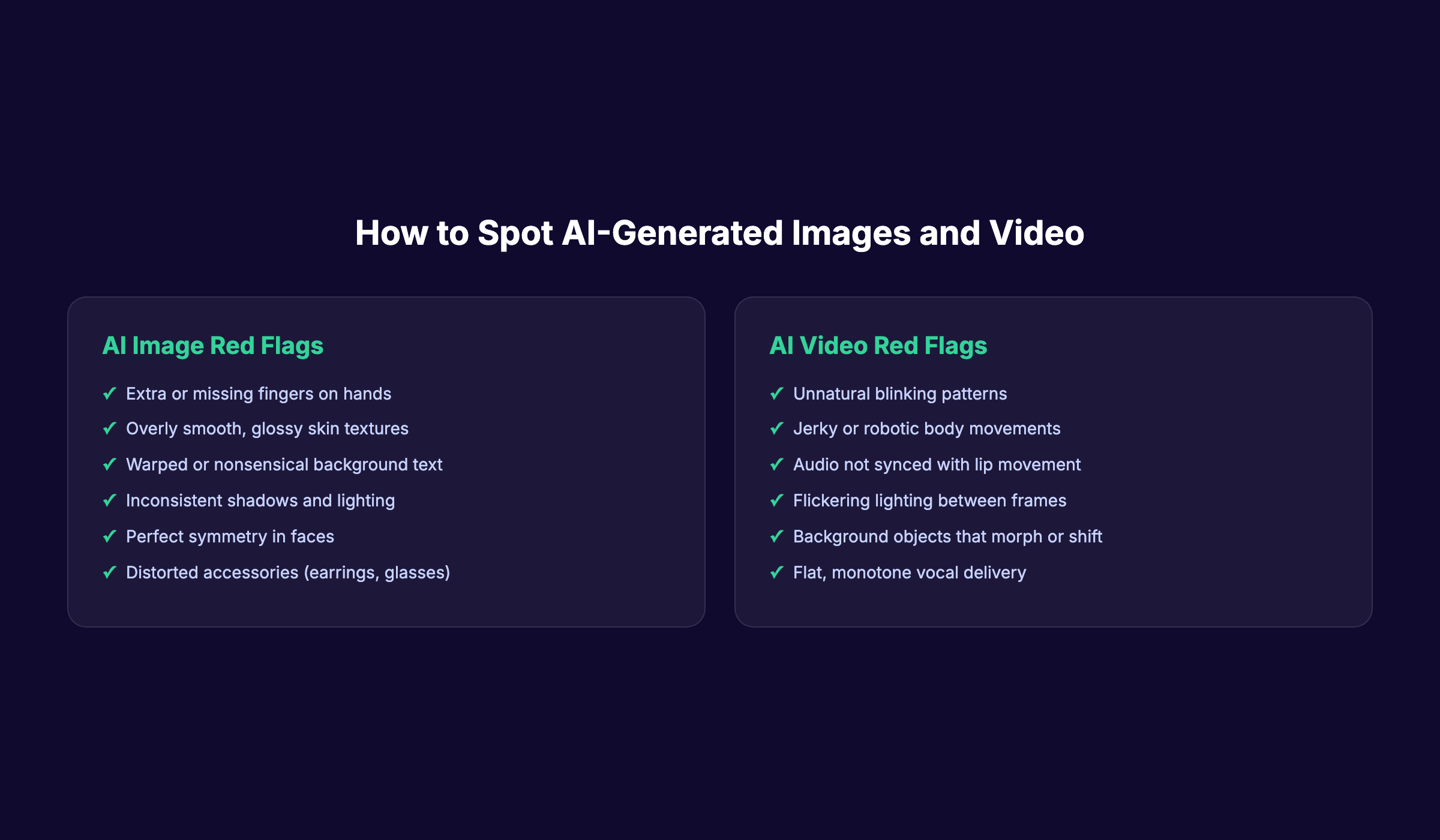

How to Detect AI-Generated Images and Video

Text detection gets the most attention. But synthetic images and video are spreading just as fast. The detection approach differs from text analysis.

AI Image Red Flags

AI image generators (Midjourney, DALL-E 3, Stable Diffusion) have improved dramatically. But several tells remain consistent.

Hands and fingers. AI still struggles with hands. Look for extra fingers, missing fingers, or fingers that merge into each other. This is the single most reliable visual indicator.

Skin texture. AI-generated faces often have unnaturally smooth, glossy skin. Pores, fine lines, and imperfections are absent. The result looks more like a retouched magazine cover than a photograph.

Background text. Any text visible in an AI-generated image is usually gibberish. Street signs, book covers, and storefront names contain nonsensical characters. This is a quick tell.

Symmetry and lighting. AI tends toward perfect facial symmetry, which is rare in real photographs. Shadows may fall in inconsistent directions. Light sources may not match across the scene.

Accessories. Earrings, glasses, and jewelry often contain distortions. One earring may look different from the other. Glasses frames may merge with skin or hair.

AI Video Red Flags

AI-generated video adds temporal indicators that still images do not have.

Blinking patterns. AI-generated faces often blink at unnatural intervals. Some do not blink at all. Others blink too rapidly or too slowly.

Body movement. Motion in AI video is often slightly jerky. Hands gesture without purpose. Head turns are too smooth or too abrupt.

Audio sync. Lip movements may not match the spoken words precisely. The audio may sound flat, with a monotone delivery that lacks natural speech rhythm.

Frame consistency. Watch backgrounds between frames. Objects may shift position, change color, or disappear entirely. These frame-to-frame inconsistencies are difficult for current AI video models to eliminate.

Metadata and Reverse Image Search

Beyond visual inspection, check the metadata. AI-generated images often lack EXIF data that real camera photos include (camera model, GPS coordinates, shutter speed).

Run a reverse image search through Google Images or TinEye. If the image appears nowhere else online and has no source attribution, treat it with suspicion. Content published as part of a strong blog SEO strategy should always use original, attributed visuals.

Detection Accuracy: What the Data Says

Detection tools are useful. They are not infallible. Understanding their limitations prevents costly false positives and overconfident conclusions.

The 70% Problem

The average AI detection tool achieves approximately 70% accuracy. That means roughly 3 out of every 10 assessments are wrong. For a team reviewing 100 articles per month, that is about 30 incorrect judgments.

The errors go both ways. False positives flag human-written content as AI. False negatives miss AI-generated content entirely.

Non-Native English Writers Face Bias

Detection tools show significant bias against non-native English speakers. Research has found false positive rates as high as 70% for non-native English writing. These writers tend to use simpler sentence structures and more common vocabulary, which mirrors AI output patterns.

This is a critical flaw. Organizations that rely on AI detectors for hiring or academic integrity risk penalizing legitimate writers. Any detection protocol must account for this bias.

Edited AI Text Defeats Most Detectors

AI detection tools perform best against raw, unedited AI output. When a human edits AI-generated text, adding personal examples, varying sentence structure, and injecting specific data, detection accuracy drops sharply.

Our guide on how to humanize AI content covers the editing techniques that improve quality. These same techniques reduce detectability. For brands using AI as a drafting tool, the edited output is often indistinguishable from fully human-written content.

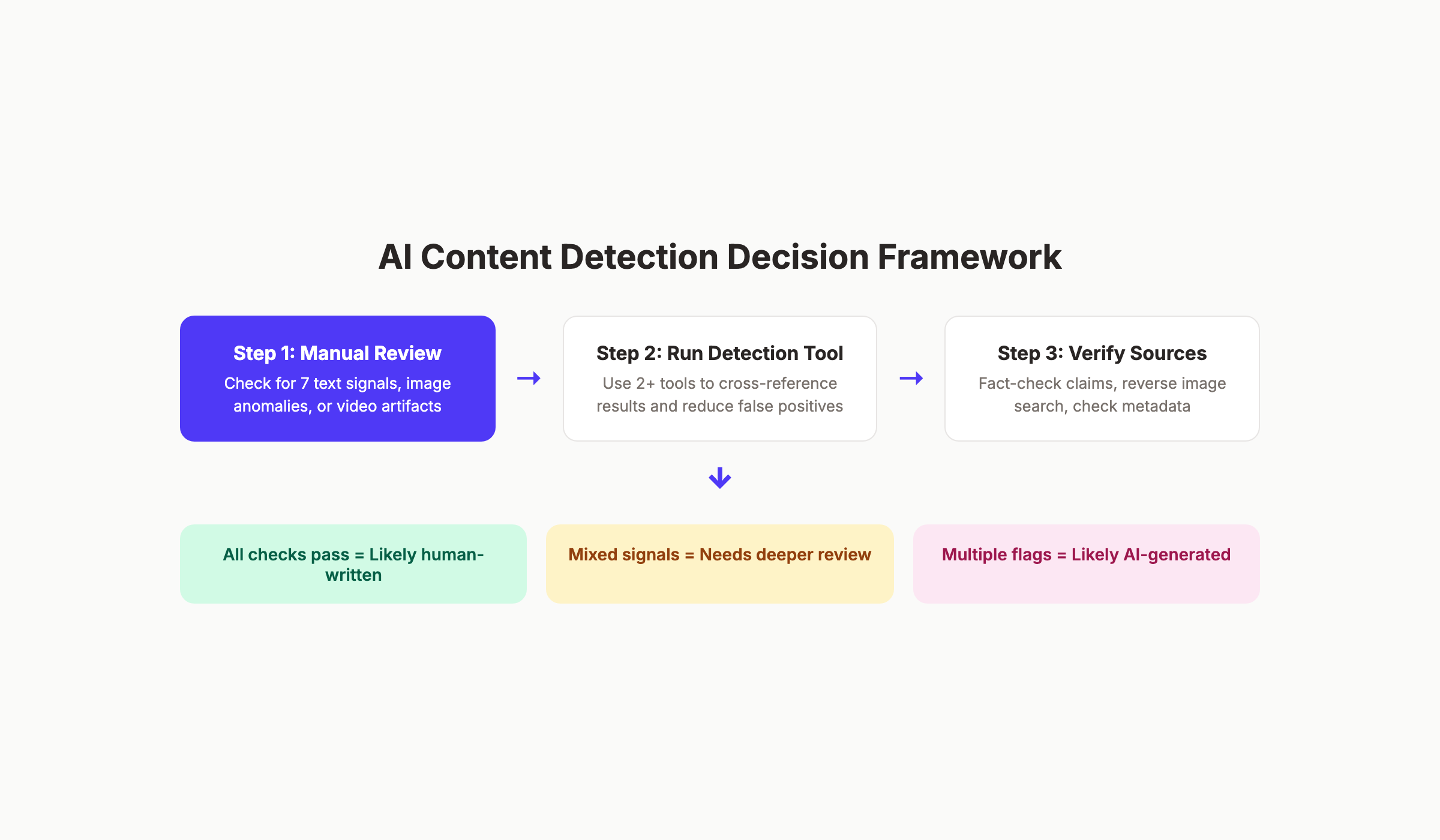

Cross-Tool Validation Improves Reliability

Running content through a single detector gives you one opinion. Running it through 3 detectors gives you a pattern.

If Originality.ai, GPTZero, and Copyleaks all flag the same content as AI-generated, the probability is high. If one tool flags it and two do not, the result is inconclusive.

Build a process:

- Run content through Detector 1 (primary tool)

- Run content through Detector 2 (secondary tool)

- Perform manual review using the 7-signal checklist

- Check for source citations and specific data points

- Make a final determination based on all evidence

This multi-layer approach reduces both false positives and false negatives. It takes more time. It is also more accurate than any single tool.
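The agreement rule can be written down in a few lines. This Python sketch takes scores you have already collected from each detector and applies the rule described above. The 0.5 cutoff for "flagged" is an assumption, and so is treating any split result as inconclusive. No detector API is called here.

```python
def cross_tool_verdict(scores, threshold=0.5):
    """Combine AI-probability scores (0.0 to 1.0) from several detectors.

    scores: dict mapping a detector name to the probability it reported.
    threshold: assumed cutoff above which a tool counts as "flagging" the text.
    """
    if not scores:
        raise ValueError("need at least one detector score")
    flagged = [name for name, score in scores.items() if score >= threshold]
    if len(flagged) == len(scores):
        return "likely AI"      # every tool agrees
    if not flagged:
        return "not flagged"    # no tool raised a concern
    return "inconclusive"       # tools disagree, so review manually


# Hypothetical scores copied by hand from three detector reports.
verdict = cross_tool_verdict(
    {"Originality.ai": 0.91, "GPTZero": 0.34, "Copyleaks": 0.28}
)
```

In this example one tool flags the text and two do not, so the verdict is inconclusive. That result sends the article to manual review with the 7-signal checklist.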

Why Detection Matters for SEO and Brand Trust

Knowing how to detect AI generated content is not just an academic exercise. It has direct consequences for search rankings, audience trust, and brand reputation.

Google and E-E-A-T

Google does not penalize AI content by default. Google penalizes low-quality content. The distinction matters.

The E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) evaluates content quality regardless of how it was produced. AI content that lacks firsthand experience, specific expertise, and trustworthy sourcing will rank poorly. Human content with the same problems will rank poorly too.

Detection matters because it helps you identify content that lacks E-E-A-T signals. If your team produces AI-assisted SEO content, you need to verify that the output meets quality standards before publishing.

Consumer Trust Is Declining

The 2026 SchemaNinja study found that 50.1% of consumers view AI-written content negatively. Another 40.4% say they view brands worse when they discover AI-generated content.

These numbers are not small. Roughly half of your audience views AI-written content negatively, and about 40% think less of a brand when they discover it. For brands building long-term authority, this perception gap matters.

Consumers do approve of AI for support functions. Research (55.8%), brainstorming (53.7%), and draft editing (50.8%) are acceptable uses. Fully AI-generated, customer-facing content is not.

The takeaway: use AI as a tool, not a replacement. Detect and edit AI outputs before publishing. Our AI content statistics page covers the full data set on consumer sentiment.

Content Auditing Requires Detection Skills

If you are performing a content audit, AI detection is part of the process. Especially if you work with freelance writers, agencies, or inherited content from previous teams.

Undetected AI content can cause content decay over time. Generic AI articles that lack unique data or perspective lose rankings as search engines get better at evaluating quality.

Review your existing content library. Flag articles that show multiple AI signals. Prioritize those for rewriting or enrichment. The content marketing statistics show that refreshed, high-quality content outperforms generic content by a significant margin.

The California AI Transparency Act

California’s AI Transparency Act (SB 942), effective January 2026, requires AI providers to embed invisible digital markers in AI-generated images. These markers include provider names, timestamps, and system identifiers.

This legislation signals where regulation is heading. Brands that produce AI content without disclosure face increasing legal and reputational risk. Detection skills help you stay ahead of regulatory requirements.

Building Your Detection Workflow

Theory without process is useless. Here is a practical workflow for integrating AI detection into your content operations.

For Editorial Teams

- Establish a detection policy. Define what percentage of AI involvement is acceptable.

- Train editors on the 7 manual signals.

- Select 2 detection tools (one primary, one secondary).

- Run every submitted article through both tools.

- Cross-reference tool results with manual review.

- Flag content that scores above 60% AI probability for revision.

- Document decisions for consistency.
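The last two steps, flagging and documenting, can share one record. Here is a minimal Python sketch. The 60% threshold comes from the list above. Flagging when either tool exceeds it is an assumption, and the field names are hypothetical.

```python
from dataclasses import dataclass

REVISION_THRESHOLD = 0.60  # "above 60% AI probability" from the workflow


@dataclass
class ReviewRecord:
    """One documented decision per submitted article."""
    article_id: str
    primary_score: float     # AI probability from the primary tool (0.0 to 1.0)
    secondary_score: float   # AI probability from the secondary tool
    manual_signals: int      # how many of the 7 manual signals the editor found
    needs_revision: bool


def review(article_id, primary_score, secondary_score, manual_signals):
    """Flag for revision if either tool scores above the threshold (assumed rule)."""
    needs_revision = max(primary_score, secondary_score) > REVISION_THRESHOLD
    return ReviewRecord(
        article_id, primary_score, secondary_score, manual_signals, needs_revision
    )
```

Store the records in a spreadsheet or database so that every editor applies the same rule and past decisions can be audited.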

For Business Owners

If you outsource content, detection protects your investment. Run spot checks on 20% of delivered content each month. If a freelancer or agency consistently delivers AI-flagged content, that is a conversation worth having.

You should not need to play detective with your own content team. Working with a service that publishes quality content from the start eliminates detection concerns. That is what separates done-for-you publishing from raw AI output.

For Educators

Use detection as one input among many. Combine tool results with in-person conversations about the work. Ask students to explain their reasoning or expand on a specific section. AI-generated submissions often fall apart under follow-up questions.

Never use a single detection tool result as the sole basis for an academic integrity decision. The false positive rates are too high. Our blogging statistics page shows that AI content creation is now mainstream. Teaching students to use AI responsibly matters more than catching every AI-assisted paragraph.

Frequently Asked Questions

Can Google detect AI-generated content?

Google has not confirmed a specific AI detection system in its ranking algorithm. Google evaluates content quality through its E-E-A-T framework. AI content that meets quality standards can rank. AI content that lacks experience, specificity, and trustworthiness will not. The AI search changes in 2026 favor content with original data and firsthand expertise.

What is the most accurate AI content detector in 2026?

Originality.ai reports the highest accuracy rates at approximately 96%, with false positive rates between 5 and 8%. Turnitin follows at approximately 92% accuracy for academic content. No tool achieves 100% accuracy. Always cross-reference results from multiple tools.

Do AI detectors work on content that has been edited by a human?

Accuracy drops significantly when AI-generated text is edited by a human. Adding personal examples, varying sentence structure, and inserting specific data points reduces detection rates. Our guide on humanizing AI content explains this process in detail.

Is it illegal to publish AI-generated content?

Publishing AI-generated content is not illegal in most jurisdictions. California’s AI Transparency Act (SB 942) requires digital markers on AI-generated images starting January 2026. The EU AI Act includes disclosure requirements for certain AI-generated content. Regulations are evolving. Check local requirements.

How do I know if a freelance writer is using AI?

Run submitted content through 2 detection tools. Check for the 7 manual signals described in this guide. Look for specific data points, named sources, and unique perspectives. AI-generated content tends to be generalized and lacks firsthand examples. If a writer consistently delivers content that flags across multiple tools, request explanations or writing process documentation.

Does AI-generated content hurt SEO?

AI-generated content does not automatically hurt SEO. Low-quality content hurts SEO, regardless of how it was produced. The risk with unedited AI content is that it lacks the specificity, original data, and experience signals that Google rewards through E-E-A-T. Published AI content should always be reviewed and enriched before going live.

Your Next Steps

The ability to detect AI generated content is a skill, not a tool purchase. Tools help. Manual signals help more. Combining both creates a reliable system.

Build the detection workflow that fits your role. Train your team on the 7 manual signals. Pick 2 detection tools. And focus your energy on producing content that never triggers detection concerns in the first place.

Quality content, whether human-written or AI-assisted, shares the same traits: specific data, original perspective, and genuine expertise. Aim for that standard.

Written and published by Stacc. We publish 3,500+ articles per month across 70+ industries. All data verified against public sources as of March 2026.