Multi-Agent SEO Workflows: The Complete Guide

Learn how to build multi-agent SEO workflows that automate research, writing, optimization, and publishing. 8-chapter guide. Updated April 2026.

Siddharth Gangal • 2026-04-02 • SEO Tips

In This Article

A single AI agent writing blog posts is not a workflow. It is a shortcut that produces mediocre content at every stage.

Multi-agent SEO workflows split the work. One agent researches keywords. Another builds outlines. A third writes the draft. A fourth optimizes for search. A fifth checks quality. Each agent does one thing well. The output is better than any single agent could produce alone.

This distinction matters. According to BCG research, multi-agent workflows cut low-value SEO work by 25% to 40%. Content strategy tasks that took 40 to 48 hours now finish in 4 to 8 hours with human review included.

We have published 3,500+ blogs across 70+ industries using multi-agent workflows. This guide covers the exact system we use and how to build your own.

Here is what you will learn:

- What multi-agent SEO workflows are and why they outperform single-agent setups

- The 8 specialized agent roles every workflow needs

- How to design your workflow architecture from scratch

- Step-by-step instructions for building your first pipeline

- Which orchestration platforms work best for SEO teams

- The 7 most common mistakes and how to avoid each one

- How to measure ROI from your multi-agent system

- Where this technology is heading in the next 12 months

What Multi-Agent SEO Workflows Actually Are

A multi-agent SEO workflow is a system where multiple specialized AI agents handle different stages of the SEO content pipeline. Each agent has a defined role, specific inputs, and a measurable output that feeds into the next agent.

Think of it as an assembly line for content.

Single Agent vs. Multi-Agent

| Approach | How It Works | Output Quality |

|---|---|---|

| Single agent | One AI does research + writing + optimization | Mediocre at everything |

| Multi-agent | Specialized agents handle each stage | Strong at every stage |

| Human team | Specialists collaborate on each task | Highest quality, slowest speed |

| Multi-agent + human review | Agents draft, humans approve | Best balance of speed and quality |

A single agent asked to “write an SEO blog post about keyword research” will skip competitive analysis, ignore search intent, miss internal linking opportunities, and produce generic content. It tries to do 8 jobs in 1 prompt.

A multi-agent workflow assigns each job to a specialist. The research agent analyzes SERPs. The outline agent structures the content. The writing agent produces the draft. The optimization agent handles on-page SEO. Each output is better because each agent focuses on one task.

The Assembly Line Model

Multi-agent SEO workflows follow a sequential pipeline:

- Research stage — Keyword analysis, competitor audit, search intent mapping

- Planning stage — Outline creation, internal link mapping, image planning

- Writing stage — Draft production following brand voice and content brief

- Optimization stage — SEO elements, schema markup, meta tags

- Quality stage — Fact-checking, readability, AI content detection

- Publishing stage — CMS upload, formatting verification, social distribution

Each stage has a dedicated agent. No agent tries to handle more than one stage.

Why Single-Agent SEO Fails at Scale

Most teams start with a single AI agent for content. It works for the first 5 articles. Then the cracks appear.

The Quality Ceiling Problem

Single agents hit a quality ceiling around article 10 to 15. The content starts sounding the same. The research becomes shallow. The keyword targeting drifts. Internal links get missed. Formatting breaks down.

This happens because a single agent carries too much context in one session. It cannot simultaneously excel at competitive research, creative writing, technical SEO, and quality assurance. No human does all 4 well either.

The Consistency Problem

Article 1 might have 15 internal links. Article 30 might have 2. Article 1 might target the right search intent. Article 30 might drift toward a different audience entirely.

Single agents do not maintain consistent quality standards across large batches. They have no memory between sessions and no feedback mechanism to correct drift.

The Speed Problem

A single agent processes tasks sequentially within one session. Research, outline, write, optimize, check. Each article takes the full cycle time.

Multi-agent workflows run stages in parallel where possible. While the writing agent drafts article 5, the research agent works on article 6. The optimization agent polishes article 4. Throughput increases by 3 to 5 times without any quality loss.

According to Frase.io’s 2026 guide on AI agents for SEO, 90.3% of marketing organizations already use AI agents. The teams seeing the best results use specialized multi-agent systems, not single general-purpose agents.

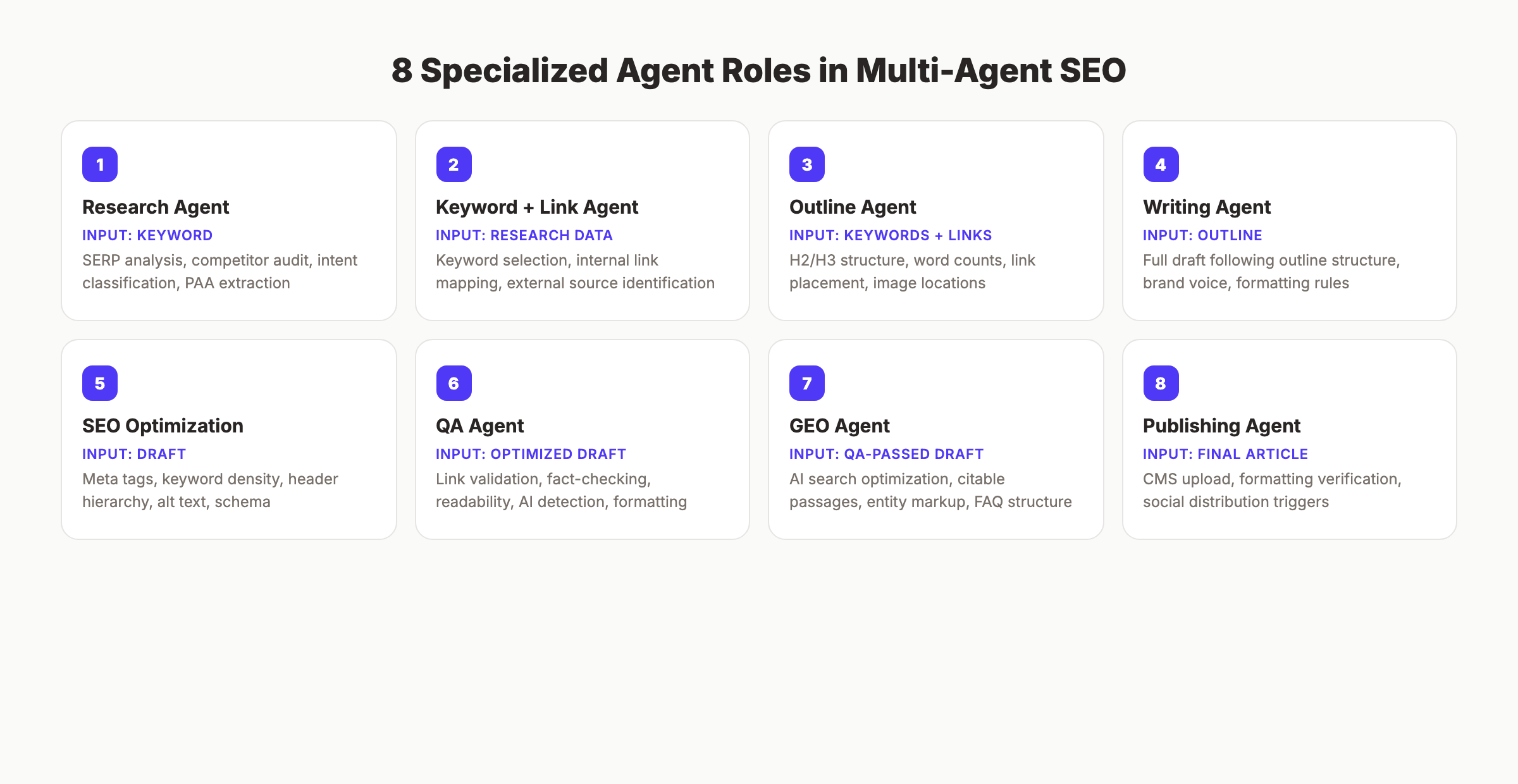

The 8 Agent Roles in a Multi-Agent SEO Workflow

Every high-performing multi-agent SEO workflow includes these 8 roles. Some teams combine 2 roles into 1 agent. No team should combine more than that.

Role 1: Research Agent

Input: Target keyword or topic Output: SERP analysis, competitor content audit, search intent classification, People Also Ask questions

The research agent does what a human SEO strategist does before writing. It analyzes the top 10 ranking pages, extracts their H2 structures, counts word lengths, identifies content gaps, and maps search intent.

Key tasks:

- Pull top 10 SERP results for the target keyword

- Analyze competitor headings and content depth

- Classify search intent (informational, commercial, transactional)

- Extract People Also Ask questions

- Identify content gaps that competitors miss

Role 2: Keyword and Link Agent

Input: Research agent output + site content inventory Output: Primary keyword, secondary keywords, internal link targets, external source URLs

This agent handles the data-heavy SEO work. It maps keywords to search volume and difficulty, identifies long-tail keyword opportunities, and builds the internal link plan by matching the topic to existing site pages.

Key tasks:

- Select primary and secondary keywords

- Map internal link targets from existing content

- Identify 4 to 6 external sources for citations

- Check for keyword cannibalization against existing pages

Role 3: Outline Agent

Input: Research output + keyword/link plan Output: Detailed content outline with H2/H3 structure, word count targets per section, link placement, image locations

The outline agent translates research into a writing blueprint. It follows the content brief format and ensures every section targets a specific keyword cluster.

Key tasks:

- Build H2/H3 heading hierarchy matching SERP patterns

- Assign word count targets per section

- Place internal and external links in specific sections

- Plan image and table locations

- Include FAQ questions sourced from research

Role 4: Writing Agent

Input: Detailed outline + brand voice guidelines Output: Full article draft following the outline structure

The writing agent produces the first draft. It follows the outline exactly. No deviation from the heading structure. No skipping planned sections. It writes in the specified brand voice with the required tone and formatting rules.

This is where most teams make the critical mistake. They give the writing agent too much freedom. A writing agent with a detailed outline produces dramatically better content than one given only a keyword.

Role 5: SEO Optimization Agent

Input: Article draft + target keywords Output: Optimized article with meta tags, header hierarchy, keyword density, alt text

The optimization agent handles everything the writing agent should not think about. It verifies keyword placement in the title, first 100 words, H2 headings, and meta description. It checks header hierarchy. It writes alt text for images. It adds schema markup.

Role 6: Quality Assurance Agent

Input: Optimized article Output: Quality report with pass/fail scores + flagged issues

The QA agent runs automated checks that catch what humans miss:

- Factual claims verified against source URLs

- All internal links point to existing pages

- No duplicate content across the site

- Readability score within target range

- No banned words or AI writing patterns

- Word count meets minimum requirements

- No broken formatting or orphan headings

Role 7: GEO Agent

Input: Optimized article Output: Article enhanced for AI search visibility

The GEO agent ensures content performs in AI search engines, not just Google. It optimizes for generative engine optimization by structuring content so ChatGPT, Perplexity, and Gemini can cite it as a source.

Key tasks:

- Add clear, citable definitions and statistics

- Structure FAQ answers as standalone passages

- Include entity markup that AI models recognize

- Verify content appears in the format AI search engines prefer

Role 8: Publishing Agent

Input: Approved article + images Output: Published page on CMS + social media distribution

The publishing agent handles the last mile. It uploads content to the CMS, verifies formatting renders correctly, and triggers social media distribution of the published post.

Your SEO team. $99 per month. Stacc runs this entire multi-agent workflow for your business. 30 articles per month, published automatically. Start for $1 →

How to Design Your Workflow Architecture

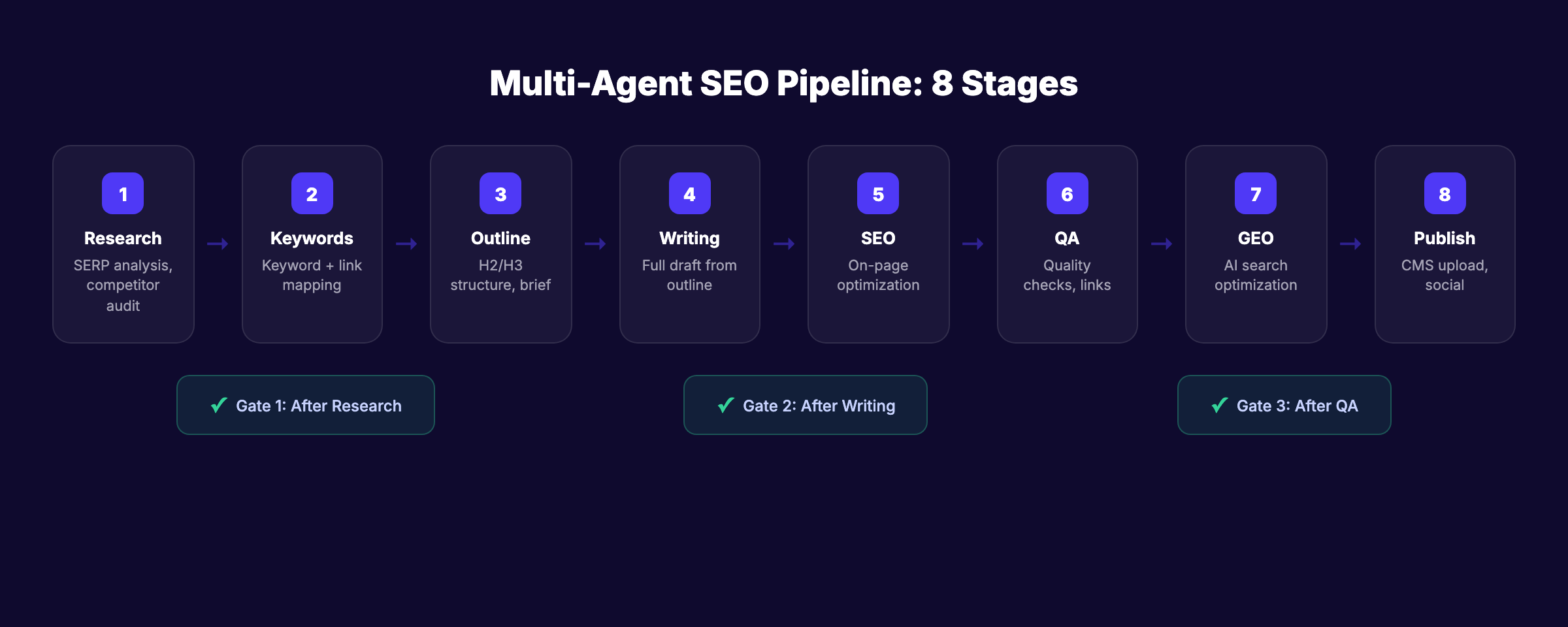

Designing a multi-agent SEO workflow requires 3 decisions: agent sequencing, handoff protocols, and quality gates.

Agent Sequencing

Agents run in a defined order. Each agent’s output becomes the next agent’s input. The sequence matters.

Standard content pipeline:

Research → Keywords/Links → Outline → Writing → SEO Optimization → QA → GEO → PublishingParallel opportunities:

While most stages run sequentially, some run in parallel:

- The research agent and keyword agent can work simultaneously

- The SEO optimization agent and GEO agent can process the same draft in parallel

- The publishing agent can prepare CMS templates while the QA agent reviews content

Parallel processing cuts total pipeline time by 30% to 40%.

Handoff Protocols

Every handoff between agents needs a defined format. Vague handoffs produce vague output.

| Handoff | Format Required |

|---|---|

| Research → Outline | JSON with SERP data, intent classification, PAA questions |

| Outline → Writing | Markdown outline with H2/H3 structure, word counts, link placements |

| Writing → Optimization | Full markdown draft with inline comments for SEO elements |

| Optimization → QA | Optimized markdown + checklist of SEO elements added |

| QA → Publishing | Approved markdown + quality score + flagged issues (if any) |

The more structured the handoff, the better the next agent performs. Never pass unstructured text between agents.

Quality Gates

Place quality gates between critical stages. A quality gate is a pass/fail checkpoint that prevents bad content from moving forward.

Gate 1: After Research — Does the keyword have search volume? Is the intent correctly classified? Are enough competitors analyzed?

Gate 2: After Writing — Does the draft follow the outline? Does it meet the word count minimum? Is the brand voice correct?

Gate 3: After QA — Do all links work? Is the content factually accurate? Does it pass AI detection checks?

Content that fails a gate goes back to the previous agent with specific feedback. It does not move forward.

Building Your First Multi-Agent SEO Pipeline

Start small. One workflow. One content type. Prove the system works before scaling.

Step 1: Define Your Content Type

Pick one content type to automate first. Blog posts are the best starting point. They are the highest-volume, most repeatable content type in SEO.

Do not start with landing pages, product pages, or technical documentation. Those require more human judgment and less repetition.

Step 2: Map the Manual Process

Before building any automation, document how your team creates content today. Write down every step:

- How do you select topics? (Topical map or ad hoc?)

- How do you research keywords? (Which tools? What data do you pull?)

- Who writes the outline? What format?

- Who writes the draft? What guidelines do they follow?

- Who optimizes for SEO? What checklist do they use?

- Who reviews quality? What do they check?

- Who publishes? Where?

Each step becomes an agent in your workflow.

Step 3: Configure Agent Instructions

Each agent needs explicit instructions. Not vague goals. Specific, measurable requirements.

Bad instruction: “Write a good blog post about this topic.”

Good instruction: “Write a 3,000-word blog post following this outline exactly. Use active voice. Maximum 20 words per sentence. Maximum 3 sentences per paragraph. Include these 12 internal links at the specified locations. Do not use contractions. Do not use the following banned words.”

The difference between a mediocre multi-agent workflow and a high-performing one is instruction specificity.

Step 4: Test With 5 Articles

Run your pipeline on 5 articles before scaling. Review every output at every stage. Compare the multi-agent output to your best manually-written content.

Look for:

- Research depth (does the research agent find what a human SEO strategist would find?)

- Outline quality (does the structure match top-ranking competitors?)

- Writing voice (does it sound like your brand?)

- SEO coverage (are all on-page elements correct?)

- Error rate (how many issues does QA catch?)

Fix every problem before scaling to batch production.

Step 5: Scale to Production Volume

Once the pipeline produces consistent quality across 5 test articles, increase volume gradually:

- Week 1-2: 5 articles per week

- Week 3-4: 10 articles per week

- Month 2: 15 to 20 articles per week

- Month 3: Target volume (30+ articles per month)

Monitor quality scores at every step. If quality drops at a specific volume level, investigate which agent is underperforming and adjust its instructions.

3,500+ blogs published. 92% average SEO score. See what a multi-agent SEO workflow produces at scale. Start for $1 →

Orchestration Platforms and Tools

You need a platform that connects your agents, manages handoffs, and enforces quality gates. Here are the categories that matter.

Workflow Orchestration Platforms

These platforms connect multiple AI agents in a defined sequence with conditional logic:

| Platform | Best For | Complexity |

|---|---|---|

| n8n | Self-hosted teams that want full control | High |

| Make (Integromat) | Visual workflow building | Medium |

| Zapier | Simple 2-3 step automations | Low |

| Custom scripts (Python/Node) | Maximum flexibility | Very high |

| Done-for-you platforms (Stacc) | Teams that want zero setup | None |

AI Model Selection Per Agent

Different agents perform best with different AI models. This is a detail most guides miss.

| Agent Role | Recommended Model Type | Why |

|---|---|---|

| Research | Fast, high-context model | Processes large SERP datasets quickly |

| Outline | Structured reasoning model | Produces organized, logical frameworks |

| Writing | Creative, voice-flexible model | Generates natural, brand-aligned prose |

| SEO Optimization | Detail-oriented model | Catches granular on-page elements |

| QA | Rule-following model | Executes checklists without deviation |

| GEO | Current knowledge model | Understands how AI search engines work |

The mistake most teams make: using the same model for every agent. A model that writes well may not audit well. Match the model to the task.

Integration Requirements

Your orchestration platform must connect to:

- CMS — WordPress, Webflow, Ghost, or custom publishing

- SEO tools — Google Search Console, rank tracking, site audit tools

- Data sources — Keyword databases, SERP APIs, competitor analysis tools

- Quality systems — Plagiarism checkers, readability analyzers, link validators

- Publishing channels — Blog, social media, email newsletter

Every broken integration is a broken workflow. Verify all connections before going live.

7 Common Mistakes and How to Fix Them

These mistakes cause most multi-agent SEO workflows to underperform or fail entirely.

Mistake 1: No Outline Stage

Teams skip the outline and send the writing agent directly from research to draft. The result is structurally weak content that misses key sections, ignores competitor patterns, and lacks planned link placement.

Fix: Always include an outline agent between research and writing. The outline is the blueprint. Without it, the writing agent builds without a plan.

Mistake 2: Identical Instructions for All Agents

Giving every agent the same system prompt produces agents that all try to do everything. Research agents start writing. Writing agents start optimizing. Quality drops everywhere.

Fix: Give each agent narrow, role-specific instructions. The research agent should never write prose. The writing agent should never select keywords.

Mistake 3: No Quality Gates

Without quality gates, bad content from early stages cascades through the pipeline. A weak outline produces a weak draft. A weak draft produces a weak optimized article. The publishing agent pushes broken content live.

Fix: Place quality gates after research, writing, and QA. Content that fails any gate returns to the previous agent with specific feedback.

Mistake 4: Unstructured Handoffs

Passing unstructured text between agents forces each agent to interpret what the previous agent meant. Interpretation introduces errors.

Fix: Define strict handoff formats. Use JSON, structured markdown, or templated documents. Every field has a label. Every output has a schema.

Mistake 5: No Human Review Loop

Fully autonomous content pipelines sound appealing. They produce errors at scale. A misconfigured agent can publish 50 articles with broken formatting, wrong keywords, or factual inaccuracies in a single day.

Fix: Include human review at a minimum of 1 stage. Most teams review after the QA agent and before publishing. Review 100% of output during the first 30 days. Reduce to 20% to 30% spot-checking after that.

Mistake 6: Testing at Full Volume

Teams build the pipeline and immediately run 30 articles through it. Half the articles have issues. Fixing 15 broken articles takes longer than fixing the pipeline would have.

Fix: Test with 5 articles. Fix every issue. Then scale gradually over 4 to 6 weeks.

Mistake 7: Ignoring GEO

Most multi-agent workflows optimize only for Google. In 2026, AI search engines drive meaningful traffic. Content that ranks on Google but never gets cited by ChatGPT or Perplexity leaves traffic on the table.

Fix: Add a GEO agent to your pipeline. It runs after SEO optimization and before QA. It ensures content is structured for AI search citation.

Measuring ROI From Multi-Agent SEO

ROI measurement proves the system works and justifies continued investment.

The 4 Metrics That Matter

Cost per article. Total monthly platform cost divided by articles published. Compare to your previous cost (freelance writers, agency fees, or employee hours).

Production velocity. Articles published per week. A well-built multi-agent workflow should produce 7 to 20 articles per week with 1 to 2 hours of human oversight per day.

Quality consistency. Track the QA pass rate. What percentage of articles pass all quality gates on the first attempt? Target 80% or higher after the first month.

Organic traffic growth. The ultimate metric. Track total organic sessions, keywords ranked, and pages in the top 10. Multi-agent workflows should show measurable traffic growth within 60 to 90 days.

ROI Benchmarks

Based on industry data from First Page Sage’s 2026 agentic AI report:

| Metric | Manual Process | Multi-Agent Workflow |

|---|---|---|

| Articles per month | 4-8 | 30-80 |

| Cost per article | $150-$500 | $3-$15 |

| Hours per article (human time) | 3-6 hours | 10-20 minutes |

| Time to first ranking movement | 90-180 days | 60-90 days |

| Keyword coverage growth | 5-10 new keywords/month | 50-200 new keywords/month |

The compounding effect is where multi-agent SEO delivers its biggest advantage. 30 articles per month for 6 months creates 180 indexed pages. That is 180 chances to rank. 180 internal linking opportunities. 180 signals to Google that your site covers a topic deeply enough to earn topical authority.

Where Multi-Agent SEO Is Heading

Multi-agent SEO workflows are not a temporary trend. They represent the permanent operating model for content-driven marketing teams.

3 shifts to watch in the next 12 months:

Agent-to-agent communication. Today, most workflows pass data through a central orchestration platform. The next generation of agents will communicate directly. The research agent tells the writing agent about a trending subtopic in real time. The QA agent sends feedback directly to the optimization agent without human routing.

Self-improving pipelines. Current workflows require manual tuning. Future systems will analyze their own output quality, identify which agent underperforms, and adjust instructions automatically. If article quality drops on technical topics, the system will modify the writing agent’s instructions for that content type.

Unified SEO and GEO optimization. The distinction between traditional SEO and GEO will dissolve. A single pipeline will optimize content for Google, ChatGPT, Perplexity, and Gemini simultaneously. The market is already moving in this direction. Companies that build multi-agent workflows today will have 12 to 18 months of compounding advantage when unified optimization becomes standard.

The global AI agents market is projected to reach $50.31 billion by 2030. The question is not whether multi-agent SEO will become the default. It is whether your team adopts it before or after your competitors.

FAQ

What is a multi-agent SEO workflow?

A multi-agent SEO workflow is a system where multiple specialized AI agents handle different stages of the content pipeline. One agent researches keywords. Another builds outlines. A third writes the draft. Each agent focuses on a single task, producing better output than a single all-purpose agent.

How many agents do I need for an SEO workflow?

Start with 4 core agents: research, outline, writing, and QA. Add optimization, GEO, and publishing agents as your workflow matures. Most production-ready workflows use 6 to 8 agents total.

How much does a multi-agent SEO workflow cost?

Costs range from $0 (self-built with free AI APIs) to $99 per month for done-for-you platforms like Stacc that run the entire pipeline. Mid-range setups using orchestration platforms like n8n or Make typically cost $50 to $200 per month in platform fees plus AI API costs.

Can multi-agent workflows replace human writers?

They replace the writing labor, not the editorial judgment. Humans still set strategy, approve content, handle brand-sensitive topics, and provide creative direction. The most effective workflows keep humans in the quality review stage.

How long does it take to build a multi-agent SEO workflow?

A basic 4-agent pipeline takes 1 to 2 weeks to configure and test. A production-ready 8-agent pipeline takes 3 to 4 weeks including testing with 5 pilot articles. Using a pre-built platform reduces this to same-day setup.

What is the difference between multi-agent SEO and automated SEO workflows?

Automated SEO workflows use rule-based automation (if X, then Y). Multi-agent workflows use AI agents that reason, plan, and make decisions within their defined role. Multi-agent systems handle complex, variable tasks. Rule-based automation handles simple, predictable tasks.

Multi-agent SEO workflows are the difference between publishing 4 articles per month and publishing 40. The technology exists today. The frameworks are proven. The ROI data supports it.

Start with 4 agents. Test on 5 articles. Scale what works. Let the output compound.

Rank everywhere. Do nothing. Stacc runs multi-agent SEO workflows for your business. 30 articles per month, published on autopilot. Start for $1 →

Written and published by Stacc. We publish 3,500+ articles per month across 70+ industries. All data verified against public sources as of March 2026.