What Is AI Content Governance? Complete Guide

AI content governance sets the rules for how teams use AI to create content. Learn frameworks, risk tiers, quality gates, and policies. April 2026.

Siddharth Gangal • 2026-04-02 • Content Strategy

77% of organizations are building AI governance programs right now. The other 23% are publishing AI content with no policies, no quality controls, and no accountability. Those are the organizations that end up in the news for the wrong reasons.

AI content governance is the system of policies, processes, roles, and controls that determines how your team uses AI to create, review, and publish content. It answers 4 questions: Who can use AI tools? What content types can AI produce? How does AI content get reviewed? What happens when something goes wrong?

Without governance, AI content production creates real business risk. Google penalized over 1,400 websites in its March 2024 Core Update for “scaled content abuse.” The FTC now expects clear labeling of AI-generated content. The EU AI Act imposes compliance requirements on businesses using AI systems. And one hallucinated claim in a published article can destroy credibility that took years to build.

We have published 3,500+ blogs across 70+ industries with a 92% average SEO score. AI content governance is built into every step of our production process. This guide explains how to build your own.

Here is what you will learn:

- What AI content governance covers and why every team needs it

- The 5 pillars of an effective governance framework

- How to create risk tiers so review intensity matches content sensitivity

- Quality gates that catch errors before publication

- The roles and responsibilities your team needs

- How governance protects your SEO performance

Why AI Content Governance Matters Now

The risk of publishing AI content without governance grows every quarter. Three forces are converging to make governance mandatory.

Google’s Quality Standards Are Tightening

Google does not penalize AI content for being AI content. Google penalizes low-quality content regardless of who created it. But AI makes it easier to produce low-quality content at scale. That is exactly what the March 2024 update targeted.

According to Search Engine Land’s coverage of AI governance in SEO, thin and duplicate AI content carries risks of deindexation and ranking demotions. The E-E-A-T framework applies equally to AI and human content. Without governance, teams risk producing content that fails E-E-A-T checks and damages the authority of the entire domain.

AI hallucinations caused an estimated $67.4 billion in global losses in 2024. 47% of enterprise AI users report making major business decisions based on incorrect AI information. These are not edge cases. They are the default outcome when AI content ships without review.

Regulatory Requirements Are Real

The EU AI Act requires transparency about AI use. The FTC expects businesses to label AI-generated content. California and Texas have passed state-level AI disclosure laws. These are not suggestions. They are legal requirements with financial penalties.

Content governance ensures your team meets disclosure requirements, maintains audit trails, and can demonstrate human oversight of AI-produced content. The AI governance market is projected to grow from $890 million in 2024 to $5.8 billion by 2029. Organizations are investing because the cost of governance is far lower than the cost of incidents.

Brand Reputation Is at Stake

AI hallucinations are not rare edge cases. They are a predictable output of language models. A single factual error in a published article can mislead customers, damage trust, and create liability. A pattern of errors signals to Google and AI search engines that your content is unreliable.

The cost of governance is minimal compared to the cost of a reputational incident. A few hours of policy creation and workflow setup prevents thousands of dollars in damage control.

The 5 Pillars of AI Content Governance

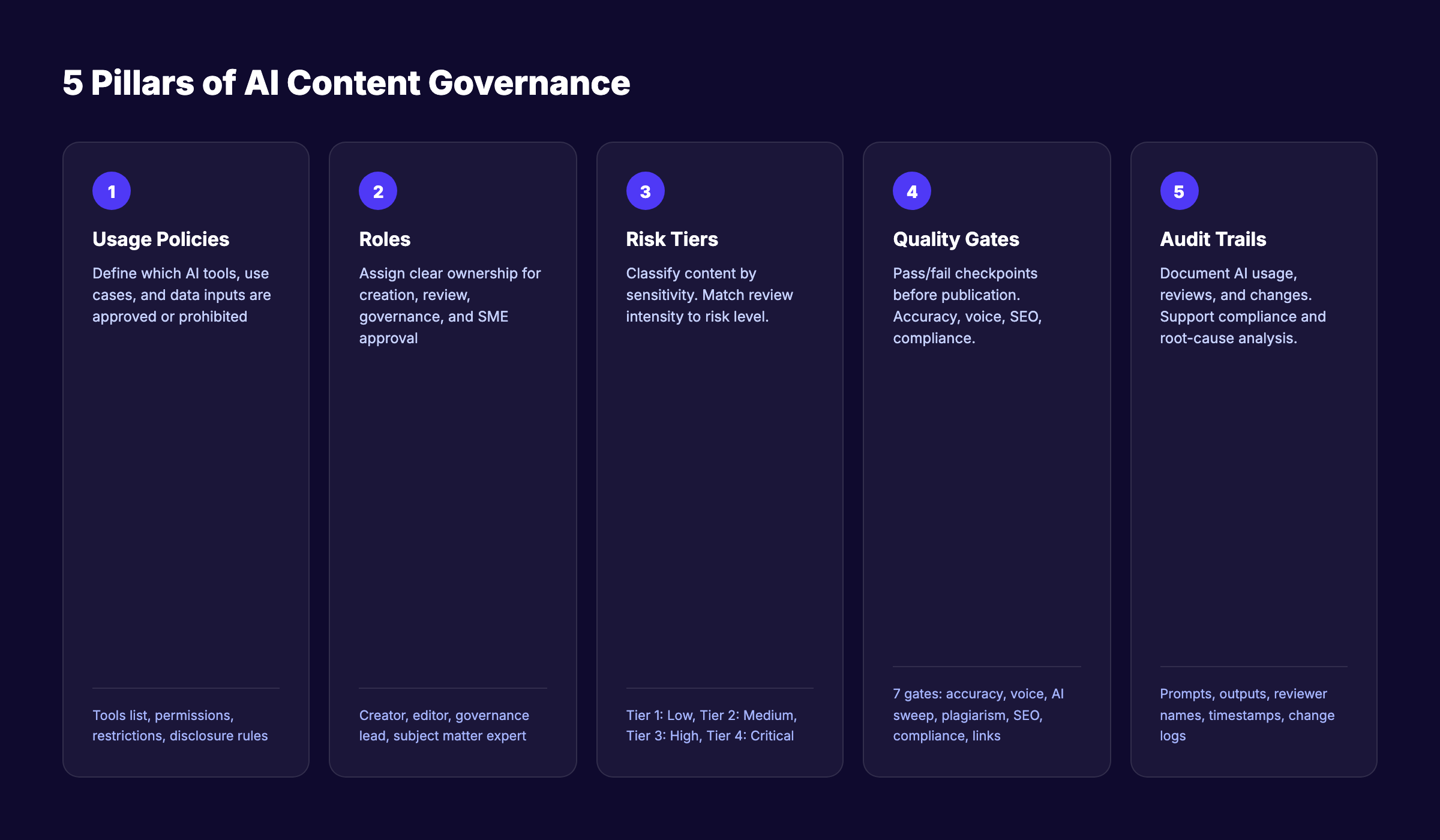

An effective governance framework covers 5 areas. Each pillar addresses a different dimension of risk and control.

Pillar 1: Usage Policies

Define exactly how your team can and cannot use AI tools. Be specific. Vague policies like “use AI responsibly” fail because they leave interpretation to individuals.

What to include:

| Policy Area | Example Rule |
|---|---|
| Approved tools | “Only ChatGPT, Claude, and Jasper are approved for content creation” |
| Approved use cases | “AI can generate first drafts and outlines. AI cannot publish without review.” |
| Prohibited use cases | “AI cannot generate legal disclaimers, medical advice, or financial guidance” |
| Data input restrictions | “Never input customer PII, proprietary data, or confidential information into AI tools” |
| Disclosure requirements | “All AI-assisted content must include an editorial disclosure” |
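Specific rules like these can also be written down in a form a script can check. The sketch below is one possible way to do that, not a required implementation. The tool names and prohibited use cases come from the example rules in the table. The `AIRequest` class, its field names, and the `policy_violations` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Values taken from the example rules in the table above.
APPROVED_TOOLS = {"ChatGPT", "Claude", "Jasper"}
PROHIBITED_USE_CASES = {"legal disclaimer", "medical advice", "financial guidance"}


@dataclass
class AIRequest:
    """Hypothetical description of one planned use of an AI tool."""
    tool: str
    use_case: str
    includes_confidential_data: bool = False


def policy_violations(request: AIRequest) -> list[str]:
    """Return a list of policy violations. An empty list means the use is allowed."""
    violations = []
    if request.tool not in APPROVED_TOOLS:
        violations.append(f"Tool not approved: {request.tool}")
    if request.use_case in PROHIBITED_USE_CASES:
        violations.append(f"Prohibited use case: {request.use_case}")
    if request.includes_confidential_data:
        violations.append("Input contains PII, proprietary, or confidential data")
    return violations
```

A request such as `AIRequest("Claude", "blog draft")` returns no violations. A request that uses an unlisted tool for medical advice with confidential data returns three.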

Pillar 2: Roles and Responsibilities

Governance fails without clear ownership. Define who does what.

Essential roles:

- Content creator. Uses AI tools within policy boundaries. Responsible for initial draft quality.

- Editor/reviewer. Reviews all AI-assisted content for accuracy, brand voice, and compliance before publication. This is the human-in-the-loop gate.

- Governance lead. Maintains policies, conducts audits, updates guidelines as AI tools evolve. Reports to leadership on compliance.

- Subject matter expert (SME). Reviews content in specialized areas (legal, medical, financial, technical) where factual accuracy carries higher stakes.

For small teams, one person may hold multiple roles. The key is that no AI content publishes without at least one human review.

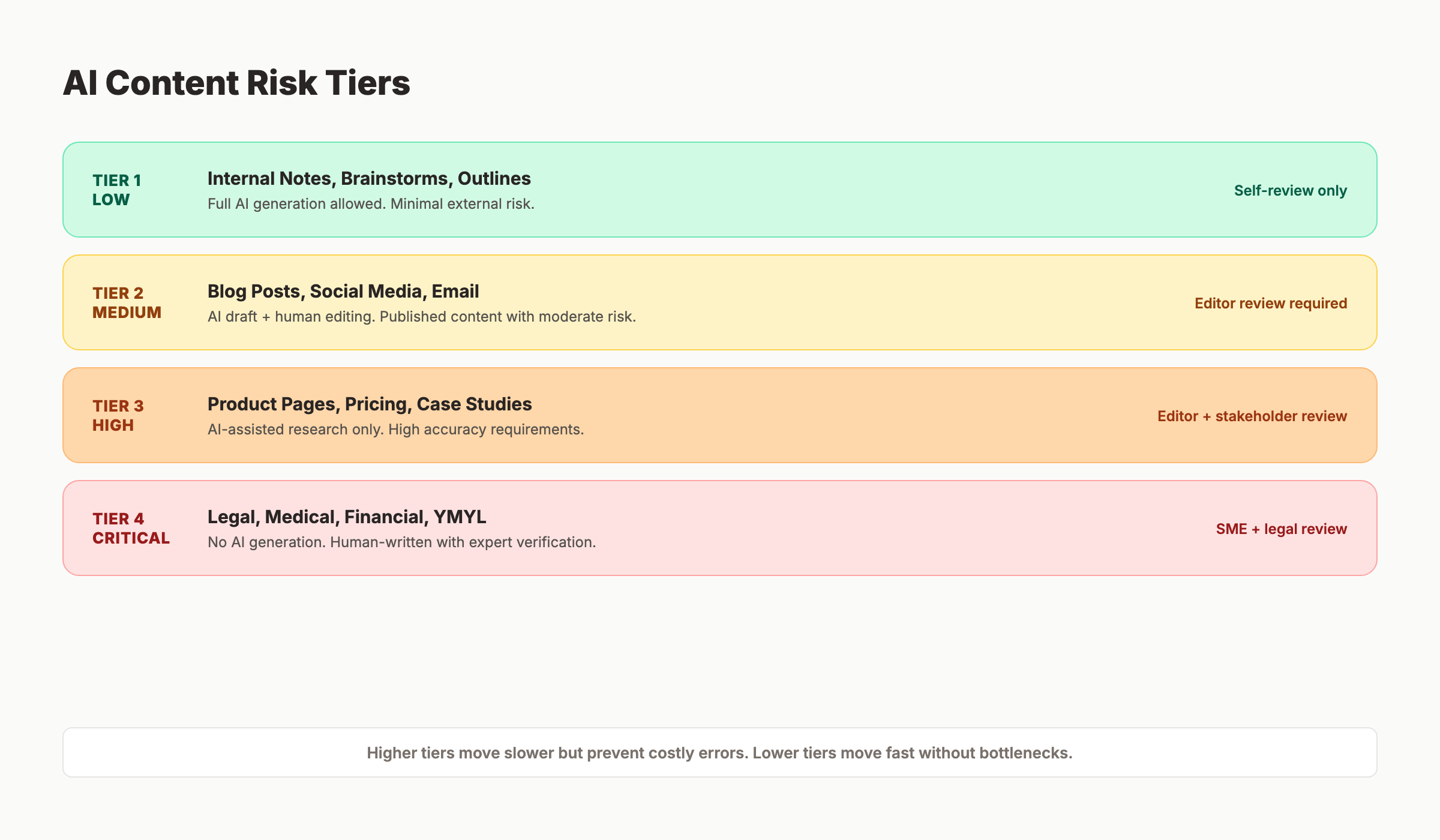

Pillar 3: Content Risk Tiers

Not all content carries equal risk. A brainstorming outline does not need the same review as a published medical article. Smart organizations define risk tiers and apply review intensity proportionally.

| Tier | Content Type | AI Use Level | Review Required |
|---|---|---|---|
| Tier 1 (Low) | Internal notes, brainstorms, draft outlines | Full AI generation | Self-review only |
| Tier 2 (Medium) | Blog posts, social media, email newsletters | AI draft + human editing | Editor review |
| Tier 3 (High) | Product pages, pricing pages, case studies | AI-assisted research only | Editor + stakeholder review |
| Tier 4 (Critical) | Legal content, medical claims, financial advice, YMYL pages | No AI generation | SME + legal review |

Tier 1 content moves fast. Tier 4 content moves slowly. The tiering system keeps unnecessary reviews from bottlenecking low-risk content. It also keeps high-risk content from being rushed through without proper oversight.

For blog content specifically, most SEO blog posts fall into Tier 2. Content targeting on-page SEO topics or keyword research guides also fits Tier 2. AI drafts the content. A human editor reviews for accuracy, brand voice, and quality. This is the model we use at Stacc for every article we publish.
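The tier table can be turned into a small lookup so that every content type maps to one tier and one set of required reviews. This is a minimal sketch under stated assumptions. The tier rules mirror the table. The content-type labels such as `blog_post`, the class and function names, and the choice to treat unknown content types as Tier 4 are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class TierRule:
    ai_use: str
    reviews: tuple[str, ...]


# AI use level and required reviews, copied from the tier table.
TIER_RULES = {
    RiskTier.LOW: TierRule("Full AI generation", ("self",)),
    RiskTier.MEDIUM: TierRule("AI draft + human editing", ("editor",)),
    RiskTier.HIGH: TierRule("AI-assisted research only", ("editor", "stakeholder")),
    RiskTier.CRITICAL: TierRule("No AI generation", ("sme", "legal")),
}

# Hypothetical content-type labels. Adapt them to your own taxonomy.
CONTENT_TYPE_TIERS = {
    "internal_note": RiskTier.LOW,
    "brainstorm": RiskTier.LOW,
    "draft_outline": RiskTier.LOW,
    "blog_post": RiskTier.MEDIUM,
    "social_media": RiskTier.MEDIUM,
    "email_newsletter": RiskTier.MEDIUM,
    "product_page": RiskTier.HIGH,
    "pricing_page": RiskTier.HIGH,
    "case_study": RiskTier.HIGH,
    "legal_content": RiskTier.CRITICAL,
    "medical_claim": RiskTier.CRITICAL,
    "financial_advice": RiskTier.CRITICAL,
}


def classify(content_type: str) -> RiskTier:
    """Return the tier for a content type.

    Assumption: anything unclassified is treated as CRITICAL until someone tiers it.
    """
    return CONTENT_TYPE_TIERS.get(content_type, RiskTier.CRITICAL)


def required_reviews(content_type: str) -> tuple[str, ...]:
    """Return the reviews required before this content type can publish."""
    return TIER_RULES[classify(content_type)].reviews
```

Defaulting unknown types to the strictest tier is a conservative design choice. It forces a deliberate classification decision instead of letting new content types slip through with light review.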

Pillar 4: Quality Gates

Quality gates are specific checkpoints that content must pass before moving to the next stage. They turn abstract quality standards into concrete pass/fail criteria.

Pre-publication quality gates:

- Factual accuracy check. Every statistic, claim, and named source verified against the original source.

- Brand voice check. Content matches your tone guidelines. No banned words or phrases.

- Contraction and AI pattern sweep. Remove contractions, filler transitions, and identifiable AI writing patterns.

- Plagiarism check. Content does not duplicate existing published material.

- SEO check. Keyword placement, header structure, meta description, and internal links verified.

- Compliance check. Disclosure requirements met. No prohibited claims. Risk tier review completed.

- Link verification. Every internal and external link tested and functional.

Each gate has a clear pass/fail outcome. Content that fails any gate goes back for revision. Content that passes all gates moves to publication.
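The pass/fail logic above can be sketched as a set of named checks that all have to return true. This is an illustrative sketch, not a complete gate system. It models four of the gates. Checks that need human judgment are represented as flags a reviewer sets. The `Draft` fields, the gate names, and the banned-phrase list are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Draft:
    """Hypothetical draft record. Reviewers set the boolean flags by hand."""
    body: str
    facts_verified: bool = False
    disclosure_present: bool = False
    links_checked: bool = False


# Placeholder list. Replace it with the phrases your tone guidelines ban.
BANNED_PHRASES = ("in today's fast-paced world",)

Gate = Callable[[Draft], bool]

# Each gate returns True on pass and False on fail.
GATES: dict[str, Gate] = {
    "factual_accuracy": lambda d: d.facts_verified,
    "brand_voice": lambda d: not any(p in d.body.lower() for p in BANNED_PHRASES),
    "compliance": lambda d: d.disclosure_present,
    "link_verification": lambda d: d.links_checked,
}


def failed_gates(draft: Draft) -> list[str]:
    """Return the names of every gate the draft fails, in gate order."""
    return [name for name, gate in GATES.items() if not gate(draft)]


def ready_to_publish(draft: Draft) -> bool:
    """A draft is publishable only when no gate fails."""
    return not failed_gates(draft)
```

Returning the list of failed gates, instead of a single yes or no, tells the writer exactly what to revise before resubmitting.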

Pillar 5: Audit Trails and Monitoring

Governance is not a one-time setup. It requires ongoing monitoring and documentation.

What to track:

- Which AI tools produced each piece of content

- Which prompts generated the initial draft

- Who reviewed the content and when

- What changes the reviewer made

- When the content was published and last updated

Audit trails serve 2 purposes. They support compliance audits if regulators ask how your content was produced. And they enable root-cause analysis when quality issues surface. If a factual error appears in a published article, the audit trail shows exactly where the error originated and which review step missed it.
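One simple way to keep this trail is an append-only log with one JSON record per article. The sketch below assumes that format. The field names map to the tracking list above. The `AuditRecord` class, the function names, and the file path passed to `append_to_log` are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AuditRecord:
    """One log entry per piece of content. Timestamps are ISO 8601 strings."""
    content_id: str
    ai_tool: str            # which AI tool produced the content
    prompt: str             # which prompt generated the initial draft
    reviewer: str           # who reviewed the content
    reviewed_at: str        # when the review happened
    reviewer_changes: str   # what the reviewer changed
    published_at: str
    last_updated_at: str


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def append_to_log(path: str, record: AuditRecord) -> None:
    """Append the record to a JSON Lines file. Existing entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(to_log_line(record) + "\n")
```

Because each line is independent JSON, the log can be searched by `content_id` when an error surfaces, which supports the root-cause analysis described above.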

Building Your AI Content Governance Framework

Here is a step-by-step process to implement governance. Start small. Expand as your team’s AI usage grows.

Step 1: Audit Current AI Usage (Week 1)

Before writing policies, understand how your team already uses AI. Survey every team member who creates content. Document:

- Which AI tools they use (approved or not)

- What types of content they produce with AI

- Whether they have any review process in place

- What concerns they have about AI content quality

Most teams discover that AI usage is already happening. Often without any coordination. The audit identifies gaps and establishes a baseline.

Step 2: Define Policies and Risk Tiers (Week 2)

Using the 5 pillars above, create your governance document. Keep it under 5 pages. Longer documents do not get read.

Include:

- Approved AI tools list

- Use case permissions and restrictions

- Risk tier definitions with examples

- Roles and responsibilities matrix

- Quality gate checklist

- Escalation process for violations

Step 3: Set Up Quality Gate Workflows (Week 3)

Integrate quality gates into your existing content calendar and publishing workflow. If you use a project management tool, add review stages as mandatory steps before publication.

For each content piece, the workflow should follow: AI Draft > Self-Review > Editor Review > Quality Gates > Publication. Tier 3 and 4 content adds stakeholder and SME reviews before the quality gates.
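The stage order can be expressed as a short function so that a project management integration or script always produces the same sequence. This sketch follows the workflow sentence above literally, including the extra reviews for Tier 3 and 4. The stage identifiers and function names are assumptions for illustration.

```python
from typing import Optional

# Base order from the workflow described above.
BASE_STAGES = ["ai_draft", "self_review", "editor_review", "quality_gates", "publication"]

# Extra reviews for Tier 3 and Tier 4 content, placed before the quality gates.
HIGH_RISK_REVIEWS = ["stakeholder_review", "sme_review"]


def workflow_for(tier: int) -> list[str]:
    """Return the ordered stages for a piece of content in the given risk tier."""
    stages = list(BASE_STAGES)
    if tier >= 3:
        insert_at = stages.index("quality_gates")
        stages[insert_at:insert_at] = HIGH_RISK_REVIEWS
    return stages


def next_stage(tier: int, current: str) -> Optional[str]:
    """Return the stage after `current`, or None once the content is published."""
    stages = workflow_for(tier)
    position = stages.index(current)
    if position + 1 < len(stages):
        return stages[position + 1]
    return None
```

For a Tier 2 blog post, `next_stage(2, "editor_review")` returns `"quality_gates"`. For a Tier 3 product page, the same call returns `"stakeholder_review"`.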

Step 4: Train Your Team (Week 4)

Policies only work if everyone understands them. Run a 30-minute training session covering:

- Why governance exists (risks, not rules for the sake of rules)

- How to classify content into risk tiers

- The quality gate checklist

- How to document AI usage for audit trails

- Where to go with questions

Step 5: Monitor and Iterate (Monthly)

Review published content monthly for governance compliance. Check:

- Were all quality gates completed before publication?

- Did any factual errors reach publication? If so, which review step failed?

- Are team members following the approved tools list?

- Has any new AI tool entered the market that should be evaluated?

- Do risk tiers need adjustment based on new content types?

Update your governance document quarterly. AI tools evolve fast. Your policies should keep pace.

How AI Content Governance Protects SEO

Governance is not just a compliance exercise. It directly protects and improves your SEO performance.

Preventing Scaled Content Abuse Penalties

Google’s scaled content abuse policy targets websites that publish large volumes of low-quality content to manipulate search rankings. Without governance, AI makes it easy to fall into this trap. 30 articles per month with no review process can produce exactly the kind of thin, duplicative content Google penalizes.

Quality gates prevent this. Every article passes factual accuracy, brand voice, and SEO checks before publication. Volume stays high. Quality stays consistent. Google rewards the combination.

Read our guide on scaling blog content with AI for the production workflow that makes this possible.

Maintaining E-E-A-T at Scale

E-E-A-T requires demonstrable Experience, Expertise, Authoritativeness, and Trustworthiness. Pure AI content lacks the Experience component. It cannot share first-person insights, original data, or lived expertise.

Governance addresses this by requiring human editors to add experience signals: proprietary data points, specific examples from real projects, author bylines with credentials, and original perspectives that AI cannot generate.

The result: content that demonstrates E-E-A-T even though AI handled the initial draft.

Ensuring Consistent Quality Across High Volume

Most content quality problems are consistency problems. Article 1 is excellent. Article 15 is mediocre. Article 28 has a factual error. Without governance, quality varies with whoever reviewed (or did not review) each piece.

Quality gates standardize the review process. Every article goes through the same checklist. Quality becomes a system, not an individual effort.

For teams using managed services like Stacc, governance is built into the production pipeline. Every article we publish follows the same quality gates. That consistency is how we maintain a 92% average SEO score across 3,500+ published articles.

Protecting Against AI Content Risks

| Risk | Without Governance | With Governance |
|---|---|---|
| Factual errors (hallucinations) | Published and discovered by readers | Caught in factual accuracy gate |
| Brand voice inconsistency | Random tone shifts across articles | Caught in brand voice gate |
| Duplicate content | SEO penalties for thin/duplicate pages | Caught in plagiarism gate |
| Missing disclosures | Regulatory violations and fines | Caught in compliance gate |
| Broken internal links | Lost link equity and poor UX | Caught in link verification gate |

Common AI Content Governance Mistakes

Most teams that attempt governance make the same 5 mistakes. Avoiding them saves months of rework.

Mistake 1: Making Policies Too Restrictive

Teams burned by AI errors sometimes ban AI entirely. That is an overreaction. The goal is controlled use, not zero use. Banning AI means your competitors publish 30 articles per month while you publish 4. The fix: define clear boundaries, not blanket prohibitions.

Mistake 2: No Risk Tiering

Treating every piece of content the same creates bottlenecks. Blog post outlines do not need legal review. Product claims do. Without risk tiers, everything gets the same slow review process. Teams get frustrated and start bypassing governance entirely.

Mistake 3: Writing Policies Nobody Reads

A 20-page governance document gathers dust. Keep your core policy under 3 pages. Use a 1-page checklist for daily reference. Post the quality gates where your team works. If the policy is not accessible, it will not be followed.

Mistake 4: No Audit Trail

Without documentation, you cannot prove compliance during a regulatory audit. You cannot trace the source of an error. And you cannot improve your process because you have no data on where failures occur. Log prompts, outputs, reviews, and approvals.

Mistake 5: Set-and-Forget

AI tools change quarterly. New capabilities, new risks, new compliance requirements. Governance policies written in January 2026 are already outdated by April. Review and update your framework at least quarterly.

For teams using agentic SEO systems or AI SEO agents that operate with more autonomy, these governance principles become even more critical. The more autonomous the system, the stronger the guardrails need to be. Teams working with AI content writing tools should build governance before scaling output.

AI Content Governance for Different Team Sizes

Governance scales with your team. A 2-person startup does not need the same framework as a 50-person content operation.

Solo Operators and Small Teams (1 to 5 People)

Keep it simple. One page of policies. One person reviews AI content before publication. Focus on:

- A short approved tools list

- A quality gate checklist (print it, tape it to your monitor)

- A rule: nothing publishes without one human read-through

Mid-Size Teams (6 to 20 People)

Add structure. Create the full governance document. Assign editor and governance lead roles. Implement risk tiers. Use your project management tool to enforce review stages.

Enterprise Teams (20+ People)

Full governance framework with cross-functional oversight. Include legal, compliance, brand, and SEO stakeholders. Centralized AI council approves tools and monitors usage. Regular audits with documented findings. Content audits should include AI governance compliance checks. Enterprise teams publishing for AI citation readiness need governance across both traditional and AI search outputs.

FAQ

What is AI content governance?

AI content governance is the system of policies, processes, roles, and quality controls that determines how a team uses AI to create, review, and publish content. It covers which tools are approved, what content types AI can produce, how AI content gets reviewed, and what happens when errors occur.

Is AI content governance required by law?

In some jurisdictions, yes. The EU AI Act requires transparency about AI use. The FTC expects labeling of AI-generated content. California and Texas have state-level AI disclosure laws. Even where not legally required, governance protects against SEO penalties and reputational damage.

Does Google penalize AI content?

Google does not penalize content for being AI-generated. Google penalizes low-quality content regardless of production method. The March 2024 Core Update penalized over 1,400 sites for scaled content abuse. Governance with quality gates prevents the kind of low-quality AI output that triggers penalties.

How many people do I need for AI content governance?

One person can manage governance for a small team. The minimum requirement is that someone reviews every AI-generated piece before publication. For teams publishing 20+ articles per month, dedicate an editor role and a governance lead role.

What are AI content risk tiers?

Risk tiers classify content by how much damage an error could cause. Tier 1 (low risk) includes internal notes and brainstorms. Tier 2 (medium) includes blog posts and social media. Tier 3 (high) includes product and pricing pages. Tier 4 (critical) includes legal, medical, and financial content. Higher tiers require more intensive review.

Can Stacc help with AI content governance?

Yes. Every article Stacc publishes passes through built-in quality gates: factual accuracy checks, brand voice alignment, SEO verification, and link validation. Governance is part of the production pipeline. You get the volume of AI content (30 to 80 articles per month) with the quality controls of a managed editorial process.

Start Governing Before You Scale

AI content governance is not optional for teams that publish at scale. The risks are too real and the cost of governance is too low to justify skipping it.

Start with a one-page policy. Add a quality gate checklist. Assign one person to review before publishing. That baseline handles 80% of the risk. Expand from there as your AI content volume grows.

The teams that govern well publish faster, rank higher, and never worry about the next algorithm update. That is the real ROI of governance.

The businesses that build governance into their workflow today will scale their AI content production with confidence. The ones that skip it will eventually face an incident that makes governance mandatory. Choose the proactive path.

Written and published by Stacc. We have published 3,500+ articles across 70+ industries. All data verified against public sources as of March 2026.