AI Content Detection: The Complete Guide (2026)

Learn how AI content detection works, which tools are accurate, and what Google actually does about AI content. Covers bias, false positives, and SEO impact.

AI content detection has become one of the most debated topics in SEO and content marketing. Teachers use it to catch cheating. Clients use it to verify freelancer work. SEO teams run every article through detectors before publishing. The tools promise 99% accuracy. The reality is far messier.

OpenAI built its own AI text classifier in January 2023. It correctly identified AI-written text only 26% of the time. It misclassified human-written text 9% of the time. OpenAI shut it down 6 months later. If the company that built GPT could not build an accurate detector, that should give everyone pause.

This guide covers how AI content detection actually works, which tools perform best, where the technology fails, and what it means for SEO professionals publishing content in 2026.

We have published 3,500+ blogs across 70+ industries. We run detection checks on every piece of content. The workflows and data in this guide come from that experience.

Here is what you will learn:

- How AI detectors analyze text and the math behind their scoring

- Which tools are most accurate and which produce the most false positives

- Why AI detectors are biased against non-native English speakers

- What Google actually says about AI content and search rankings

- How the EU AI Act and watermarking will change detection by August 2026

- How to write AI-assisted content that avoids detection flags

Chapter 1: How AI Content Detection Works

AI content detectors analyze text for statistical patterns that differ between human and machine writing. They do not understand meaning. They measure predictability.

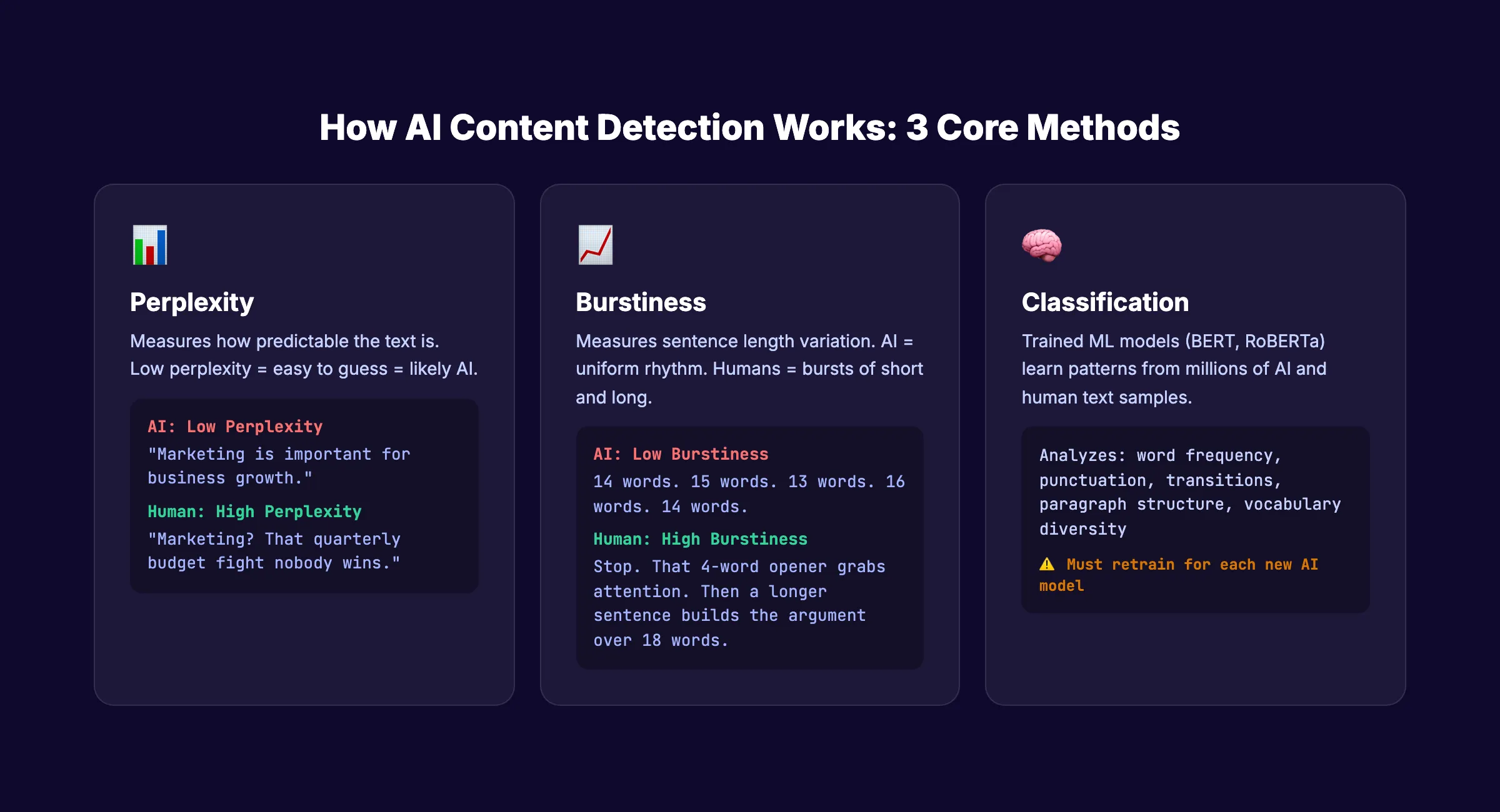

Perplexity: How Surprising Is the Text?

Perplexity measures how predictable a text is. Low perplexity means the next word is easy to guess. High perplexity means the text is surprising and varied.

AI-generated text tends toward low perplexity. Language models choose the most probable next token at each step. Human writing wanders. It uses unexpected word choices, tangents, and stylistic quirks that raise perplexity scores.

When a detector scans your text, it runs it through its own language model and asks: “How predictable was each word?” Highly predictable text scores as likely AI-generated.

Burstiness: How Varied Are the Sentences?

Burstiness measures variation in sentence length and complexity. Human writers naturally produce bursts of short and long sentences. One paragraph has 5-word fragments. The next has complex 18-word constructions.

AI tends to produce uniform sentence lengths. Every paragraph follows a similar rhythm. Every sentence lands between 12 and 18 words. That uniformity is a detection signal.

Classification Models: Pattern Recognition at Scale

Modern detectors go beyond perplexity and burstiness. They train classification models (typically based on BERT, RoBERTa, or ELECTRA architectures) on millions of examples of human and AI text.

These models learn hundreds of subtle patterns: word frequency distributions, punctuation habits, transition word usage, paragraph structure, and vocabulary diversity. They output a probability score. That score becomes the “AI percentage” you see in detection tools.

The training data matters enormously. A model trained on GPT-3.5 output may miss patterns from Claude or Gemini. As new language models launch, detectors must retrain. This creates a permanent lag between AI generation and AI detection.

What Detectors Cannot Do

Detectors cannot determine intent. They cannot tell if a human wrote text with AI assistance, if a human heavily edited AI output, or if a human naturally writes in a structured, predictable style.

They also struggle with mixed content. A 2,000-word article where 500 words are AI-generated and 1,500 are human-written will produce inconsistent scores depending on which sections the detector samples.

Understanding AI content writing at a technical level helps you interpret detector results rather than blindly trusting them.

Stop writing. Start ranking. Stacc publishes 30 SEO articles per month for $99. Every article optimized. Every article reviewed. Start for $1 →

Chapter 2: How Accurate Are AI Detectors?

The accuracy claims from detector vendors are misleading. Here is what the independent research actually shows.

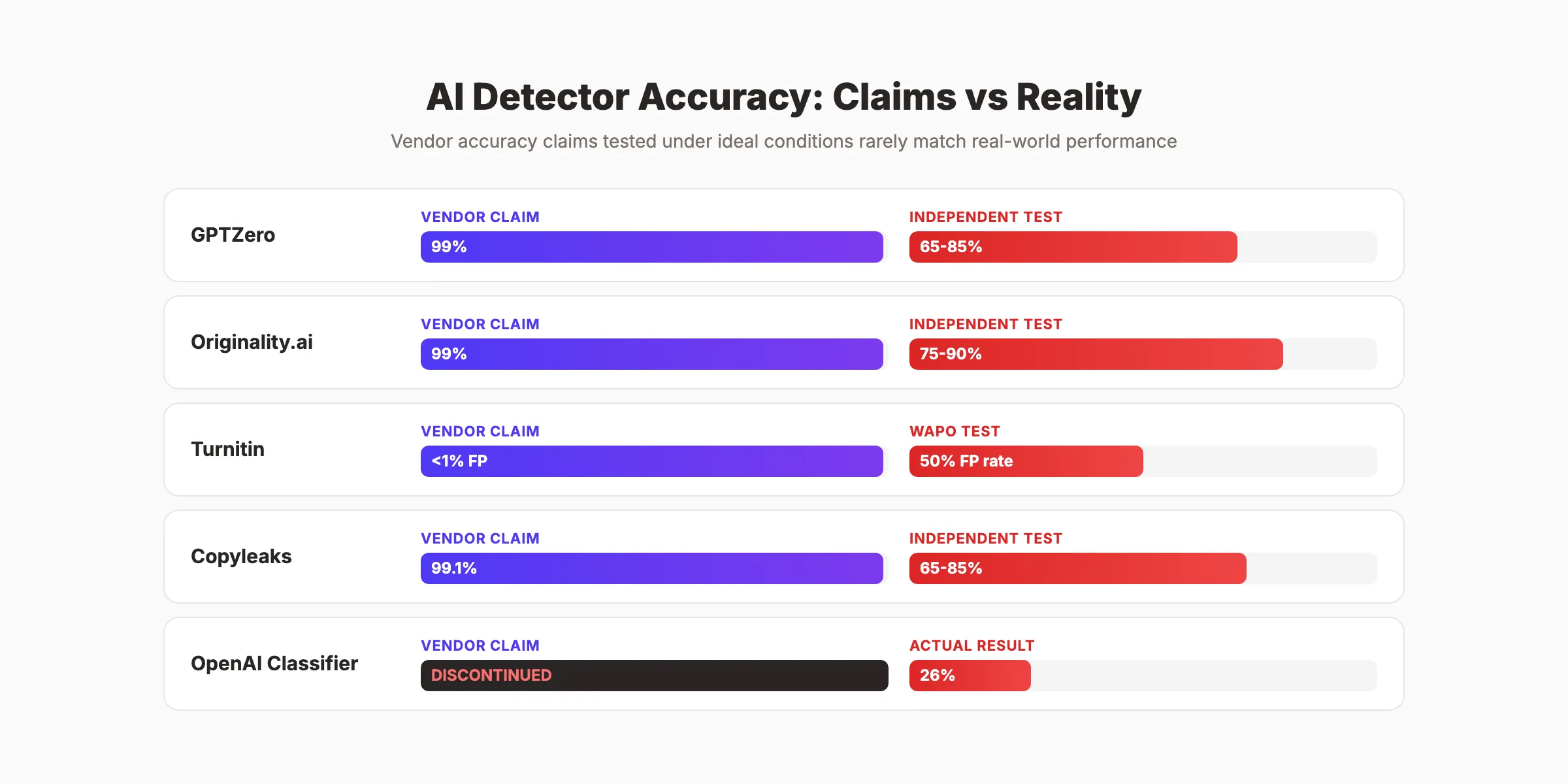

Vendor Claims vs Independent Testing

| Detector | Vendor Accuracy Claim | Independent Test Results |

|---|---|---|

| GPTZero | 99% accuracy, 1% false positive | Varies widely by text type. Flagged 97% of TOEFL essays. |

| Originality.ai | 99% accuracy, 0.5% false positive | Strong on pure AI text. Weaker on edited/mixed content. |

| Turnitin | <1% document-level false positive | Washington Post found 50% false positive rate in testing. 4% sentence-level false positive rate per Turnitin. |

| Copyleaks | 99.1% accuracy claimed | Independent tests show 65-85% range depending on model. |

| OpenAI Classifier | N/A (discontinued) | 26% true positive, 9% false positive. Shut down July 2023. |

The pattern is clear. Vendors test under ideal conditions (pure AI text vs pure human text). Real-world content is messy. It is edited, mixed, paraphrased, and translated. Accuracy drops dramatically in those conditions.

An IACIS 2025 study found that only 5 of over 12 popular detectors scored above 70% accuracy on mixed content. For paraphrased AI content, accuracy frequently drops below 50%.

The False Positive Problem

False positives are the real danger. A false positive means the detector flags human-written text as AI-generated.

This is not a theoretical problem. More than 40% of surveyed 6th-12th grade teachers used AI detection tools during the last school year, according to EdWeek. Students are losing grades, facing academic misconduct charges, and experiencing genuine distress over false accusations.

For content professionals, false positives create a different problem. Clients refuse to pay for work flagged as AI-generated. Editors reject articles. The detector becomes judge, jury, and executioner with no appeals process.

Why Results Vary Between Tools

Paste the same text into 5 different detectors and you will get 5 different scores. This happens because each tool uses different training data, different model architectures, and different scoring thresholds.

Some tools are calibrated to minimize false positives (fewer innocent people flagged). Others are calibrated to maximize true positives (catch more AI text, even if some humans get caught). Neither approach is wrong. But the difference means detector results are not interchangeable.

Chapter 3: The ESL Bias Problem

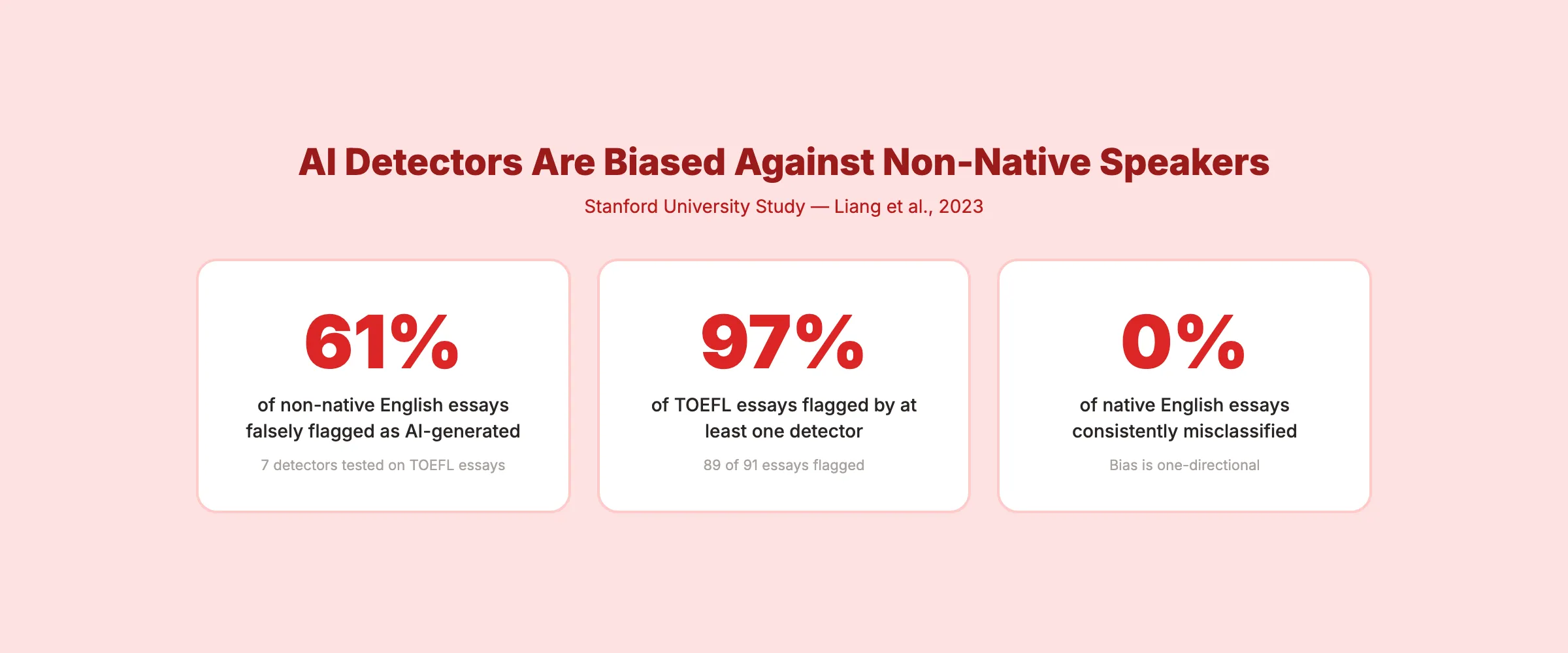

This is the most damning finding in AI detection research. AI detectors are systematically biased against non-native English speakers.

The Stanford Study

Researchers at Stanford tested 7 popular AI detectors on essays written by native and non-native English speakers. The results, published on arXiv, were alarming:

- 61.22% of TOEFL essays by non-native English speakers were falsely flagged as AI-generated

- 97% (89 of 91) TOEFL essays were flagged by at least one of the 7 detectors

- 0% of native English speaker essays were consistently misclassified

The bias exists because non-native speakers tend to use simpler vocabulary, shorter sentences, and more predictable grammar patterns. These are the exact same signals that AI detectors associate with machine-generated text.

Real Consequences

The Markup investigated cases where international students were falsely accused of using AI. Students faced academic misconduct charges, failed courses, and damaged academic records based entirely on detector output.

NPR reported on the psychological toll. Students described the experience as “mentally exhausting” because they could not prove they wrote their own work. The tools offer no path to exoneration.

Why This Matters for SEO

If you publish content written by non-native English speakers, that content is more likely to trigger AI detection flags. This is relevant for:

- International content teams

- Outsourced content production

- Multilingual SEO campaigns

- Guest contributors from diverse backgrounds

The solution is not to stop working with non-native writers. The solution is to stop treating detector output as definitive proof of anything. Use detectors as one signal among many, never as the sole arbiter.

For teams scaling blog content with AI, understanding these limitations prevents overreliance on flawed tools.

Your SEO team. $99 per month. 30 optimized articles, published automatically. Human-reviewed before every publication. Start for $1 →

Chapter 4: Top AI Content Detection Tools Compared

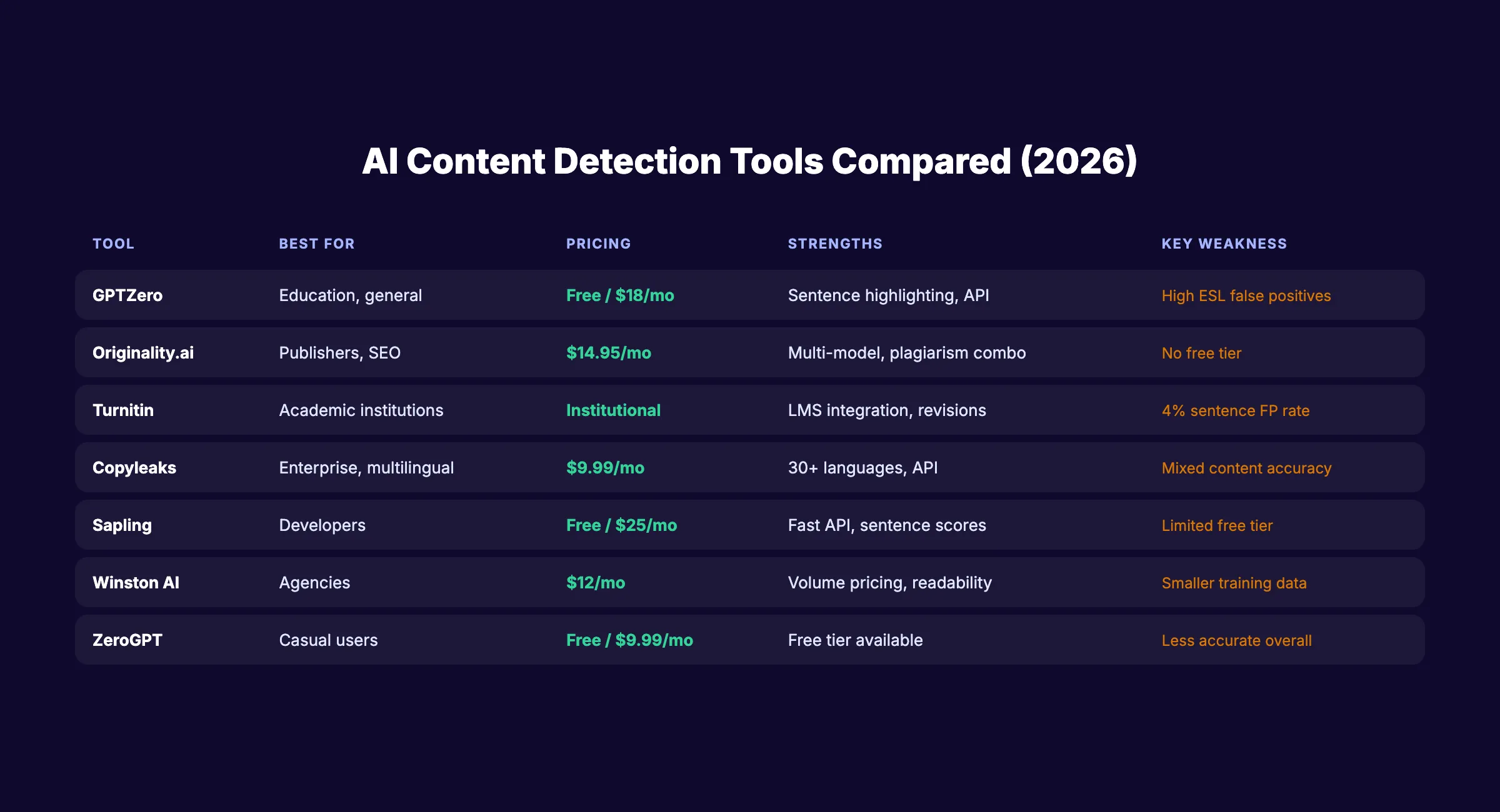

Not all detectors are equal. Here is a comparison based on independent testing, pricing, and specific strengths.

| Tool | Best For | Pricing | Strengths | Weaknesses |

|---|---|---|---|---|

| GPTZero | Education, general use | Free (10K words/mo), $18/mo Pro | Sentence-level highlighting, API available | High false positive rate on ESL text |

| Originality.ai | Content publishers, SEO | $14.95/mo (2,000 scans) | Multi-model detection, plagiarism combo, Chrome extension | No free tier, aggressive marketing |

| Turnitin | Academic institutions | Institutional pricing only | Deep LMS integration, revision tracking | 4% sentence-level false positive rate |

| Copyleaks | Enterprise, multilingual | $9.99/mo (25 pages) | 30+ languages, API, source code detection | Accuracy drops on mixed content |

| Sapling | Developers, API-first | Free (2,000 chars), $25/mo | Fast API, sentence-level scores | Limited free tier |

| Winston AI | Content agencies | $12/mo (80,000 words) | Readability scoring, plagiarism check | Smaller training dataset |

| ZeroGPT | Casual users | Free (basic), $9.99/mo | Free tier available | Less accurate than paid alternatives |

Which Tool Should You Use?

For SEO content teams: Originality.ai offers the best combination of detection accuracy and content workflow features. The plagiarism check plus AI detection in one tool saves time.

For educators: Turnitin remains the standard for LMS integration. But pair it with human judgment. Never rely on the score alone.

For freelance writers: GPTZero offers the most generous free tier. Use it to self-check before submitting work. If flagged, revise rather than argue.

For agencies managing multiple clients: Copyleaks or Winston AI offer volume-based pricing that scales better than per-scan models.

The bottom line: no single tool is reliable enough to make definitive judgments. Use detectors as one input in a larger quality process. Check our guide on the difference between AI and human writers for a broader framework on content quality assessment.

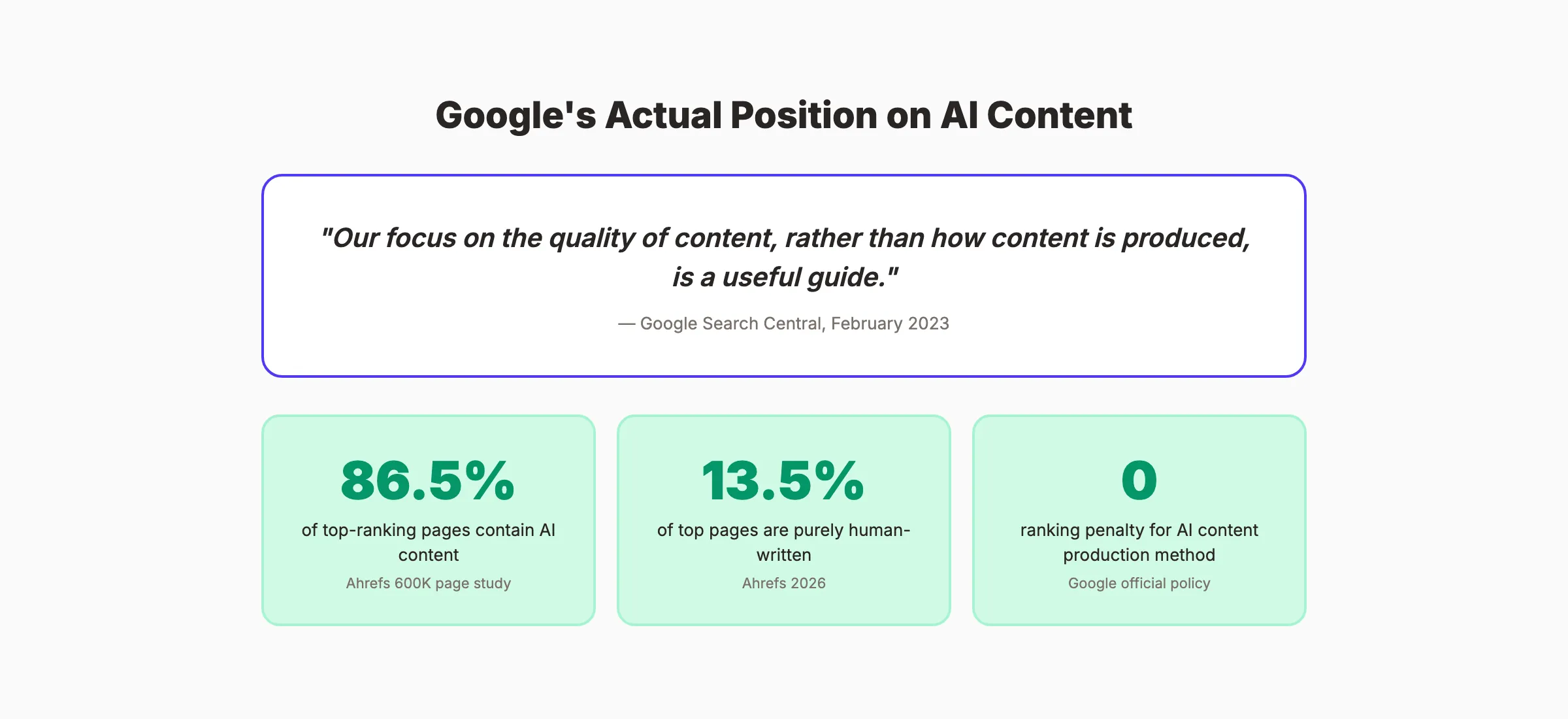

Chapter 5: What Google Actually Says About AI Content

Most AI detection anxiety in SEO comes from a misunderstanding of Google’s position. Here is what Google has actually stated.

Google’s Official Stance

In February 2023, Google published clear guidance on AI content and search. The key statement:

“Our focus on the quality of content, rather than how content is produced, is a useful guide.”

Google does not penalize content for being AI-generated. Google penalizes content for being low-quality, thin, or manipulative regardless of how it was produced. A well-researched, accurate, helpful AI-assisted article will outperform a poorly written human article every time.

What the Data Shows

Ahrefs analyzed 600,000 pages ranking in the top 20 of Google search results. The findings:

- 86.5% of top-ranking pages contain some AI-generated content

- Only 13.5% are purely human-written

- AI content presence had no negative correlation with rankings

The data is clear. AI content ranks. The quality of the content determines rankings, not the production method.

When AI Content Does Get Penalized

Google targets content that violates its spam policies. That includes:

- Scaled content abuse: Publishing thousands of low-quality pages to manipulate rankings

- Thin content: Pages with no original value, insight, or expertise

- Misleading content: Fake reviews, fabricated expertise signals, deceptive practices

These policies apply to both human and AI content equally. A human writing 500 thin articles for link manipulation gets the same penalty as AI doing it.

For teams publishing SEO content at scale, the lesson is simple: quality and helpfulness matter. The production method does not.

Should You Run AI Detection on Your Content?

For SEO purposes, running AI detection on your own content serves one purpose: quality control. If a detector flags your content as highly likely AI-generated, that may signal the content is too predictable, too uniform, or lacking original insight. Those are legitimate quality concerns worth addressing.

Do not run detection as a pass/fail gate. Use it as a quality signal. If the content is helpful, accurate, and well-written, the detection score is irrelevant for Google rankings.

3,500+ blogs published. 92% average SEO score. See what Stacc can do for your site. Start for $1 →

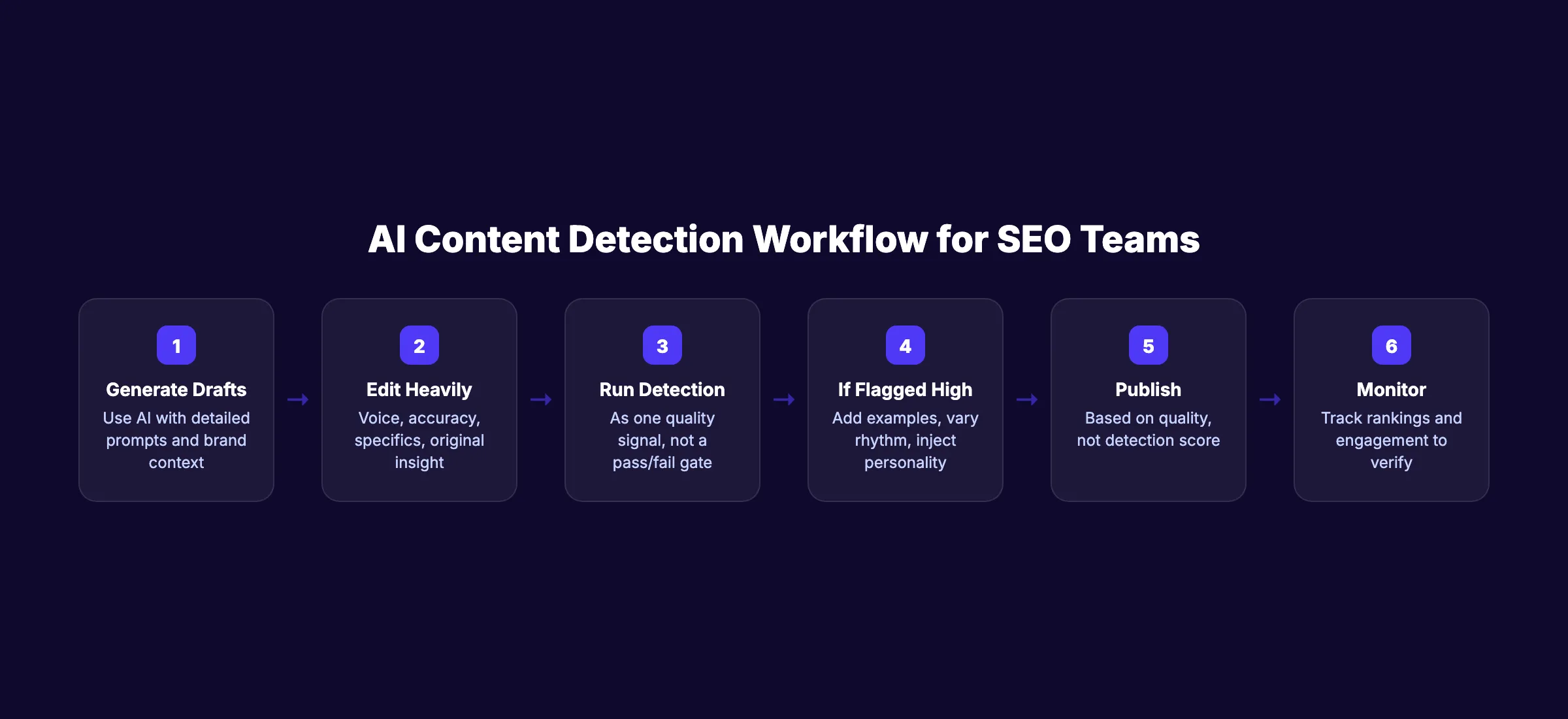

Chapter 6: AI Content Detection for SEO Professionals

As an SEO professional, your relationship with AI detection tools should be practical, not paranoid.

When AI Detection Is Useful

Freelancer quality control. If you hire writers and want to verify they did original work rather than pasting ChatGPT output, a detection check is one reasonable quality signal. Pair it with plagiarism checks and editorial review.

Content audit tool. Running existing content through a detector can identify articles that read too formulaically. High AI scores on human-written content often indicate the writing needs more personality, specifics, and original insight.

Client reporting. Some clients require AI detection reports as part of content deliverables. Knowing how to interpret and present detector results professionally builds trust.

When AI Detection Is a Waste of Time

As a Google ranking signal. Google has stated clearly that production method does not determine rankings. Running detection to protect rankings is solving a problem that does not exist.

As proof of authorship. No detector can definitively prove who wrote what. A 60% AI score does not mean 60% of the content is AI-generated. It means the detector’s model assigned a 60% probability based on statistical patterns.

As a gatekeeper for publication. If the content meets your quality standards, satisfies user intent, and demonstrates expertise, publishing it is the right call regardless of detection scores.

A Better Workflow for AI-Assisted Content

- Generate drafts with AI using detailed prompts and brand context

- Edit heavily for voice, accuracy, specifics, and original insight

- Run a detection check as one quality signal (not a pass/fail gate)

- If flagged high: Review for formulaic patterns, add personal examples, vary sentence structure

- Publish based on quality, not detection score

- Monitor rankings and engagement to verify performance

Learn to humanize AI content effectively. Humanization is not about tricking detectors. It is about making content genuinely better.

For teams using ChatGPT for SEO content, this workflow separates high-quality AI-assisted articles from low-effort AI spam.

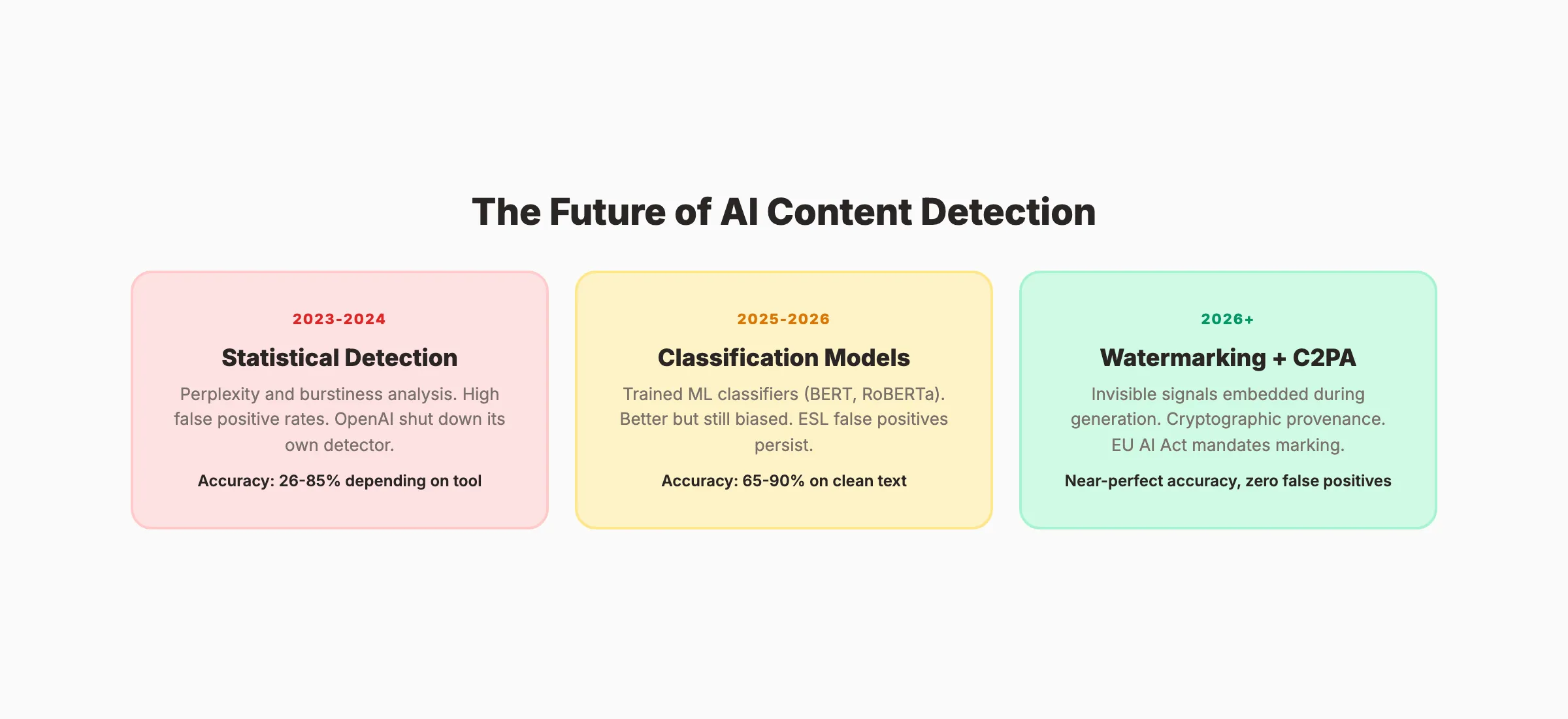

Chapter 7: The Future of AI Content Detection

Current detection methods (perplexity, burstiness, classification) are reaching their limits. The next generation of detection uses fundamentally different approaches.

Watermarking: Detection at the Source

Rather than analyzing finished text, watermarking embeds invisible signals during generation. The AI model slightly biases its word choices to create a statistical pattern that a detector can verify later.

OpenAI, Google DeepMind, and Meta have all developed watermarking systems. The advantage: near-perfect accuracy on watermarked text with virtually zero false positives. The limitation: it only works on text generated by participating models. It cannot detect AI text from unwatermarked models.

C2PA: Content Provenance Standards

The Coalition for Content Provenance and Authenticity (C2PA) is developing standards for tracking content origin. C2PA metadata records who created content, what tools were used, and whether AI was involved.

Major platforms including Adobe, Microsoft, Google, and Intel support C2PA. The standard embeds cryptographic signatures that verify content history. Unlike statistical detection, provenance cannot be fooled by paraphrasing or editing.

The EU AI Act: Mandatory Disclosure by August 2026

The EU AI Act Article 50 requires AI-generated content to carry machine-detectable marking. The provision becomes fully applicable on August 2, 2026.

This regulation shifts the burden from detection (analyzing after the fact) to disclosure (marking at creation). For businesses operating in the EU or serving EU audiences, compliance planning should start now.

What This Means for SEO

The future of AI content detection is not better statistical classifiers. It is provenance and watermarking built into the generation process.

For SEO professionals, the practical takeaway: focus on content quality today. The detection environment will change fundamentally within 12-18 months as watermarking and C2PA standards roll out. Building workflows around current detection accuracy is building on sand.

Review the latest AI content statistics to track how adoption and detection capabilities evolve across the industry.

Rank everywhere. Do nothing. Blog SEO, Local SEO, and Social on autopilot. Stacc starts at $99/mo with a $1 trial. Start for $1 →

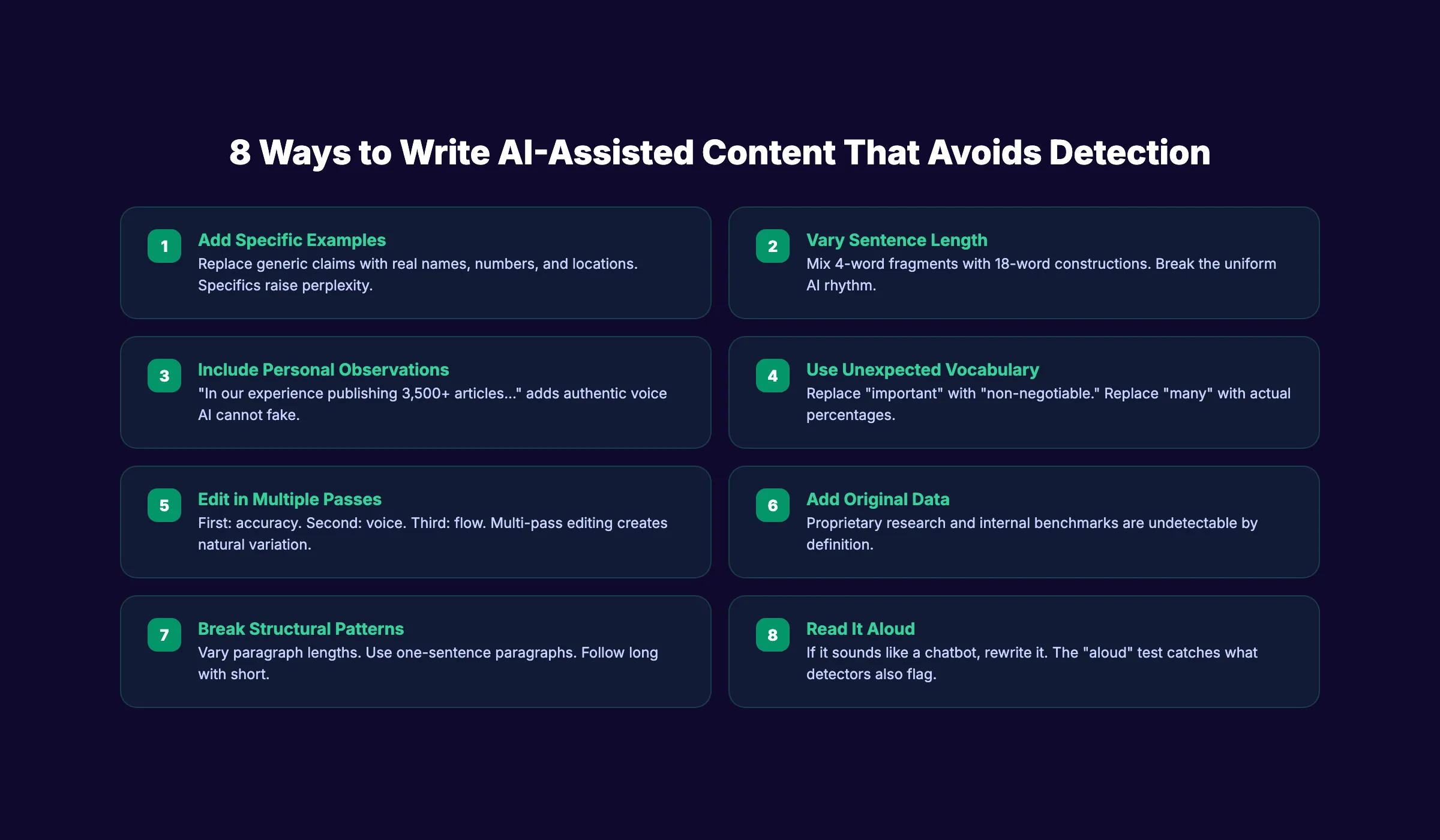

Chapter 8: How to Write AI-Assisted Content That Avoids Detection Flags

This is not about tricking detectors. It is about writing better content that happens to avoid the patterns detectors flag.

Why “Better Writing” Beats Detection

Detectors flag predictability. Better writing is inherently less predictable. When you add original examples, vary your rhythm, and inject personality, you produce content that reads better for humans and scores lower on detectors simultaneously.

8 Practical Techniques

1. Add specific examples. Replace “many businesses struggle with SEO” with “a 12-person law firm in Denver saw traffic drop 40% after a core update.” Specifics raise perplexity and improve content quality.

2. Vary sentence length deliberately. Mix 4-word fragments with 18-word constructions. Break the uniform rhythm that AI produces by default.

3. Include personal observations. “In our experience publishing 3,500+ articles…” adds authentic perspective that AI cannot fabricate convincingly.

4. Use unexpected vocabulary. AI defaults to common word choices. Replace “important” with “non-negotiable” or “critical.” Replace “many” with “87%” using actual data.

5. Edit in passes, not all at once. First pass for accuracy. Second pass for voice. Third pass for flow. Multi-pass editing produces natural variation that single-draft AI content lacks.

6. Add original data or research. Detectors flag generic claims. Original survey results, internal benchmarks, or proprietary case studies are inherently undetectable because they do not exist in training data.

7. Break structural patterns. AI writes uniform paragraphs. Vary your structure. Use one-sentence paragraphs for emphasis. Follow a 50-word paragraph with a 15-word paragraph.

8. Read it aloud. If any section sounds like a chatbot, rewrite it. The “read aloud” test catches robotic phrasing that detectors also flag.

These techniques align with the content strategy approach we recommend for any team using AI in their production workflow.

FAQ

How does AI content detection work?

AI detectors analyze text for statistical patterns associated with machine-generated writing. They measure perplexity (how predictable the text is), burstiness (how varied the sentences are), and trained classification signals. The detector outputs a probability score, not a definitive verdict. No detector can prove with certainty how text was produced.

Are AI content detectors accurate?

Accuracy varies widely. Vendors claim 95-99% accuracy, but independent tests show 65-90% on clean AI text and below 50% on paraphrased or edited content. The Stanford study found a 61% false positive rate on essays by non-native English speakers. Use detector results as one signal, not as proof.

Does Google penalize AI-generated content?

No. Google has stated that it focuses on content quality, not production method. Ahrefs analyzed 600,000 pages and found that 86.5% of top-ranking pages contain some AI content. Google penalizes thin, manipulative, and low-quality content regardless of whether humans or AI produced it.

Can AI detectors be bypassed?

Yes. Paraphrasing, editing, and humanizer tools reduce detection scores. Studies show accuracy drops dramatically on edited AI content. This is why the industry is shifting toward watermarking and provenance standards (C2PA) rather than post-hoc statistical detection.

Are AI detectors biased against non-native English speakers?

Yes. The Stanford study found that 7 popular AI detectors falsely flagged 61% of TOEFL essays by non-native speakers as AI-generated. Simpler vocabulary and sentence structures used by ESL writers match the patterns detectors associate with AI text.

What is the best AI content detector?

No single detector is definitively “best.” Originality.ai and GPTZero perform strongest in independent comparisons for general use. Turnitin dominates in education. The best approach is using any detector as one quality signal within a broader editorial process, not as the final judgment.

AI content detection is a useful quality signal, not an authoritative verdict. The technology flags patterns, not intent. The accuracy limitations, ESL bias, and false positive rates mean no detector should serve as the sole judge of content quality or authorship. Focus on writing content that serves your audience well. That is what Google rewards. That is what builds trust. And it is what the most accurate detector of all, your reader, actually cares about.

Written by

Siddharth GangalSiddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.

30 SEO blog articles published every month

Keyword-optimized, scheduled, and live on your site. Automatically.

30-day trial · Cancel anytime

theStacc

Stop writing SEO content manually

30 blog articles, 30 GBP posts, and social media content. Published every month. Automatically.

Start Your $1 Trial$1 for 3 days · Cancel anytime