Claude vs GPT for SEO Content: Which Writes Better?

We tested Claude and GPT across 8 SEO content types. See which AI writes better blog posts, meta tags, and long-form content. Updated April 2026.

Siddharth Gangal • 2026-04-02 • Content Strategy


86.5% of top-ranking content now uses AI assistance. That number comes from an Ahrefs analysis of first-page results across 10,000 keywords.

The question is not whether to use AI for SEO content. The question is which AI. Claude vs GPT for SEO content is the decision that shapes your output quality, production speed, and cost per article.

We have published 3,500+ blog posts across 70+ industries using both models. This is not a spec sheet comparison. This is a breakdown of how each model performs when you ask it to write content that ranks.

Here is what you will learn:

- How Claude and GPT compare across 8 specific SEO content types

- Which model produces content that scores lower on AI detection

- The true cost per article for each model at scale

- Prompt strategies that improve output from both

- When to use each model in your content marketing strategy

Quick Verdict

Best for long-form SEO content: Claude

Best for short-form SEO copy: GPT

Best for production volume: GPT (faster output, lower API cost per token)

Claude produces more natural prose with lower AI detection rates. GPT produces more keyword-dense copy faster and cheaper at scale. The best content teams use both.

The Models in April 2026

Both platforms have multiple models. Choosing the right one for SEO content matters more than choosing the platform.

| | Claude (Anthropic) | GPT (OpenAI) |
|---|---|---|
| Flagship | Claude Opus 4.6 | GPT-5.4 Pro |
| Best value | Claude Sonnet 4.6 | GPT-5.4 |
| Budget | Claude Haiku 4.5 | GPT-5.4-mini |
| Context window | 1M tokens (all models) | 272K (GPT-5.4), 128K (GPT-4o) |
| Max output | 128K tokens | 16K-32K tokens |
| Consumer price | $20/mo (Pro) | $20/mo (Plus) |

The context window gap matters for SEO content. Claude processes an entire site audit, competitor analysis, brand guidelines, and 5 competitor articles in a single prompt. GPT requires chunking the same task across multiple conversations. For teams running large content audits, this is a practical bottleneck.
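
To check whether a prompt bundle fits in one request, a rough token estimate is usually enough. The sketch below assumes about 1.3 tokens per English word, which is a rule of thumb and not a tokenizer. The bundle names and word counts are hypothetical; the window sizes come from the table above.

```python
# Rough token budgeting for a single-prompt content audit.
# Assumption: ~1.3 tokens per English word. Real tokenizers differ by model,
# so treat every result here as an estimate.
TOKENS_PER_WORD = 1.3

# Context windows in tokens, as listed in the comparison table.
CONTEXT_WINDOWS = {
    "claude": 1_000_000,
    "gpt-5.4": 272_000,
    "gpt-4o": 128_000,
}


def estimate_tokens(word_count: int) -> int:
    """Convert a word count into an approximate token count."""
    return round(word_count * TOKENS_PER_WORD)


def fits_in_context(word_counts: dict[str, int], model: str,
                    reserved_for_output: int = 8_000) -> bool:
    """Return True if all documents plus reserved output room fit the window."""
    total = sum(estimate_tokens(w) for w in word_counts.values())
    return total + reserved_for_output <= CONTEXT_WINDOWS[model]


# Hypothetical bundle: these word counts are made up for illustration.
bundle = {
    "site_audit_export": 120_000,
    "competitor_articles": 25_000,
    "brand_guidelines": 4_000,
}

for model in CONTEXT_WINDOWS:
    print(model, fits_in_context(bundle, model))
```

Swap in your own word counts before deciding whether a task needs chunking.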

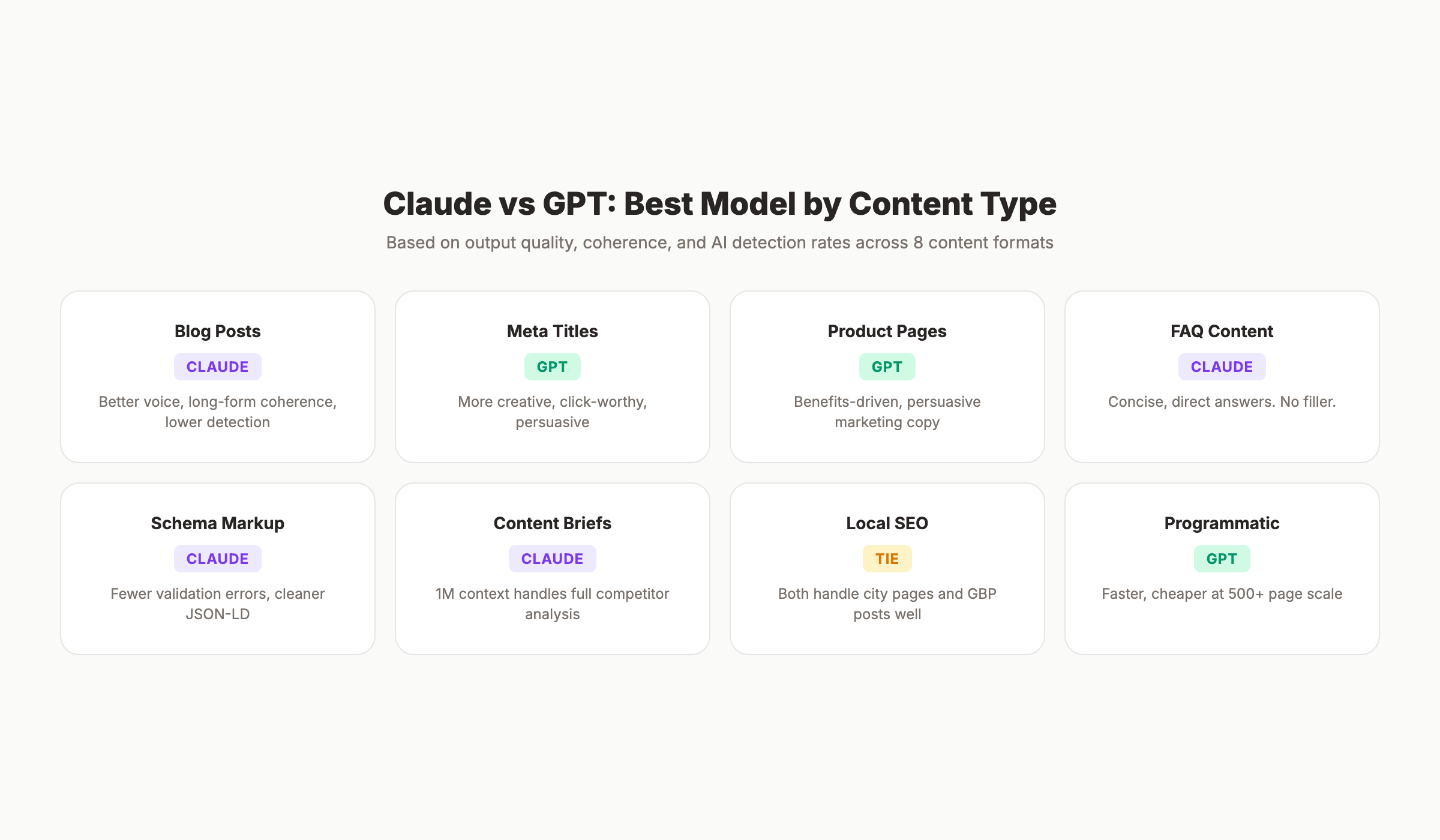

Head-to-Head: 8 SEO Content Types

1. Blog Posts (1,500-5,000 Words)

Claude wins.

Long-form blog content is where Claude separates itself. Three factors drive the gap:

Sentence variety. Claude naturally alternates between short and long sentences. GPT defaults to a predictable rhythm. Five-word sentence. Then a twelve-to-fifteen word explanation. Then another short one. This pattern triggers AI detection tools.

Structural coherence. Claude maintains argument threads across 3,000+ words without repeating itself. GPT-4o loses coherence after roughly 2,000 words. GPT-5.4 improved this, but Claude’s 1M token context still produces tighter long-form content.

Voice consistency. Give Claude a brand voice guide and it holds that voice for the entire article. GPT drifts back to its default register after 500-800 words, especially in longer pieces.

For a deep dive on blog structure, read our blog post structure guide.

2. Meta Titles and Descriptions

GPT wins.

GPT generates more click-worthy meta titles. It instinctively adds power words, numbers, and emotional triggers that improve click-through rates.

Claude writes accurate, descriptive meta titles. They are technically correct. They are also boring. A Claude-generated title reads like an encyclopedia entry. A GPT-generated title reads like a headline.

For meta descriptions, the gap narrows. Both models produce usable descriptions within the roughly 155 characters that Google typically displays. GPT adds more persuasive language. Claude is more precise about what the page actually contains.

Practical tip: Generate 10 title variations with GPT. Pick the best one. Then ask Claude to verify it matches the page content and stays under 60 characters.
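
The length check in that tip is easy to automate. A minimal sketch, assuming the 60 and 155 character limits used in this article (search engines actually truncate by pixel width, so these are approximations). The function names are ours.

```python
# Limits taken from this article: 60 characters for titles, 155 for descriptions.
TITLE_MAX = 60
DESCRIPTION_MAX = 155


def check_meta(title: str, description: str) -> list[str]:
    """Return a list of human-readable problems; an empty list means both fit."""
    problems = []
    if len(title) > TITLE_MAX:
        problems.append(f"title is {len(title)} chars (max {TITLE_MAX})")
    if len(description) > DESCRIPTION_MAX:
        problems.append(
            f"description is {len(description)} chars (max {DESCRIPTION_MAX})"
        )
    return problems


def shortlist(titles: list[str]) -> list[str]:
    """Keep only the title candidates that fit the limit, shortest first."""
    return sorted((t for t in titles if len(t) <= TITLE_MAX), key=len)
```

Run `shortlist` on the 10 generated variations first, then `check_meta` on the final title and description pair.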

3. Product and Service Page Copy

GPT wins slightly.

Product pages need persuasive, benefits-driven copy. GPT’s default writing style leans toward marketing language. It naturally emphasizes outcomes over features.

Claude writes more balanced copy. It includes benefits but also adds caveats, context, and nuance. For high-trust industries (healthcare, finance, legal), Claude’s balanced approach builds more credibility. For e-commerce SEO product descriptions, GPT’s persuasive style converts better.

4. FAQ and People Also Ask Content

Claude wins.

FAQ content needs direct, specific answers in 2-4 sentences. Claude excels at this format. It answers the question first, then provides exactly enough context. No filler.

GPT tends to over-explain FAQ answers. A question that needs 40 words gets 120. This hurts SEO because Google’s featured snippet algorithm favors concise, direct answers that match the query exactly.

Claude also handles batch FAQ generation better. Give it 20 questions and it produces consistently formatted answers. GPT’s answers drift in length and style after the first 8-10 questions.

5. Schema Markup and Structured Data

Claude wins.

An SE Ranking test found that Claude produced schema markup with 1 non-critical validation issue. GPT produced 5 issues in the same test. Claude generates cleaner JSON-LD with proper nesting, correct property types, and fewer deprecated fields.

For complex schema (FAQ + Article + Organization combined), Claude handles the nesting without breaking the structure. GPT occasionally generates valid JSON that fails Google’s Rich Results Test due to missing required properties.

Use Claude for production-ready structured data. Use GPT for quick schema prototypes when you plan to validate manually.
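
Whichever model writes the schema, a local pre-check catches missing properties before you paste anything into a validator. The sketch below builds a schema.org FAQPage block and lists absent fields. It is a convenience check under our own assumptions about which fields to look for, not a replacement for Google’s Rich Results Test.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


def missing_fields(jsonld: str) -> list[str]:
    """List FAQPage properties that are absent or empty in a JSON-LD string."""
    data = json.loads(jsonld)
    missing = []
    if data.get("@type") != "FAQPage":
        missing.append("@type")
    entities = data.get("mainEntity") or []
    if not entities:
        missing.append("mainEntity")
    for i, item in enumerate(entities):
        if not item.get("name"):
            missing.append(f"mainEntity[{i}].name")
        if not (item.get("acceptedAnswer") or {}).get("text"):
            missing.append(f"mainEntity[{i}].acceptedAnswer.text")
    return missing
```

Pass model-generated JSON-LD to `missing_fields` and send it back for a fix when the list is not empty.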


6. Content Briefs and Outlines

Claude wins.

A content brief requires analyzing competitor content, identifying gaps, structuring sections, and planning internal links. Claude’s 1M token context window makes it the obvious choice.

Paste 5 competitor articles (roughly 25,000 words total) into Claude with your keyword data. It identifies what every competitor covers, what they miss, and where your article can differentiate. That volume alone is only a few tens of thousands of tokens, so it fits in GPT-4o’s 128K window, but the headroom disappears quickly once you add a site audit, brand guidelines, and prior drafts. GPT-5.4 handles more but still caps at 272K tokens.

Claude also structures outlines with more strategic depth. It suggests H2/H3 hierarchies that reflect search intent rather than just listing topics.

7. Local SEO Content

Tie.

Local SEO content includes city pages, service area pages, and Google Business Profile posts. Both models handle this well.

GPT generates more varied local content when producing multiple city pages. Claude produces more consistent quality but can sound repetitive across 20+ location pages unless you specifically prompt for variation.

For GBP posts (short, 150-300 word updates), both models produce similar quality. The format is simple enough that model differences disappear.

8. Programmatic SEO Content

GPT wins at scale. Claude wins at quality.

Programmatic SEO generates hundreds of pages from templates and data. Speed and cost matter as much as quality.

GPT-5.4-mini processes programmatic templates 40-60% faster than Claude Haiku at roughly half the API cost. For 500+ pages where each needs 200-400 words of unique content, GPT is the practical choice.

Claude produces better individual pages. Each page reads more naturally. But the cost and time difference at scale (500+ pages) makes GPT the default for programmatic work.
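
The template-plus-data step works the same way for either model. A minimal sketch using Python’s standard `string.Template`. The template wording, field names, and rows are hypothetical.

```python
from string import Template

# Hypothetical template and rows; the field names are illustrative only.
PAGE_PROMPT = Template(
    "Write 200-400 words of unique copy for a $service page in $city. "
    "Mention $landmark once. Do not reuse sentences from other city pages."
)

rows = [
    {"service": "roof repair", "city": "Austin", "landmark": "Lady Bird Lake"},
    {"service": "roof repair", "city": "Denver", "landmark": "Union Station"},
]


def build_prompts(template: Template, data: list[dict[str, str]]) -> list[str]:
    """Fill the template once per row; raises KeyError if a field is missing."""
    return [template.substitute(row) for row in data]


prompts = build_prompts(PAGE_PROMPT, rows)
print(len(prompts))
print(prompts[0])
```

Failing loudly on a missing field is deliberate. At 500+ pages, a silent blank in one prompt is hard to spot after the fact.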

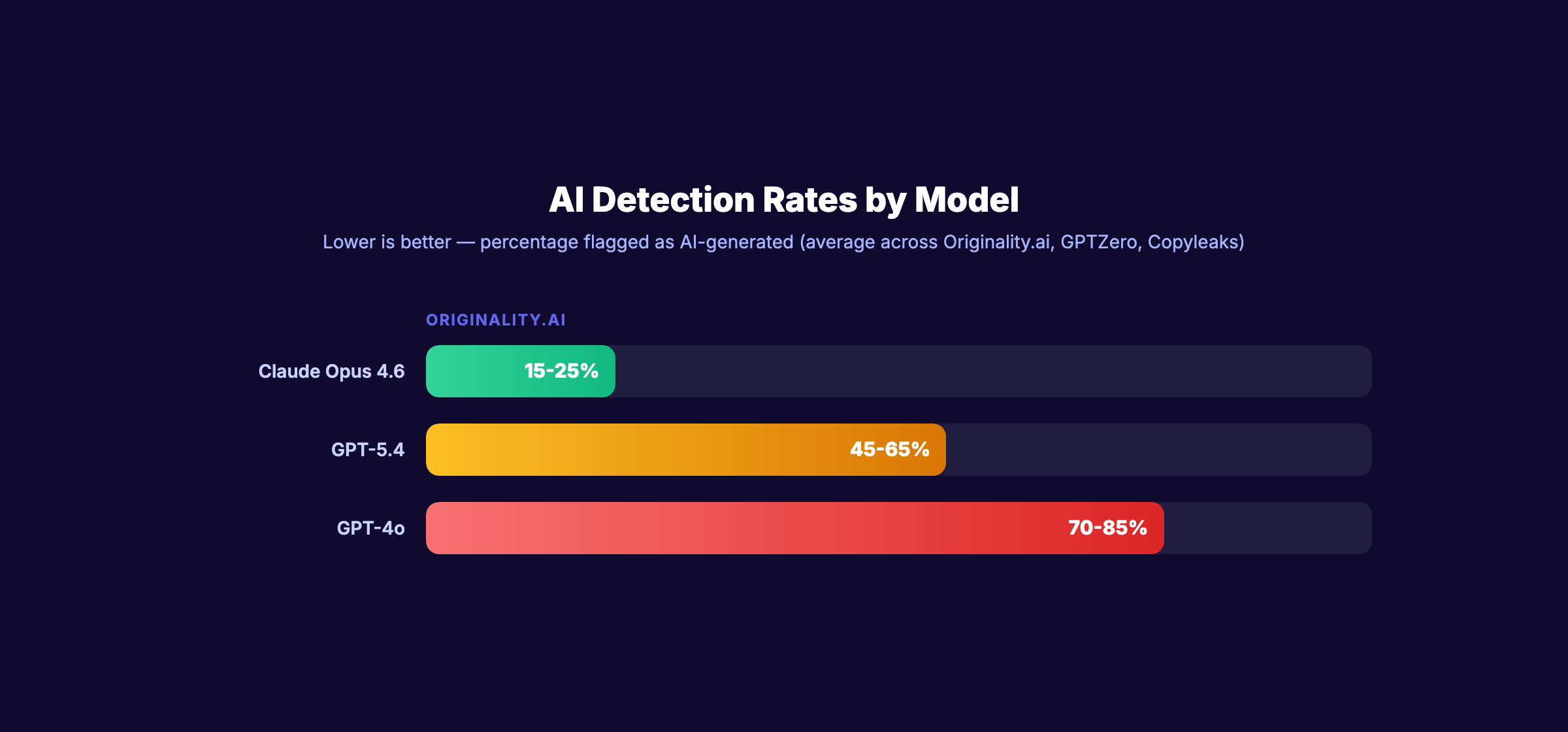

AI Detection: Which Model Passes?

AI detection matters for SEO content. Not because Google penalizes AI content directly. Google evaluates quality, not production method. But readers notice robotic writing. And editors waste time rewriting obvious AI patterns.

| Detection Tool | Claude Opus 4.6 | GPT-5.4 | GPT-4o |
|---|---|---|---|
| Originality.ai | 15-25% AI detected | 45-65% AI detected | 70-85% AI detected |
| GPTZero | 10-20% AI detected | 35-55% AI detected | 60-80% AI detected |
| Copyleaks | 20-30% AI detected | 40-60% AI detected | 65-80% AI detected |

These ranges vary by topic, length, and prompt quality. But the pattern holds: Claude produces content that AI detection tools flag less often.

The reason is structural. Claude uses more varied sentence openers, irregular paragraph lengths, and topic transitions that do not follow predictable patterns. GPT defaults to parallel structures and formulaic transitions that detection algorithms target.
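
Editors can put a rough number on sentence rhythm before publishing. The sketch below measures how much sentence length varies in a draft. It is a simple editorial heuristic of our own, not how any detection tool works, and the splitting rule is deliberately naive.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence length (0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)
```

Compare the score of a model draft against a few of your own human-written posts. The absolute number means little on its own.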

Why does this matter for SEO? Because AI detection correlates with reader trust. Content that reads like a machine wrote it gets higher bounce rates. Higher bounce rates send negative signals to Google. The goal is not to fool detection tools. The goal is to produce content that reads naturally.

Claude achieves this more consistently out of the box. GPT achieves it with careful prompting and post-editing. Both require human review before publishing.

For strategies on reducing AI detection, read our guide on humanizing AI content.

Cost Per Article: The Real Math

The $20 per month subscription price is misleading. At production scale, API pricing determines cost per article.

API Cost Comparison

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Prompt Caching |
|---|---|---|---|
| Claude Opus 4.6 | $15.00 | $75.00 | 90% discount |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 90% discount |
| Claude Haiku 4.5 | $0.25 | $1.25 | 90% discount |
| GPT-5.4 | $2.50 | $10.00 | 50% discount |
| GPT-5.4-mini | $0.15 | $0.60 | 50% discount |
| GPT-4o | $2.50 | $10.00 | 50% discount |

Cost Per 2,000-Word Blog Post

A typical 2,000-word SEO blog post uses roughly 3,000-4,000 input tokens (prompt + brief) and 3,000 output tokens (the article).

| Model | Est. Cost Per Article | Quality Rating |
|---|---|---|
| Claude Sonnet 4.6 | $0.05-$0.08 | Excellent |
| GPT-5.4 | $0.04-$0.06 | Good |
| Claude Haiku 4.5 | $0.005-$0.008 | Adequate |
| GPT-5.4-mini | $0.002-$0.004 | Adequate |
| Claude Opus 4.6 | $0.25-$0.35 | Excellent |
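
The per-article estimates follow from the API prices and the token counts above. A minimal sketch of that arithmetic, assuming a single generation pass with no caching, retries, or revision rounds. The prices are copied from the API cost table.

```python
# Prices in USD per 1M tokens (input, output), copied from the API cost table.
PRICES = {
    "Claude Opus 4.6": (15.00, 75.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Haiku 4.5": (0.25, 1.25),
    "GPT-5.4": (2.50, 10.00),
    "GPT-5.4-mini": (0.15, 0.60),
}


def article_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the API cost in USD for one article, without caching."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# 3,000-4,000 input tokens and 3,000 output tokens per article.
for model in PRICES:
    low = article_cost(model, 3_000, 3_000)
    high = article_cost(model, 4_000, 3_000)
    print(f"{model}: ${low:.4f} to ${high:.4f}")
```

Single-pass figures land at or near the low end of the table’s ranges. Regenerations and editing passes add to the real cost.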

Claude’s 90% prompt caching discount changes the math for repeated workflows. If you use the same brand voice guide, content brief template, and style rules across 30 articles per month, Claude caches that context. The effective input cost of that shared context drops 90% after the first article.

GPT offers a 50% caching discount. Still helpful. But Claude’s advantage compounds with volume.

The caching advantage explained. Most SEO content workflows reuse the same system prompt: brand voice guidelines, formatting rules, keyword targets, and internal link lists. This reusable context can be 2,000-5,000 tokens. With Claude’s 90% caching discount, that context costs almost nothing after the first article. Output tokens are still billed in full, so the total saving scales with how much shared context each request carries: modest for a short system prompt, much larger when every request includes long brand guidelines or competitor research.
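
To see what caching does to a monthly bill, model the shared prompt separately from the rest of each request. The sketch below is ours and makes two simplifying assumptions: the cache never expires between articles, and writing to the cache costs the normal input price. Real APIs may bill cache writes differently and expire entries, so check current pricing pages before relying on the result.

```python
def monthly_cost(input_price: float, output_price: float, cache_discount: float,
                 shared_tokens: int, unique_tokens: int, output_tokens: int,
                 articles: int) -> float:
    """Monthly USD cost when a shared prompt prefix is cached after article 1.

    Prices are USD per 1M tokens. cache_discount is a fraction, e.g. 0.90.
    """
    per_million = 1_000_000
    shared_full = shared_tokens * input_price / per_million
    shared_cached = shared_full * (1 - cache_discount)
    # Article-specific input and all output are billed at the normal rate.
    rest = (unique_tokens * input_price + output_tokens * output_price) / per_million
    # The first article pays full price for the prefix; later ones hit the cache.
    return (shared_full + rest) + (articles - 1) * (shared_cached + rest)


# Example: Claude Sonnet 4.6 prices, 3,000 shared and 1,000 unique input tokens.
with_cache = monthly_cost(3.00, 15.00, 0.90, 3_000, 1_000, 3_000, 30)
without_cache = monthly_cost(3.00, 15.00, 0.00, 3_000, 1_000, 3_000, 30)
print(f"${with_cache:.2f} vs ${without_cache:.2f}")
```

Change `shared_tokens` to match your own system prompt. The discount only touches the shared input portion, so the size of that prompt decides how much you save.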

Bottom line: For quality-first content at moderate scale (10-50 articles per month), Claude Sonnet 4.6 offers the best balance. For high-volume programmatic content (100+ pages), GPT-5.4-mini wins on cost.


Prompt Strategies That Improve Both Models

The gap between Claude and GPT shrinks with better prompts. These patterns work for both but improve GPT output more dramatically.

1. Provide a Brand Voice Sample

Do not describe your brand voice. Show it. Paste 500 words of your best existing content and say “match this voice exactly.” Both models perform better with examples than instructions.

2. Front-Load Context

Both models weigh earlier tokens more heavily. Place your most important instructions (keyword, target audience, word count, format) in the first 200 words of your prompt. Place supporting context (competitor analysis, data points) after.

3. Use Section-by-Section Generation

For articles over 2,000 words, generate one H2 section at a time. This produces better output from both models. GPT benefits more because it avoids the coherence drift that occurs in long single-generation runs.
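
A minimal sketch of the section-by-section loop. The `generate` argument is a hypothetical placeholder for whatever function calls your model of choice. It is not part of any vendor SDK.

```python
from typing import Callable


def write_by_section(outline: list[str], brief: str,
                     generate: Callable[[str], str]) -> str:
    """Generate one H2 section per call, passing prior sections as context."""
    sections: list[str] = []
    for heading in outline:
        prompt = (
            f"{brief}\n\n"
            f"Article so far:\n{''.join(sections) or '(nothing yet)'}\n\n"
            f"Write only the section titled: {heading}"
        )
        # Store heading and body together so later prompts see the full draft.
        sections.append(f"{heading}\n\n{generate(prompt)}\n\n")
    return "".join(sections).rstrip()
```

Passing the draft so far into each prompt is one way to keep later sections from repeating earlier ones. For very long articles you may prefer to pass a summary instead.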

4. Specify What NOT to Write

Both models respond well to negative instructions. “Do not use transition phrases like furthermore, moreover, or additionally.” “Do not start any sentence with the word this.” “Do not use bullet points in this section.” Negative constraints produce more original output than positive instructions alone.
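
Negative instructions are easy to verify after the fact. A small sketch that counts banned phrases in a draft, using the three transition words from the example instruction above. The function name is ours.

```python
import re

# Phrases taken from the example instruction above.
BANNED = ["furthermore", "moreover", "additionally"]


def banned_hits(draft: str, banned: list[str] = BANNED) -> dict[str, int]:
    """Count case-insensitive whole-word occurrences of each banned phrase."""
    hits = {}
    for phrase in banned:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        count = len(re.findall(pattern, draft, flags=re.IGNORECASE))
        if count:
            hits[phrase] = count
    return hits
```

An empty result means the model followed the constraint. Anything else goes back for a rewrite.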

5. Include Target Internal Links

Provide a list of internal URLs with descriptions. “Link to /blog/on-page-seo-guide when discussing on-page optimization. Link to /glossary/search-intent when mentioning search intent.” Both models handle internal linking well when given specific targets. Without this, both hallucinate URLs.
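
Hallucinated URLs are the easiest failure to catch automatically. The sketch below assumes the draft comes back as markdown and compares every link target against the list you supplied. The allow-list holds the two example paths from this section.

```python
import re

# Example allow-list; replace with the URLs you actually gave the model.
ALLOWED_LINKS = {
    "/blog/on-page-seo-guide",
    "/glossary/search-intent",
}

# Matches the target of a markdown link such as [text](/path).
LINK_TARGET = re.compile(r"\[[^\]]*\]\(([^)\s]+)\)")


def unknown_links(markdown: str, allowed: set[str]) -> list[str]:
    """Return link targets in the draft that are not on the allow-list."""
    return [url for url in LINK_TARGET.findall(markdown) if url not in allowed]
```

Any path this returns was invented by the model or mistyped, and should be fixed before publishing.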

For more prompt techniques, read our guide on AI prompts for SEO articles.

Which Model for Which Content Type?

| Content Type | Recommended Model | Why |
|---|---|---|
| Blog posts (1,500-5,000 words) | Claude Sonnet 4.6 | Natural voice, long-form coherence |
| Pillar pages (5,000+ words) | Claude Opus 4.6 | Maximum quality, 1M context |
| Meta titles | GPT-5.4 | Creative, click-worthy output |
| Meta descriptions | Either | Both produce usable descriptions |
| FAQ content | Claude Sonnet 4.6 | Concise, direct answers |
| Product descriptions | GPT-5.4 | Persuasive, benefits-driven |
| Schema markup | Claude Sonnet 4.6 | Fewer validation errors |
| Content briefs | Claude Opus 4.6 | Handles competitor analysis at scale |
| City/location pages | GPT-5.4-mini | Cost-effective at volume |
| Social media posts | GPT-5.4 | Short-form creativity |
| Email subject lines | GPT-5.4 | Higher open rate patterns |
| Technical documentation | Claude Sonnet 4.6 | Accuracy and precision |
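
In an automated pipeline, the table above becomes a simple lookup. A minimal sketch covering part of the table. The dictionary keys and the fallback default are our own illustrative choices.

```python
# Recommendations copied from the table above; keys are illustrative labels.
RECOMMENDED_MODEL = {
    "blog_post": "Claude Sonnet 4.6",
    "pillar_page": "Claude Opus 4.6",
    "meta_title": "GPT-5.4",
    "faq": "Claude Sonnet 4.6",
    "product_description": "GPT-5.4",
    "schema_markup": "Claude Sonnet 4.6",
    "content_brief": "Claude Opus 4.6",
    "location_page": "GPT-5.4-mini",
}


def pick_model(content_type: str, default: str = "Claude Sonnet 4.6") -> str:
    """Return the recommended model, falling back to an assumed default."""
    return RECOMMENDED_MODEL.get(content_type, default)
```

Keeping the mapping in one place makes it easy to update when a new model version changes the recommendation.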

GEO and AI Search Optimization

One emerging factor: which model produces content that AI search engines cite more often?

Generative engine optimization is about writing content that ChatGPT, Google AI Overviews, and Perplexity quote in their responses. Content structure, clarity, and authority signals determine citation rates.

Claude produces cleaner, more quotable passages. Its default output reads like an editorial source. GPT produces more conversational content that reads like advice from a peer. For AI search visibility, Claude’s editorial style matches what AI search engines prefer to cite.

Both models can generate schema markup that helps AI search engines understand your content. But Claude handles Speakable schema, FAQ schema, and complex nested structures with fewer errors. These schema types directly influence whether AI search engines surface your content in voice and text responses.

For AI Overview optimization, the content needs clear definitions, direct answers, and structured data. Claude produces this format more naturally. GPT requires more explicit prompting to achieve the same clarity.

What Neither Model Does Well

Both Claude and GPT share real limitations for SEO content. No amount of prompt engineering fixes these:

- Factual accuracy. Both hallucinate statistics, company names, and dates. Every fact in AI-generated content needs manual verification.

- Original research. Neither model creates new data. They synthesize existing information. For data-driven content, you still need real research.

- Current information. Web access in both models depends on the product and settings you use, and browsing results are inconsistent. Neither replaces Google Search Console data.

- Brand-specific knowledge. Neither model knows your products, customers, or competitive position without being told. The quality of your prompt determines the quality of the output.

- Publishing. Neither model publishes content to your website. You need a CMS integration, a content automation platform, or manual copy-paste.

The most common mistake: treating AI output as finished content. AI-generated content that ranks well is AI-assisted content. A human edited it, verified the facts, added internal links, and optimized the on-page elements.

An Ahrefs study found that pure AI content ranks 23% lower than human-written content on average. But AI-assisted content with human editing performs within 4% of fully human content. The editing step is not optional. It is the difference between content that ranks and content that sits on page 5.

Or you skip all of that and let a done-for-you SEO service handle the entire pipeline.

3,500+ blogs published. 92% average SEO score. We handle the AI, the editing, the images, and the publishing.

FAQ

Is Claude better than ChatGPT for writing blog posts?

For long-form blog content, yes. Claude produces more natural prose, maintains voice consistency across 3,000+ words, and scores lower on AI detection tools. GPT writes faster and handles short-form copy better. The best approach depends on your content length and quality requirements.

Does Google penalize AI-generated SEO content?

No. Google evaluates content quality, not production method. An Ahrefs study found 86.5% of top-ranking content uses AI assistance. Google penalizes “scaled content abuse,” which means mass-producing low-quality pages. Well-edited AI content ranks nearly as well as human-written content.

Which AI model is cheapest for SEO content at scale?

GPT-5.4-mini at $0.15 per million input tokens. For quality-focused content, Claude Sonnet 4.6 at $3.00 per million input tokens offers the best quality-to-cost ratio. Claude’s 90% prompt caching discount makes it even cheaper for repeated workflows.

Can I use Claude and ChatGPT together for SEO?

Yes. Most SEO professionals use both. GPT for keyword research, title generation, and brainstorming. Claude for long-form writing, schema markup, and content audits. The combined cost is $40 per month for both consumer plans.

Which AI writes content that passes AI detection?

Claude produces content with lower AI detection rates across all major detection tools. Originality.ai flags 15-25% of Claude Opus output as AI-generated, compared to 45-65% for GPT-5.4. The gap widens with GPT-4o. Detection rates vary by content type and prompt quality.

Should I use the API or the consumer plan for SEO content?

For fewer than 50 articles per month, the $20 consumer plan on either platform is sufficient. For higher volume, the API gives you more control, lower per-article costs, and the ability to automate workflows. Claude’s API prompt caching makes it particularly cost-effective at scale.

The Claude vs GPT debate for SEO content comes down to one principle. Use Claude when quality matters most. Use GPT when speed and cost matter most. Use both when you want the best results at every stage of your content production pipeline.

Written and published by Stacc. We have published 3,500+ articles across 70+ industries. All data verified against public sources as of March 2026.