GPT Model Updates and Brand Visibility (2026)

GPT model updates shift which brands get cited. Learn how training data changes affect your AI visibility and what to do about it. Updated April 2026.

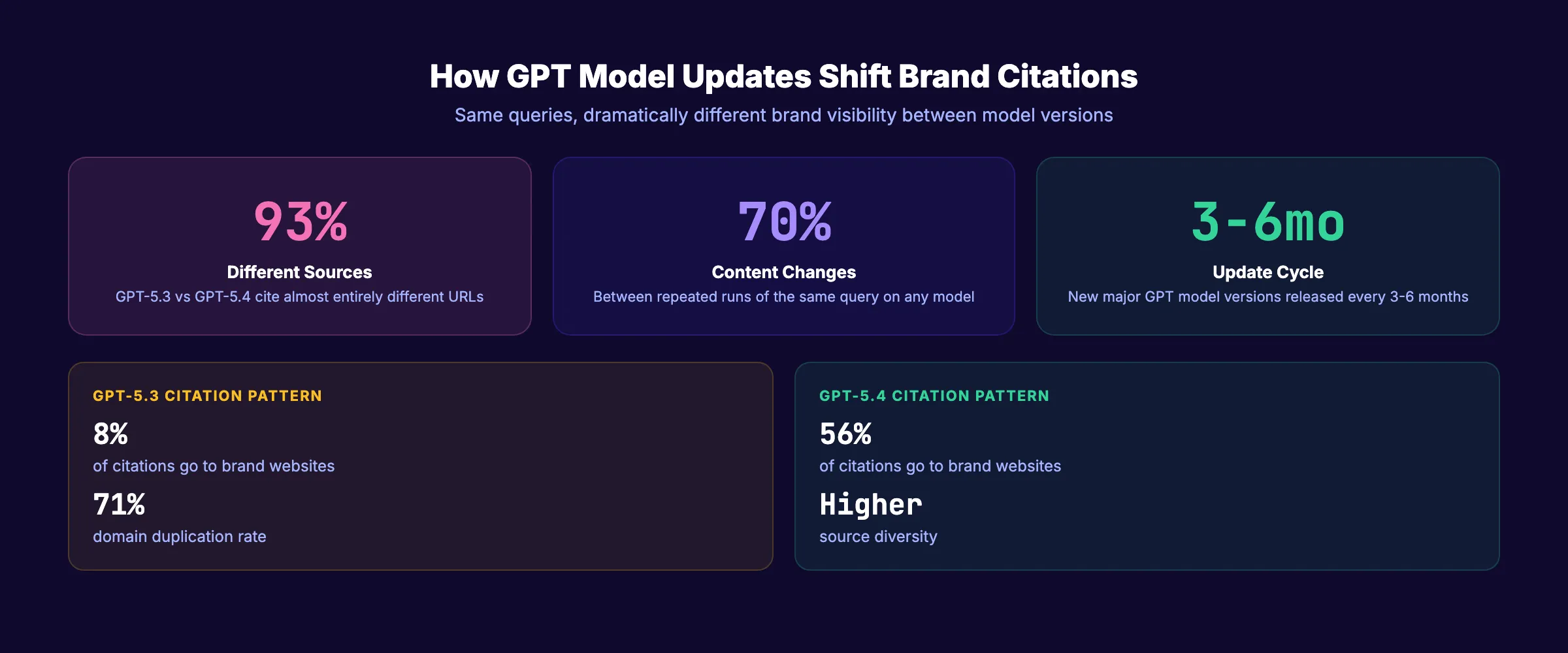

GPT-5.3 and GPT-5.4 cite 93% different sources for the same queries. One model sends users to blog posts about your brand. The other sends them directly to your website. A single GPT model update can shift your brand visibility overnight.

GPT model updates and brand visibility are now inseparable. Every time OpenAI releases a new model version, the training data changes, the citation behavior shifts, and the brands that get recommended can shuffle completely. A brand that appears in 40% of relevant ChatGPT answers today could drop to 5% tomorrow with a model update.

Most businesses do not monitor this. They optimize once, check if ChatGPT mentions them, and move on. Then a model update rolls out and their visibility disappears without warning.

We have published 3,500+ SEO articles across 70+ industries and tracked how model updates across ChatGPT, Gemini, Claude, and Perplexity affect brand citations. This guide explains what happens during GPT model updates, why they affect brand visibility, and how to build a strategy that survives model volatility.

Here is what you will learn:

- What GPT model updates change and why they matter for brand visibility

- How training data cutoffs create visibility windows and blind spots

- The specific citation behavior differences between GPT model versions

- How to build a model-resilient brand visibility strategy

- The monitoring system that catches visibility drops before they hurt revenue

- How to recover when a model update eliminates your brand citations

What Happens During a GPT Model Update

OpenAI releases new GPT model versions every 3 to 6 months. Each update changes 3 things that directly affect which brands get cited.

Training Data Refresh

Every model has a knowledge cutoff date. Content published after that date does not exist in the model’s base knowledge. When OpenAI updates the cutoff, new content enters the model’s awareness and old content gets reweighted.

| Model | Knowledge Cutoff | Release Date |
|---|---|---|
| GPT-3.5 | January 2022 | March 2023 |
| GPT-4 | September 2021 | March 2023 |
| GPT-4o | October 2023 | May 2024 |
| GPT-5.2 | August 2025 | Late 2025 |
| GPT-5.4 | August 2025 | March 2026 |

The cutoff date determines which brands, products, and content the model “knows” from its training data. If your competitor launched a major content campaign in July 2025 and the model cutoff is August 2025, that campaign exists in the model. If your brand went quiet during that same period, the model has little recent content from you to learn from.

Citation Behavior Changes

Different model versions handle citations differently. GPT-5.3 sends users to blog posts about your brand. GPT-5.4 sends them directly to your website. The same query produces 93% different source URLs between the 2 versions.

56% of GPT-5.4 citations go to brand websites. Only 8% of GPT-5.3 citations do. That is a 7x difference in the share of citations that send users straight to your website. The platform is the same; the only variable is which model version the user runs.
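To check figures like these against your own queries, you first need a consistent way to measure them. The sketch below is one possible approach. It assumes you have already collected the cited URLs from each model's answer to the same query. It computes two numbers: the share of citations that point at your domain, and the share of distinct URLs the two models do not have in common (1 minus the Jaccard overlap). The article does not say how the 93% figure was measured, so treat this metric as an assumption. The domains in the example are placeholders.

```python
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Lowercase host of a URL with any leading 'www.' removed."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def brand_share(citations: list[str], brand_domain: str) -> float:
    """Fraction of cited URLs on the brand's own domain or one of its subdomains."""
    if not citations:
        return 0.0
    hits = sum(
        1
        for url in citations
        if domain(url) == brand_domain or domain(url).endswith("." + brand_domain)
    )
    return hits / len(citations)


def url_difference(a: list[str], b: list[str]) -> float:
    """Share of distinct URLs cited by either model but not by both (1 - Jaccard)."""
    set_a, set_b = set(a), set(b)
    union = set_a | set_b
    if not union:
        return 0.0
    return 1 - len(set_a & set_b) / len(union)


# Hypothetical citations collected for one query from two model versions.
older = ["https://blog.example.org/acme-review", "https://news.example.net/acme"]
newer = ["https://www.acme.com/pricing", "https://blog.example.org/acme-review"]

print(brand_share(older, "acme.com"), brand_share(newer, "acme.com"))  # 0.0 0.5
print(round(url_difference(older, newer), 2))  # 0.67
```

Run the same comparison across your whole query battery and average the results. A single query is too small a sample to draw conclusions from.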

Search Integration Updates

ChatGPT now combines base model knowledge with real-time web search. When OpenAI updates its search integration (through Bing), the web sources that appear in ChatGPT answers shift too. A change in search ranking weights or crawling patterns affects which pages ChatGPT surfaces alongside its training data.

This dual-source system means your brand visibility depends on 2 independent channels: training data (what the model learned) and real-time search (what it finds right now). A model update can change either channel or both simultaneously.

How This Differs from Google Algorithm Updates

Google algorithm updates change where you rank in a list. GPT model updates change whether you exist in the answer at all. The difference is binary. Google shifts you from position 3 to position 7. A GPT model update shifts you from “mentioned” to “not mentioned.”

The stakes per update are higher for AI visibility. A position drop in Google loses some clicks. Disappearing from ChatGPT answers loses all AI-referred traffic for that query. This is why monitoring and rapid response to model updates matter more than most businesses realize.

Why Model Updates Create Visibility Volatility

LLM-generated answers are inherently more volatile than traditional search results. Model updates amplify that volatility.

The Probabilistic Nature of LLMs

LLMs do not produce deterministic outputs. Ask ChatGPT the same question twice, and you can get different answers with different brand mentions. Research shows that 70% of AI-generated content changes between repeated runs of the same query. Only 30% of brands remain visible in back-to-back responses.

Model updates change the underlying probability distributions. A brand that had a 60% chance of being mentioned before an update could drop to 10% after. The math changed. Your content did not.
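A single run tells you very little about a probability. A better approach is to run each query several times and estimate a mention rate with an uncertainty range. This is a minimal sketch that assumes you record True or False for whether your brand appeared in each run. It uses the Wilson score interval, a standard choice for proportions from small samples. The article does not prescribe this method, and the run counts below are illustrative.

```python
import math


def mention_rate(runs: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (observed rate, low, high) using the Wilson score interval.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    n = len(runs)
    if n == 0:
        raise ValueError("need at least one run")
    p = sum(runs) / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, center - half_width), min(1.0, center + half_width)


# Illustrative data: 20 runs of the same query before and after an update.
before = [True] * 12 + [False] * 8   # mentioned in 12 of 20 runs
after = [True] * 2 + [False] * 18    # mentioned in 2 of 20 runs

p_before, lo_before, hi_before = mention_rate(before)
p_after, lo_after, hi_after = mention_rate(after)
print(f"before {p_before:.0%} ({lo_before:.0%}-{hi_before:.0%})")
print(f"after  {p_after:.0%} ({lo_after:.0%}-{hi_after:.0%})")
```

Here the two intervals do not overlap, so the drop is unlikely to be run-to-run noise alone. With only 3 or 4 runs per query, the intervals are often too wide to tell.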

Training Data Creates Winners and Losers

Every training data refresh creates a new snapshot of the web. Brands that published heavily in the months before the cutoff date get disproportionate representation. Brands that went quiet during that period lose representation.

This creates a counterintuitive dynamic. Your content velocity in the 6 months before a training data cutoff matters more than your content velocity in the 6 months after. The model only knows what it trained on.

Citation Pattern Shifts

Each model version has different preferences for how it handles citations:

| Citation Behavior | GPT-5.3 | GPT-5.4 |
|---|---|---|
| Brand website citations | 8% of citations | 56% of citations |
| Blog post citations | High | Low |
| Third-party mentions | Moderate | Lower |
| Source diversity | Lower (71% domain duplication) | Higher |
| Direct answer without citation | Common | Less common |

A brand strategy optimized for GPT-5.3 (focus on getting mentioned in third-party blog posts) may underperform on GPT-5.4 (which favors direct brand websites). The optimal strategy must work across model versions.


How to Build a Model-Resilient Brand Visibility Strategy

The brands that maintain LLM visibility across model updates share 5 characteristics. They do not depend on any single model version’s behavior.

1. Consistent Publishing Velocity

Models reward brands with sustained publishing activity. A brand that publishes 30 articles per month for 12 months has deep representation in every training data snapshot. A brand that published 100 articles in 2023 and nothing since appears in older models but fades from newer ones.

Consistent publishing creates redundancy. Even if one training snapshot underrepresents your recent content, the cumulative body of work keeps your brand visible.

2. Multi-Platform Brand Signals

Brands appearing on 4+ platforms are 2.8x more likely to be cited by ChatGPT. Multi-platform presence creates signal redundancy that survives model updates.

If your brand only exists on your website, a single crawl change can eliminate your visibility. If your brand appears across your website, Wikipedia, G2, LinkedIn, industry directories, and press coverage, no single update can remove all signals.

Key platforms for model-resilient visibility:

- Your own website with fresh, structured content

- Wikipedia or Wikidata entity

- Industry review sites (G2, Capterra, Trustpilot)

- LinkedIn company page with regular posts

- Press coverage on authoritative publications

- Reddit and community discussions mentioning your brand

- YouTube with branded content

3. Strong Entity Definition

ChatGPT recommends brands based on entity recognition from training data. When the model clearly understands what your brand is, who it serves, and what makes it different, model updates do not disrupt that understanding.

Weak entity definition: “We help businesses grow.” Strong entity definition: “Stacc publishes 30 SEO articles per month for $99 for local service businesses.”

The specific entity definition persists across model versions. The vague one gets lost in the noise.

Build entity clarity through consistent messaging across all platforms. Use the same language to describe your brand everywhere. Read our guide on building topical authority for the content strategy that reinforces entity recognition.
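Schema.org structured data on your own site is one concrete place to pin down that consistent language. The sketch below assumes a schema.org `Organization` is the right type for your brand. It emits a JSON-LD block whose `description` reuses your exact entity definition and whose `sameAs` list links the other profiles that describe the same brand. How much any given GPT model relies on this markup is an open question, so treat it as a supporting signal. The brand name and URLs are hypothetical.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Render a schema.org Organization as a JSON-LD <script> tag.

    `description` should be the same entity definition you use on every platform;
    `sameAs` points to other profiles of the same organization.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": same_as,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Hypothetical brand and profile URLs, for illustration only.
snippet = organization_jsonld(
    name="Example Plumbing Co",
    url="https://www.example.com",
    description="Example Plumbing Co provides 24/7 emergency plumbing repair for homes in Austin, Texas.",
    same_as=[
        "https://www.linkedin.com/company/example-plumbing-co",
        "https://www.trustpilot.com/review/example.com",
    ],
)
print(snippet)
```

Copy the `description` string word for word into your LinkedIn, review-site, and directory profiles. That way every signal repeats the same entity definition.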

4. Authoritative List Placement

41% of ChatGPT brand recommendations come from authoritative list mentions. “Best of” lists, industry roundups, and comparison articles on third-party sites drive a disproportionate share of AI citations.

List placement survives model updates because the underlying content (the list article) persists in training data. Getting featured on 5 to 10 authoritative list articles creates citation redundancy that no single model update can eliminate.

5. Real-Time Search Optimization

ChatGPT uses real-time web search (through Bing) alongside its training data. Even when training data changes, real-time search can surface your content if your pages rank well in Bing.

Action items for real-time search resilience:

- Submit your site to Bing Webmaster Tools and implement IndexNow

- Maintain strong on-page SEO fundamentals

- Update key pages quarterly with current data

- Add schema markup for AI parsing

- Ensure your content ranks in Bing (not just Google)
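An IndexNow submission is a small JSON POST. The sketch below assumes the shared endpoint `https://api.indexnow.org/indexnow` and the request fields described in the public IndexNow documentation (`host`, `key`, `keyLocation`, `urlList`). The host, key, and URLs are placeholders. The sketch builds the request separately from sending it, so you can inspect the payload first.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Prepare (but do not send) an IndexNow bulk submission.

    The key must also be served as a plain-text file at https://<host>/<key>.txt
    so the search engine can verify you control the site.
    """
    body = {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(req: urllib.request.Request) -> int:
    """Send the request and return the HTTP status (urlopen raises HTTPError on 4xx/5xx)."""
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


# Placeholder host, key, and URLs.
req = build_indexnow_request(
    host="www.example.com",
    key="0123456789abcdef0123456789abcdef",
    urls=["https://www.example.com/pricing", "https://www.example.com/blog/updated-guide"],
)
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
# status = submit(req)  # uncomment to actually send
```

According to the IndexNow documentation, `keyLocation` is optional when the key file sits at the site root. It is included here to make the verification path explicit. Calling this after each quarterly page refresh tells Bing about the updated URLs without waiting for a recrawl.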


The Monitoring System for Model Update Impacts

You cannot fix what you do not measure. Here is the monitoring cadence that catches visibility drops early.

Weekly Spot Checks

Run your top 20 brand queries through ChatGPT every week. Document whether your brand appears, how it is described, and which sources get cited.

| Query Type | Example | Frequency |
|---|---|---|
| Brand name query | “What is [your brand]?” | Weekly |
| Category query | “Best [your category] for [audience]” | Weekly |
| Comparison query | “[Your brand] vs [competitor]” | Weekly |
| Problem query | “How to [solve problem your product addresses]” | Weekly |
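Weekly checks are only comparable if you run the same battery every time and log each result in a fixed shape. This is a minimal sketch that assumes you paste answers in manually or collect them some other way. The brand, audiences, competitors, and problems are hypothetical. The record fields mirror what the weekly check documents: whether the brand appears, how it is described, and which sources are cited.

```python
from dataclasses import dataclass, field


def build_query_battery(brand: str, category: str, audiences: list[str],
                        competitors: list[str], problems: list[str]) -> list[tuple[str, str]]:
    """Expand the four query types from the table into (query_type, query) pairs."""
    battery = [("brand", f"What is {brand}?")]
    battery += [("category", f"Best {category} for {a}") for a in audiences]
    battery += [("comparison", f"{brand} vs {c}") for c in competitors]
    battery += [("problem", f"How to {p}") for p in problems]
    return battery


@dataclass
class SpotCheck:
    """One logged answer from a weekly spot check."""
    week: str            # ISO week label, e.g. "2026-W14"
    model: str           # model version used in ChatGPT when the check ran
    query_type: str
    query: str
    mentioned: bool      # did the brand appear at all?
    description: str = ""                              # how the answer described the brand
    sources: list[str] = field(default_factory=list)   # URLs the answer cited


battery = build_query_battery(
    brand="Acme CRM",
    category="CRM",
    audiences=["dental clinics", "law firms"],
    competitors=["Globex CRM"],
    problems=["track patient follow-ups"],
)
for query_type, query in battery:
    print(query_type, "|", query)
```

Extend the audience, competitor, and problem lists until the battery reaches your top 20 queries. Keep the lists frozen between weeks so changes reflect the model, not the questions.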

Model Update Alerts

Track when OpenAI releases new model versions. Key sources for update alerts:

- OpenAI Model Release Notes (official changelog)

- LLM Changelog by reconnAI (tracks all AI platform updates)

- AI SEO newsletters and industry publications

When a new model version releases, immediately run your full query battery. Compare results against your baseline from the previous version. Document any visibility changes.
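Single answers are noisy. A before/after comparison is more reliable when each query's baseline is a mention rate from several runs rather than one yes/no result. The sketch below assumes you have those rates for the previous and the new model version, keyed by query. The 0.2 threshold for flagging a change is an arbitrary starting point, not a recommendation from this guide, and the data is hypothetical.

```python
def compare_to_baseline(baseline: dict[str, float], current: dict[str, float],
                        min_change: float = 0.2) -> dict[str, list]:
    """Sort queries into dropped / improved / stable by change in mention rate."""
    report: dict[str, list] = {"dropped": [], "improved": [], "stable": []}
    for query in sorted(baseline.keys() & current.keys()):
        delta = current[query] - baseline[query]
        if delta <= -min_change:
            report["dropped"].append((query, round(delta, 2)))
        elif delta >= min_change:
            report["improved"].append((query, round(delta, 2)))
        else:
            report["stable"].append((query, round(delta, 2)))
    # Queries run under only one version cannot be compared yet.
    report["untested"] = sorted(baseline.keys() ^ current.keys())
    return report


# Hypothetical mention rates (share of runs that mentioned the brand).
previous = {"What is Acme CRM?": 0.9, "Best CRM for dental clinics": 0.6,
            "Acme CRM vs Globex CRM": 0.5}
new = {"What is Acme CRM?": 0.85, "Best CRM for dental clinics": 0.1,
       "Acme CRM vs Globex CRM": 0.8, "How to track patient follow-ups": 0.3}

for bucket, items in compare_to_baseline(previous, new).items():
    print(bucket, items)
```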

Monthly Deep Analysis

Run a full visibility audit monthly. Track share of voice, citation accuracy, sentiment, and positioning across ChatGPT, Perplexity, Gemini, and Claude. Compare month-over-month changes.
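Share of voice can be defined several ways. The simple version assumed here counts each tracked brand at most once per answer, then divides by all tracked-brand mentions on that platform. The platforms, brands, and answer data below are hypothetical. In practice, each list would come from your monthly audit runs.

```python
from collections import Counter


def share_of_voice(answers: list[list[str]], brands: list[str]) -> dict[str, float]:
    """Each brand's share of all tracked-brand mentions across a set of answers.

    `answers` holds, per AI answer, the brand names that answer mentioned.
    A brand named twice in one answer still counts once.
    """
    counts = Counter(b for mentioned in answers for b in set(mentioned) if b in brands)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


tracked = ["Acme CRM", "Globex CRM", "Initech CRM"]
audit = {
    "ChatGPT": [["Acme CRM", "Globex CRM"], ["Globex CRM"], ["Acme CRM"], []],
    "Perplexity": [["Globex CRM", "Initech CRM"], ["Globex CRM"]],
}
for platform, answers in audit.items():
    sov = share_of_voice(answers, tracked)
    print(platform, {b: f"{s:.0%}" for b, s in sov.items()})
```

For the month-over-month comparison, store each month's dictionaries and subtract the previous month's values brand by brand.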

For the complete tracking methodology, read our guides on AI search visibility tracking and LLM visibility.

Automated Monitoring Tools

| Tool | What It Tracks | Update Frequency |
|---|---|---|
| Semrush AI Visibility | Brand mentions across AI platforms | Real-time |
| LLMrefs | URL-level citations per model | Daily |
| Otterly | Brand visibility across ChatGPT, Gemini | Weekly |
| AIclicks | Share of voice across AI platforms | Real-time |

How to Recover from a Model Update Visibility Drop

Model updates can drop your brand visibility without warning. Here is the recovery playbook.

Step 1: Diagnose the Cause

Run 50 target queries through the new model version. Compare results against your pre-update baseline. Identify which query categories lost visibility and which sources replaced you.
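The diagnosis step can be sketched as a small aggregation. Each row records a query's category, whether the brand was mentioned before and after the update, and the URLs the new answer cited. The rows below are hypothetical. The function reports the before/after mention rate per query category. It also counts which domains appear in the answers where you lost your mention, a rough proxy for who replaced you.

```python
from collections import Counter, defaultdict
from typing import NamedTuple
from urllib.parse import urlparse


class QueryResult(NamedTuple):
    category: str
    query: str
    mentioned_before: bool
    mentioned_after: bool
    sources_after: list[str]


def diagnose(rows: list[QueryResult], top: int = 5):
    """Per-category (before, after) mention rates, plus domains cited where the brand dropped out."""
    totals: dict[str, list[int]] = defaultdict(lambda: [0, 0, 0])  # [queries, before, after]
    replacements: Counter = Counter()
    for r in rows:
        t = totals[r.category]
        t[0] += 1
        t[1] += r.mentioned_before
        t[2] += r.mentioned_after
        if r.mentioned_before and not r.mentioned_after:
            replacements.update(urlparse(u).netloc for u in r.sources_after)
    rates = {c: (b / n, a / n) for c, (n, b, a) in totals.items()}
    return rates, replacements.most_common(top)


rows = [
    QueryResult("category", "Best CRM for dental clinics", True, False,
                ["https://www.g2.com/categories/crm", "https://globex.example/"]),
    QueryResult("category", "Best CRM for law firms", True, True, []),
    QueryResult("brand", "What is Acme CRM?", True, True, []),
    QueryResult("comparison", "Acme CRM vs Globex CRM", True, False,
                ["https://globex.example/compare"]),
]
rates, replaced_by = diagnose(rows)
print(rates)
print(replaced_by)
```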

Common causes of post-update drops:

- Training data cutoff excluded your recent content

- Citation behavior shifted (blog mentions vs direct website)

- Competitor content entered the new training data

- Entity definition weakened due to inconsistent messaging

Step 2: Refresh Critical Content

Update your top 20 pages with current data, statistics, and examples. Add clear, visible publication and last-updated dates. Models with web search can surface the updated content well before the next training refresh.

Step 3: Amplify External Signals

Publish guest posts, earn press mentions, and generate new reviews on third-party platforms. External signals reach models through both training data and real-time search. The more platforms that mention your brand, the harder it is for a model update to erase your visibility.

Step 4: Monitor Recovery

Track your visibility weekly after implementing recovery actions. Expect 2 to 4 weeks for real-time search improvements to appear. Training data improvements require waiting for the next model update (3 to 6 months).

The recovery timeline depends on the cause. Citation behavior changes (like GPT-5.3 to 5.4) require adjusting your content strategy. Training data gaps require consistent publishing to fill the gap in the next training cycle.

Prevention Is Better Than Recovery

The best defense against model update visibility drops is proactive, not reactive. Build the multi-platform presence and publishing consistency described in the strategy section above. The brands that do this rarely experience dramatic drops because their signal is too distributed for any single update to eliminate.

The brands that experience the worst drops are those with thin content, single-platform presence, and long gaps between publishing. They have a fragile visibility footprint that any model update can disrupt.

For a broader look at how AI search is changing SEO and how to build a resilient strategy across all AI platforms, read our full analysis.

What GPT Model Updates Mean for Your SEO Strategy

GPT model updates add a new dimension to SEO planning. Your content calendar must account for AI model cycles, not just Google algorithm updates.

The Content Compound Effect and Model Resilience

Every article you publish creates another data point in future training datasets. A site with 30 new articles per month generates 360 new data points per year. A site with 2 articles per month generates 24. When the next model trains on web data, which site has stronger representation?

The Content Compound Effect applies directly to model resilience. Consistent publishing creates a deep enough content footprint that no single model update can erase your brand signal. This is the same principle behind generative engine optimization. The brands with the most data points in training data get the most citations.

Planning Around Training Data Cutoffs

When a new model release is announced with a known cutoff date, audit your content published before that date. That content represents your brand in the model. If you published thin, generic content during that window, the model has a weak impression of your brand. If you published deep, authoritative content, the model has a clear, specific picture of what you do.
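A quick way to run that audit is to count what you published in the months before the cutoff and look for quiet months. This is a minimal sketch that assumes you can export publish dates from your CMS. This guide gives cutoffs only to the month (for example, August 2025 for GPT-5.4). The exact cutoff day used below and the 6-month lookback, borrowed from the content-velocity point earlier, are therefore assumptions.

```python
from collections import Counter
from datetime import date


def window_start(cutoff: date, months: int) -> date:
    """Date `months` calendar months before `cutoff` (day capped at 28 so it always exists)."""
    year, month_index = divmod(cutoff.year * 12 + (cutoff.month - 1) - months, 12)
    return date(year, month_index + 1, min(cutoff.day, 28))


def audit_window(published: list[date], cutoff: date, months: int = 6):
    """Articles published after window_start and on or before the cutoff, total and per month."""
    start = window_start(cutoff, months)
    inside = [d for d in published if start < d <= cutoff]
    per_month = Counter(d.strftime("%Y-%m") for d in inside)
    return len(inside), dict(sorted(per_month.items()))


# Hypothetical publish dates exported from a CMS.
dates = [date(2025, 1, 15), date(2025, 3, 1), date(2025, 3, 20),
         date(2025, 8, 31), date(2025, 9, 2)]
total, by_month = audit_window(dates, cutoff=date(2025, 8, 31))
print(total, by_month)
```

Months missing from `by_month` had no new posts. Those gaps are the quiet periods that leave the model with a thin impression of your brand.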

Plan your highest-impact content publishing for the periods most likely to fall within the next training data window. You cannot predict exact cutoff dates, but you can ensure you are always publishing high-quality content so every window captures a strong signal.

The practical execution: publish at least 4 articles per week (roughly 17 per month). Each article strengthens your representation in the next training snapshot. If a training data window spans 3 months, the low end of the release cycle, 30 articles per month puts about 90 new data points from your domain into that window. A competitor publishing 3 articles per month adds 9. The math favors volume.
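The arithmetic behind these comparisons is simple enough to check directly. This sketch assumes a training data window of 3 months, the low end of the 3 to 6 month release cycle. Real gaps between cutoffs vary: the model table lists the same August 2025 cutoff for GPT-5.2 and GPT-5.4. Treat the per-window figures as illustrative.

```python
WEEKS_PER_MONTH = 52 / 12  # about 4.33 weeks in an average month


def per_window(articles_per_month: float, window_months: float) -> float:
    """New articles that land inside one training data window of the given length."""
    return articles_per_month * window_months


cadences = {
    "30 per month": 30,
    "4 per week": 4 * WEEKS_PER_MONTH,
    "3 per month": 3,
    "2 per month": 2,
}
for label, monthly in cadences.items():
    print(f"{label:>12}: {per_window(monthly, 3):5.1f} per 3-month window, "
          f"{per_window(monthly, 12):5.1f} per year")
```

The 12-month column reproduces the 360 versus 24 comparison from the Content Compound Effect section.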

This is the same principle behind the Stacc Stack Method. Blog SEO and Local SEO compound together across both traditional search and AI training data. Every piece of content serves multiple channels simultaneously. When the next model trains on web data, your brand has deeper representation than competitors who publish less frequently.

The answer to “how do I protect my brand visibility across GPT model updates?” is simple. Publish more. Publish consistently. Publish with structure and data. Do this across multiple platforms. The cumulative effect protects you from any single model update.


FAQ

How often does OpenAI update GPT models?

OpenAI releases major model updates every 3 to 6 months and minor updates more frequently. Each major update can shift training data, citation behavior, and brand visibility. Monitor the OpenAI model release notes for announcement dates.

Can a model update eliminate my brand from ChatGPT answers?

Yes. If the new model version changes its citation behavior or reweights its training data, your brand can disappear from answers that previously mentioned you. This is why multi-platform presence and consistent publishing are essential. No single model update can remove all signals if your brand exists across multiple platforms.

How is this different from Google algorithm updates?

Google algorithm updates change how pages rank in a list. GPT model updates change whether your brand exists in AI-generated answers at all. Google shifts position 3 to position 7. GPT model updates shift “mentioned” to “not mentioned.” The stakes per change are higher.

Should I optimize differently for each GPT model version?

No. Optimize for the principles that work across all versions: consistent publishing, strong entity definition, multi-platform presence, and structured content. Chasing model-specific behavior leads to whiplash when the next update changes everything.

How do I know which GPT version users are running?

You cannot. ChatGPT users may run different model versions depending on their subscription tier and settings. Free users often run older models. Plus users run the latest. Optimize for all versions by building broad, consistent signals.

Does Stacc help protect against GPT model update visibility drops?

Stacc publishes 30 optimized articles per month with structured headings, schema markup, and answer-first formatting. Consistent publishing at that volume ensures your brand has strong representation in every training data window. The Content Compound Effect builds model-resilient visibility over time.

GPT model updates are the new algorithm updates for AI search. The brands that publish consistently, build multi-platform signals, and monitor visibility proactively will maintain their citations through every model cycle. The brands that optimize once and stop will lose visibility with the next update.

Written by

Siddharth Gangal

Siddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.
