What Is LLM Visibility? The Complete Guide (2026)

LLM visibility measures how AI models describe and cite your brand. Learn what drives citations, how to track them, and how to improve. Updated April 2026.

Nearly 90% of ChatGPT citations come from URLs ranked position 21 or lower in Google. Brand search volume, not backlinks, is the strongest predictor of whether an AI model mentions your brand. And 70% of AI-generated content changes between repeated runs of the same query.

LLM visibility is the new metric that determines whether your business exists in the AI-first search era. Traditional SEO tells you where you rank on Google. LLM visibility tells you what ChatGPT, Perplexity, Gemini, and Claude say about you when someone asks a question.

The difference matters. Search engine volume is expected to drop 25% by 2026 as users shift to AI assistants. The brands that show up in AI answers capture the attention. The ones that do not become invisible.

We have published 3,500+ SEO articles across 70+ industries and tracked how AI models cite, describe, and recommend brands. This guide explains what LLM visibility is, why it matters, how AI models decide what to cite, and what you can do about it.

Here is what you will learn:

- What LLM visibility means and how it differs from traditional SEO

- The 4 layers of LLM visibility every brand should track

- How AI models choose which sources to cite

- The specific content signals that increase citation likelihood

- How to measure and improve your LLM visibility in 2026

- Which tools track brand mentions across AI platforms

What Is LLM Visibility?

LLM visibility measures how large language models describe, position, and cite your brand when users ask questions. It goes beyond whether your brand appears in an AI response. It captures how the AI frames your brand, what it says about you, and whether it recommends you.

Think of it as your brand reputation inside AI. When someone asks ChatGPT “what is the best project management tool,” the AI does not return a list of links. It generates an answer. It names specific brands. It describes their strengths and weaknesses. It recommends one or two.

That answer shapes purchase decisions. And unlike Google search results where you compete for clicks among 10 blue links, AI responses typically mention only 2 to 7 brands. If you are not one of them, you are invisible.

LLM Visibility vs Traditional SEO

| Factor | Traditional SEO | LLM Visibility |
|---|---|---|
| Goal | Rank on page 1 of Google | Get cited in AI-generated answers |
| Measurement | Position tracking (1-100) | Share of voice across AI models |
| Primary signal | Backlinks and on-page optimization | Brand authority and content depth |
| User behavior | Human clicks through to your site | AI synthesizes answer, user may never visit |
| Content format | Keyword-optimized pages | Fact-dense, extractable passages |
| Competitor scope | 10 results per SERP | 2-7 brands per AI response |
| Volatility | Gradual ranking shifts | 70% of AI content changes between repeated runs of the same query |

The most important difference: traditional SEO ranking explains very little about why a brand gets cited in AI responses. A page can rank number 1 on Google and be completely absent from ChatGPT answers to the same question.

The 4 Layers of LLM Visibility

LLM visibility is not a single metric. It has 4 distinct layers, and each one tells you something different about how AI perceives your brand.

Layer 1: Presence

Does your brand appear in AI responses at all? This is the baseline. Run your target queries through ChatGPT, Perplexity, Gemini, and Claude. Check if your brand gets mentioned.

Presence alone is not enough. A brand can appear in an AI answer as a negative example. But without presence, nothing else matters.

Layer 2: Positioning

How does the AI frame your brand? Is it described as the premium option, the budget pick, the best for beginners, or the enterprise choice? AI models assign positioning based on the language patterns they find across the web.

If your brand is consistently described as “affordable but limited” when you want to be seen as “professional and full-featured,” you have a positioning gap.

Layer 3: Sentiment and Trust

Does the AI recommend your brand with confidence? Or does it hedge? The difference between “Brand X is the best option for small businesses” and “Brand X is sometimes recommended, though results vary” is the difference between a conversion and a lost opportunity.

AI models express confidence based on consistency of signals. Brands with uniform messaging across multiple sources get confident recommendations. Brands with contradictory signals get hedged language.

Layer 4: Narrative Gaps

What is the AI getting wrong about your brand? Missing features, outdated pricing, discontinued products, or inaccurate descriptions all create narrative gaps.

These gaps directly affect conversion. If ChatGPT tells a user your product costs $299 when it actually costs $99, that user moves on. If Gemini says your product lacks a feature you launched 6 months ago, you lose the comparison.

Monitoring narrative gaps is essential for maintaining accurate AI representation. The fix is straightforward: update your public-facing content with current facts. AI systems recrawl the web, and model providers periodically refresh their training data, so the correction propagates within weeks on models that use real-time search (Perplexity, Google AI Mode) and within months on models with less frequent training updates (ChatGPT, Claude).


How AI Models Choose What to Cite

Most businesses assume AI models work like search engines. They do not. Understanding the actual citation mechanism is essential for improving LLM visibility.

Brand Authority Beats Backlinks

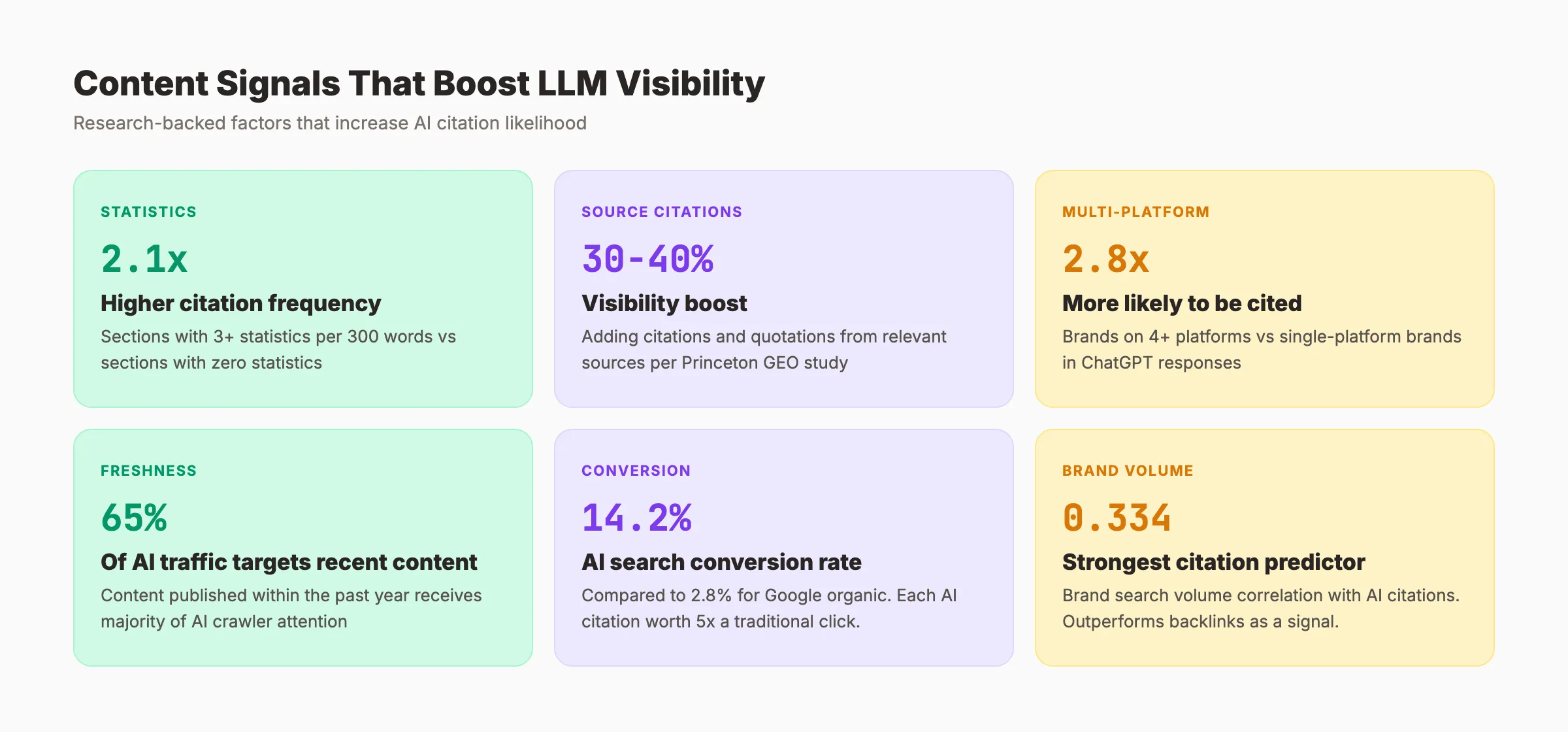

Research shows that brand search volume has a 0.334 correlation with AI citations. That is the strongest predictor measured. Backlinks, the traditional SEO signal, have a much weaker correlation.

Brands that appear on 4+ platforms (their own website, Wikipedia, social media, industry directories, review sites) are 2.8x more likely to appear in ChatGPT responses than brands visible on only 1 platform.

Distributed Presence Builds Citation Confidence

AI models do not trust a single source. They synthesize information from multiple sources. When a model sees your brand mentioned consistently across third-party publishers, customer reviews, community discussions, and professional directories, it builds citation confidence.

A single mention on your own website carries less weight than 10 consistent mentions across external sources. This is why building topical authority across multiple content types and platforms matters more than optimizing a single page.

Content Depth Signals Expertise

LLMs favor content that explains over content that persuades. AI systems look for discrete, extractable facts: specific pricing, named features, documented use cases, and measurable outcomes.

According to the foundational GEO research from Princeton University, adding citations, statistics, and quotations to content can boost source visibility by 30 to 40% in AI-generated responses. Content sections with 3+ statistics per 300 words achieve a 2.1x higher citation frequency than sections with zero statistics.
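The "3+ statistics per 300 words" threshold can be checked roughly in code. The sketch below is a heuristic under a loud assumption: it treats any token containing a digit as a statistic, which will also count years, list numbers, and version strings. The function name `stats_per_300_words` is hypothetical and not part of any tool named in this guide.

```python
import re

def stats_per_300_words(text: str) -> float:
    """Estimate how many numeric 'statistics' appear per 300 words.

    Assumption: any whitespace-separated token containing a digit
    (e.g. "40%", "2.1x", "$99") counts as one statistic.
    """
    words = text.split()
    if not words:
        return 0.0
    stat_count = sum(1 for word in words if re.search(r"\d", word))
    # Scale the raw count to a 300-word window.
    return stat_count * 300 / len(words)

sample = "Adding statistics can boost visibility by 30 to 40% in AI responses."
density = stats_per_300_words(sample)
print(f"{density:.1f} statistics per 300 words")
```

Running this per section rather than per page keeps the number closer to how the guideline is phrased, since the threshold is stated for content sections.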

Freshness Matters

65% of AI bot traffic targets content published within the past year. Only 6% of AI citations reference content older than 6 years. AI models show a documented recency bias.

But there is a nuance. The average domain age of ChatGPT-cited sources is 17 years. AI trusts established domains. The combination of an established domain and fresh content is the strongest citation signal.

This is why consistent publishing matters. A site that published 30 articles last month on an established domain signals both authority and freshness to AI models. A site that published 2 articles 6 months ago does not register as a current authority.

AI Search Traffic Converts Better

Here is a data point most businesses miss. AI search traffic converts at 14.2% compared to Google organic traffic at 2.8%. That makes a visit from AI search worth roughly 5x as much as a traditional organic click.

The math favors LLM visibility. Fewer total visitors from AI search produce more conversions than larger volumes from traditional search. This is because AI users have already processed recommendations and arrive with higher intent.
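The arithmetic behind that claim can be worked through in a few lines. The two conversion rates come from the figures above; the visitor counts (300 and 1,000) are hypothetical numbers chosen only to illustrate the comparison.

```python
AI_CONVERSION_RATE = 0.142       # 14.2%, figure quoted in this guide
ORGANIC_CONVERSION_RATE = 0.028  # 2.8%, figure quoted in this guide

def expected_conversions(visitors: int, rate: float) -> float:
    """Expected number of conversions for a given visitor count and rate."""
    return visitors * rate

# How many times more likely an AI-referred visit is to convert.
conversion_ratio = AI_CONVERSION_RATE / ORGANIC_CONVERSION_RATE

# Hypothetical monthly traffic volumes, for illustration only.
ai_conversions = expected_conversions(300, AI_CONVERSION_RATE)
organic_conversions = expected_conversions(1000, ORGANIC_CONVERSION_RATE)

print(f"Conversion ratio: {conversion_ratio:.2f}x")
print(f"300 AI visits: {ai_conversions:.1f} conversions")
print(f"1,000 organic visits: {organic_conversions:.1f} conversions")
```

Under these assumed volumes, the smaller AI audience still produces more conversions than the larger organic one, which is the point the section makes.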

How to Improve Your LLM Visibility

Improving LLM visibility requires a different approach than traditional SEO. Here are the actions that move the needle, ranked by impact.

1. Build Multi-Platform Brand Presence

AI models evaluate your brand across the entire web, not just your website.

Action items:

- Create and optimize profiles on LinkedIn, X, YouTube, and relevant industry directories

- Ensure your brand has a Wikipedia entry (if eligible) or at minimum a Wikidata entity

- Generate reviews on G2, Trustpilot, Capterra, or industry-specific review platforms

- Earn editorial mentions through guest posts, interviews, and press coverage

- Maintain an active presence in community forums (Reddit, industry Slack groups)

- Optimize your Google Business Profile (AI models pull GBP data)

2. Create Fact-Dense, Extractable Content

AI models cite content they can extract clean facts from. Structure your content for AI consumption.

Action items:

- Lead every section with a direct answer to a specific question

- Include specific numbers, percentages, and data points (3+ stats per 300 words)

- Use clear H2/H3 heading structures that match natural language queries

- Keep paragraphs to 2-3 sentences that can stand alone as citable units

- Add original research, case studies, and first-hand data

- Follow on-page SEO best practices for structure and formatting

For a deeper guide on content structure, read our guide to getting cited in AI search.

3. Implement Technical Foundations for AI Access

AI crawlers need to find and parse your content. Technical barriers block LLM visibility.

Action items:

- Publish an llms.txt file that describes your site structure

- Ensure AI crawlers can access your content through robots.txt

- Add schema markup (Article, Organization, FAQ, Product) to all key pages

- Maintain fast page speeds and clean technical SEO (broken pages reduce trust signals)

- Follow generative engine optimization (GEO) practices
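The robots.txt item above can be verified with Python's standard library. This is a minimal sketch: the crawler tokens in `AI_CRAWLERS` are assumptions based on commonly published user-agent names and should be confirmed against each vendor's current documentation, and the sample robots.txt and URL are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; verify against each vendor's documentation.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended")

def crawler_access(robots_txt: str, url: str, agents=AI_CRAWLERS) -> dict:
    """Report whether each listed crawler may fetch the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}

# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

report = crawler_access(sample_robots, "https://example.com/blog/post")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

With the sample file, GPTBot is reported as blocked and the other three crawlers as allowed. To audit a live site, the same parser can load a real file with `set_url()` and `read()` instead of `parse()`.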

4. Monitor and Correct Narrative Gaps

AI models sometimes get facts wrong about your brand. Actively monitor and correct errors.

Action items:

- Run your brand name through ChatGPT, Perplexity, Gemini, and Claude monthly

- Document any incorrect pricing, features, or descriptions

- Update your website content to clearly state current facts (AI recrawls pick up changes)

- Publish correction content on high-authority platforms where AI sources information

- Use AI search visibility tracking to monitor changes over time


Measuring LLM Visibility: Metrics That Matter

Traditional SEO metrics do not translate to LLM visibility. Here are the 5 metrics that actually matter.

Share of Voice

Percentage of AI answers that mention your brand for target queries. The top-performing brands capture 15% or more share across their core query sets.

How to measure: Run 50 to 100 target queries across ChatGPT, Perplexity, and Gemini. Count how many responses mention your brand. Calculate percentage. Repeat monthly.
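The counting step can be sketched as follows, assuming you have already collected the response texts by hand or through a tracking tool. The function name `share_of_voice` and the brand names in the sample are hypothetical. Matching is a simple case-insensitive whole-word search, so it will miss misspellings and brand names that end in punctuation.

```python
import re

def share_of_voice(responses: list, brand: str) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    mentions = sum(1 for response in responses if pattern.search(response))
    return 100 * mentions / len(responses)

# Hypothetical responses collected for four target queries.
sample_responses = [
    "For small teams, Acme and Globex are solid picks.",
    "Globex is the most popular choice.",
    "Consider Initech or Globex.",
    "acme offers a free tier.",
]

print(f"Share of voice: {share_of_voice(sample_responses, 'Acme'):.0f}%")
```

Because responses vary between runs, it helps to keep the query set fixed from month to month so the percentages stay comparable.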

Citation Frequency

How often AI models cite your specific URLs or content. Different from share of voice because it measures direct references, not just brand mentions.

How to measure: Use tools like LLMrefs, Semrush AI Visibility, or Otterly to track URL-level citations across models.

Sentiment Score

Whether AI describes your brand positively, neutrally, or with hedging language. A confident recommendation converts better than a cautious mention.

How to measure: Analyze the exact language AI uses. “Best option for” and “highly recommended” indicate positive sentiment. “Sometimes suggested” and “results may vary” indicate weak sentiment.
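A phrase-based check is one simple way to apply this at scale. The sketch below uses only the four example phrases from the paragraph above; a real phrase list would need to be much longer, and the labels and function name are assumptions made for illustration.

```python
# Phrase lists taken from the examples above; extend them for real use.
CONFIDENT_PHRASES = ("best option for", "highly recommended")
HEDGED_PHRASES = ("sometimes suggested", "results may vary")

def classify_confidence(response: str) -> str:
    """Label a response as 'positive', 'weak', or 'neutral'."""
    text = response.lower()
    # Hedging is checked first: a hedge undercuts any praise in the same answer.
    if any(phrase in text for phrase in HEDGED_PHRASES):
        return "weak"
    if any(phrase in text for phrase in CONFIDENT_PHRASES):
        return "positive"
    return "neutral"

print(classify_confidence("Brand X is the best option for small businesses."))
print(classify_confidence("Brand X is sometimes suggested, though results may vary."))
```

Checking hedged phrases before confident ones is a deliberate choice here, on the assumption that a cautious qualifier weakens the recommendation even when positive wording is present.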

Positioning Accuracy

Whether AI positions your brand correctly relative to your actual market position. Misalignment between your intended positioning and AI perception is a red flag.

How to measure: Compare what AI says about your brand versus your intended messaging. Document every discrepancy.

Narrative Accuracy

Whether AI states correct facts about your product, pricing, and features. Inaccuracies directly lose potential customers.

How to measure: Run detailed product queries (“how much does [brand] cost?” or “what features does [brand] include?”) and verify accuracy.
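For pricing questions, part of the verification can be automated. This is a rough sketch that assumes prices are written in US dollars with a leading "$" sign; the function names and the sample responses are hypothetical, and features or descriptions still need a manual read.

```python
import re

def extract_prices(response: str) -> list:
    """Pull dollar amounts such as $99 or $1,299.50 out of a response."""
    matches = re.findall(r"\$(\d[\d,]*(?:\.\d+)?)", response)
    return [float(match.replace(",", "")) for match in matches]

def price_errors(response: str, actual_price: float) -> list:
    """Return every quoted price that differs from the actual price."""
    return [price for price in extract_prices(response) if price != actual_price]

# Hypothetical AI response quoting an outdated price.
outdated = "Brand X costs $299 per month."
errors = price_errors(outdated, actual_price=99.0)
print(errors if errors else "No pricing errors found")
```

A non-empty result flags a response worth documenting as a narrative gap. Products with several pricing tiers would need a set of valid prices rather than a single value.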

| Metric | What It Measures | Tracking Frequency | Target Benchmark |
|---|---|---|---|
| Share of voice | Brand mention percentage | Monthly | 15%+ for core queries |
| Citation frequency | Direct URL references | Weekly | Increasing trend |
| Sentiment score | Confidence of recommendation | Monthly | Positive in 80%+ responses |
| Positioning accuracy | Brand perception alignment | Quarterly | 90%+ match to intended positioning |
| Narrative accuracy | Factual correctness | Monthly | Zero critical errors |

LLM Visibility Tools for 2026

Several platforms now track brand visibility across AI models. Here are the categories and what they measure.

| Tool Category | What It Tracks | Example Platforms |
|---|---|---|
| AI visibility trackers | Brand mentions across ChatGPT, Gemini, Perplexity, Claude | Semrush AI Visibility, LLMrefs, Otterly |
| Brand mention monitors | Cross-platform brand mentions including AI | AIclicks, LLM Pulse, AI Search Watcher |
| Citation analyzers | Which URLs AI models reference for specific queries | LLMrefs, Mangools AI Search Watcher |
| Sentiment analyzers | How AI describes your brand (positive/neutral/negative) | LLM Insight, Erlin |

No single tool covers everything. Most businesses use a combination of manual spot-checks (running queries directly in AI models) and automated tracking (through one of the platforms above).

Start with manual checks. Run your top 20 target queries through ChatGPT and Perplexity once per week. Document which brands appear, how they are described, and whether your brand is mentioned. This baseline costs nothing and takes 30 minutes. Add automated tools when you have enough data to justify the investment.
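Once the weekly log exists, it can also show how much the set of named brands shifts between checks. The sketch below compares two logged runs of the same query using Jaccard similarity, which is one reasonable measure among several; the function name and the brand sets are hypothetical.

```python
def brand_overlap(run_a: set, run_b: set) -> float:
    """Jaccard similarity between the brand sets of two runs (1.0 = identical)."""
    union = run_a | run_b
    if not union:
        return 1.0  # Two empty runs are treated as identical.
    return len(run_a & run_b) / len(union)

# Hypothetical brands named for the same query in two weekly checks.
week_1 = {"Acme", "Globex", "Initech"}
week_2 = {"Globex", "Initech", "Umbrella"}

overlap = brand_overlap(week_1, week_2)
print(f"Overlap: {overlap:.2f}")
print(f"Dropped: {sorted(week_1 - week_2)}, added: {sorted(week_2 - week_1)}")
```

A low overlap on a stable query suggests the answer is volatile, so a single check should not be read as a lasting gain or loss.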

For a full breakdown of AI search visibility tracking, including free methods, read our dedicated guide.

What LLM Visibility Means for SEO Strategy

LLM visibility does not replace SEO. It adds a new layer.

Traditional SEO still drives organic traffic from Google. Content marketing strategy still matters. But the businesses that ignore LLM visibility will lose an increasing share of their discovery channel.

Here is the practical impact:

Content velocity matters more. AI models favor fresh content from established domains. Publishing 30 articles per month builds both topical authority and freshness signals. Publishing 2 per month does not generate enough signal density.

Quality per paragraph matters more. AI extracts passages, not pages. Every paragraph needs to contain a citable fact. Filler content that exists only for word count actively hurts LLM visibility because it dilutes your fact density.

Brand consistency matters most. AI models synthesize information from dozens of sources. If your pricing, features, and positioning are inconsistent across your website, review sites, and social profiles, AI hedges its recommendations. Consistency across every public touchpoint is the foundation of LLM visibility.

The Content Compound Effect applies directly to LLM visibility. Every article you publish adds another data point that AI models evaluate. Over time, the compounding signal from consistent publishing builds the citation confidence that makes AI recommend your brand by default.


FAQ

Is LLM visibility the same as GEO?

Related but different. Generative engine optimization (GEO) is the practice of optimizing content to get cited by AI systems. LLM visibility is the metric that measures the result. GEO is what you do. LLM visibility is what you measure.

Can I control what AI says about my brand?

Not directly. AI models synthesize information from multiple sources. But you can influence what they say by ensuring consistent, accurate information across your website, review platforms, social profiles, and third-party mentions. The more consistent your signals, the more accurately AI represents your brand.

Does LLM visibility affect my Google rankings?

LLM visibility and Google rankings are separate systems. But the activities that improve LLM visibility (content depth, brand authority, multi-platform presence) also improve traditional SEO. The overlap is significant. Investing in one improves the other.

How often should I check my LLM visibility?

Run manual spot-checks weekly for your top 20 queries. Conduct a full audit monthly. Review positioning and narrative accuracy quarterly. AI responses change frequently (70% variation between runs), so consistent monitoring is essential.

Which AI model matters most for my business?

It depends on your audience. ChatGPT has the largest user base. Google AI Mode integrates directly with search. Perplexity serves research-heavy users. Track all major models and prioritize based on where your customers ask questions. For most businesses, ChatGPT and Google AI Mode are the starting points.

How long does it take to improve LLM visibility?

Most brands see initial changes within 4 to 8 weeks of implementing consistent content and brand signal improvements. Meaningful shifts in AI perception take 3 to 6 months. The timeline accelerates with higher publishing volume and multi-platform brand building.

LLM visibility is the metric that defines whether your brand exists in AI-generated answers. The shift from search engine rankings to AI citations is already underway. The brands that build citation-worthy content and consistent signals now will capture the traffic that others lose as AI search grows.

Written by

Siddharth Gangal

Siddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.
