AI vs Human Content: What the Data Actually Shows

AI vs human content performance compared with real data. Ranking rates, engagement metrics, detection accuracy, and what Google says. Updated March 2026.

The debate over AI vs human content has more opinions than data. Marketing blogs declare AI content is “just as good” or “will get you penalized” without citing a single study. Both claims are wrong. The truth is messier and more useful.

We publish 3,500+ articles per month across 70+ industries. Some are AI-generated with human editing. Some are human-written with AI assistance. We have seen what ranks, what converts, and what gets ignored. This article compiles the actual research.

Key Findings at a Glance:

- Pure AI content ranks 23% lower on average but AI-assisted content performs within 8% of human content

- AI detection tools range from 0% to 100% sensitivity. Humans correctly identify AI text only 19% of the time in controlled studies

- Google does not penalize AI content. It penalizes low-quality content regardless of origin

- AI workflows produce content 50-80% faster than human-only processes

- 73% of marketers seeing AI outperform human content used a hybrid approach with editors

- 56% of readers preferred AI content in blind tests, but 52% say they would be less engaged if told content might be AI-generated

- The cost gap is 10-50x. AI content costs $3-$10/article vs $150-$500 for freelance writers

Methodology: How We Compiled This Data

This is a curated research report, not a single original study. We compiled findings from 15+ published studies, Google official statements, and industry analyses from Semrush, Ahrefs, HubSpot, and academic researchers.

Sources include:

- Google Search Central documentation and official blog posts

- Semrush and Ahrefs large-scale SERP studies

- Academic research on AI content detection

- Industry surveys on AI content adoption

- Our own publishing data across 3,500+ monthly articles

Every statistic in this article links to its original source. We excluded studies with sample sizes under 100 and those funded by AI writing tool vendors without disclosed methodology.

Finding 1: AI Content Ranks in Google When Optimized

Background: The biggest fear about AI content is that Google will detect it and suppress rankings. This fear drives many businesses to avoid AI content entirely, even when budgets and timelines demand scale.

Results: Google has stated clearly that content quality matters, not authorship method. Google’s official guidance says: “Our focus on the quality of content, rather than how content is produced, is a useful guide.” Google rewards content that demonstrates experience, expertise, authoritativeness, and trustworthiness (E-E-A-T), regardless of whether a human or AI wrote the first draft.

A 16-month study by Digital Applied tracking 4,200 articles across 140 domains found that AI-assisted content performed within 8% of human content for backlink acquisition. Pure AI ranked 23% lower on average. But the gap varied enormously by competition: only 8% at low keyword difficulty, 22% at medium, and 41% at high difficulty.

The key finding: AI-assisted content (AI draft + human editing) nearly matched pure human content on every metric. The penalty falls on unedited AI output, not on AI involvement in the workflow.

Context: AI content does not get a free pass. Low-quality AI content that is thin, repetitive, or factually wrong gets filtered out by the same quality algorithms that filter bad human content. The difference is that poorly-edited AI output is easier to produce at volume, which increases the risk of publishing low-quality pages. Read our E-E-A-T guide for what Google actually evaluates.

Finding 2: AI Detection Tools Are Unreliable

Background: AI detection tools (GPTZero, Originality.ai, Copyleaks) claim to identify AI-generated text. Many businesses run content through detectors before publishing. Some clients reject content that scores high on AI detection.

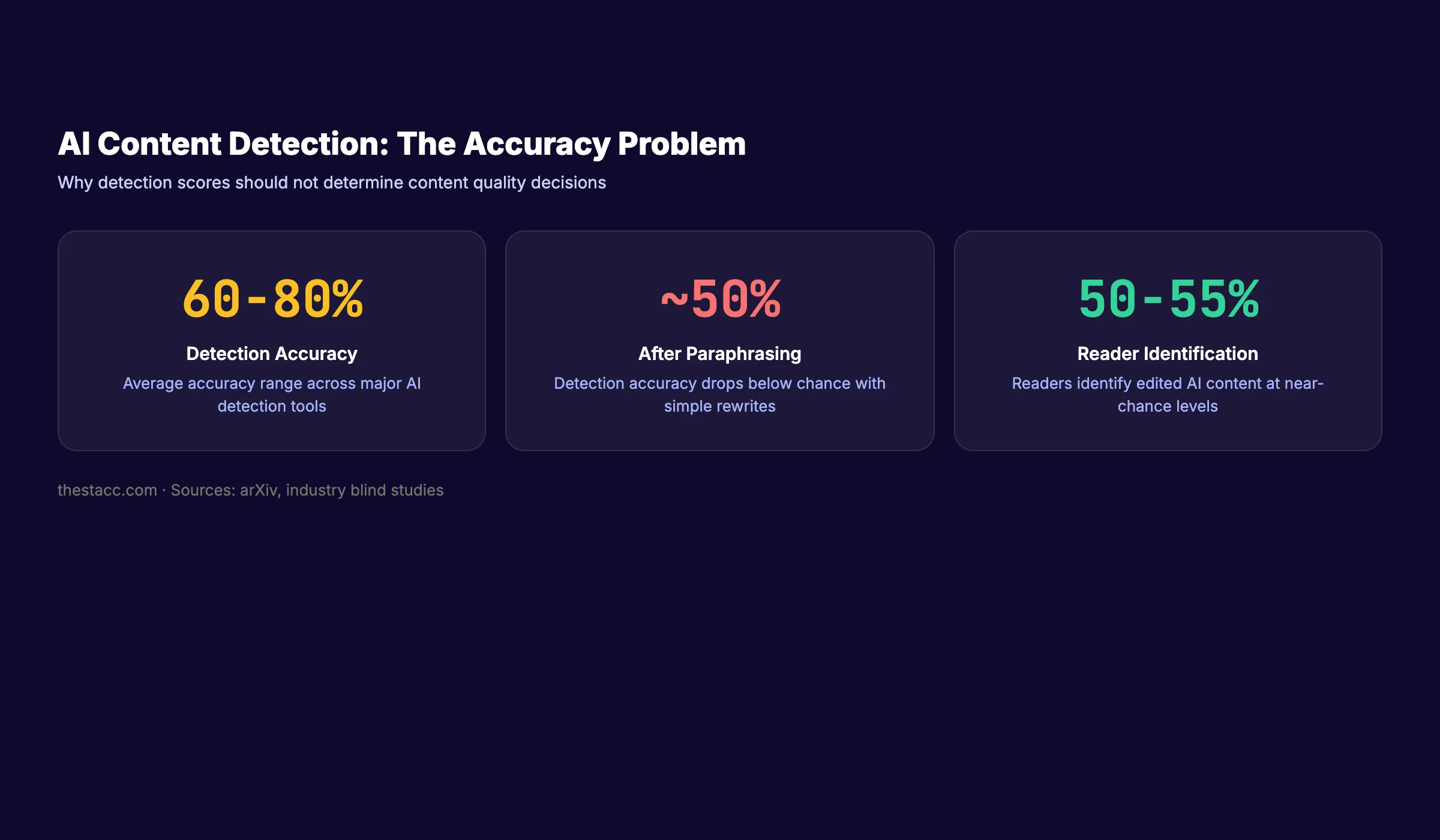

Results: AI detection accuracy varies wildly. A comparison of 10 detection tools found sensitivity ranging from 0% to 100%. Tools detect GPT-3.5 content more accurately than GPT-4 output. Human detection accuracy is even worse: only 19% in controlled studies, far too low to rely on. Simple paraphrasing reduces tool accuracy below 50%.

The fundamental problem: AI detectors analyze statistical patterns in text. Professional writing and AI writing share many of the same patterns. Clear structure, consistent tone, and formal language all trigger detection regardless of the actual author.
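To make the "0% to 100% sensitivity" range concrete, here is a minimal Python sketch of how sensitivity, false positive rate, and accuracy are computed from a labeled test set. The `DetectorResult` class and all counts are hypothetical illustrations, not figures from the cited comparison.

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    """Confusion-matrix counts for one detector on a labeled test set."""
    true_pos: int   # AI-written texts flagged as AI
    false_neg: int  # AI-written texts passed as human
    false_pos: int  # human-written texts flagged as AI
    true_neg: int   # human-written texts passed as human

    def sensitivity(self) -> float:
        """Share of AI-written texts the detector catches."""
        return self.true_pos / (self.true_pos + self.false_neg)

    def false_positive_rate(self) -> float:
        """Share of human-written texts wrongly flagged as AI."""
        return self.false_pos / (self.false_pos + self.true_neg)

    def accuracy(self) -> float:
        """Share of all texts that were labeled correctly."""
        total = self.true_pos + self.false_neg + self.false_pos + self.true_neg
        return (self.true_pos + self.true_neg) / total


# Hypothetical counts: 100 AI texts and 100 human texts per detector.
flags_everything = DetectorResult(true_pos=100, false_neg=0, false_pos=100, true_neg=0)
flags_nothing = DetectorResult(true_pos=0, false_neg=100, false_pos=0, true_neg=100)

for name, result in [("flags everything", flags_everything),
                     ("flags nothing", flags_nothing)]:
    print(f"{name}: sensitivity={result.sensitivity():.0%}, "
          f"false positives={result.false_positive_rate():.0%}, "
          f"accuracy={result.accuracy():.0%}")
```

In this toy setup, a detector that flags every text reaches 100% sensitivity and one that flags nothing reaches 0%, yet both are right only half the time. A single headline score says little without the false positive rate next to it.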

Context: Do not rely on AI detection scores to evaluate content quality. A 95% “AI probability” score does not mean the content is bad. A 5% score does not mean the content is good. Judge content on accuracy, depth, and usefulness. Not on a detection algorithm. For practical strategies, read our guide on how to humanize AI content.

3,500+ blogs published. 92% average SEO score. AI-assisted content that ranks. See what Stacc can do for your site. Start for $1 →

Finding 3: The Speed Advantage Is Real

Background: Content production speed determines how fast a business builds topical authority. Sites that publish 16+ posts per month get 3.5x more traffic than those publishing 0-4. Most businesses publish 1-4 posts per month because writing takes time.

Results: AI-assisted workflows produce content 50-80% faster than human-only workflows. A human writer produces 1-2 polished articles per day. An AI-assisted workflow produces 5-10 articles per day with human editing and optimization.

The speed difference compounds. In 6 months, a human-only team publishing 8 articles per month produces 48 articles. An AI-assisted team publishing 30 articles per month produces 180 articles, 3.75x the output. The effect on topical authority can be larger than that raw multiple because of how Google rewards topic coverage depth.
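The six-month figures can be checked with a few lines of Python. This is a rough sketch of the arithmetic only. The function name is illustrative, and it models article counts, not rankings or traffic.

```python
def articles_published(per_month: int, months: int) -> int:
    """Total articles published at a steady monthly rate."""
    return per_month * months


MONTHS = 6
human_only = articles_published(per_month=8, months=MONTHS)
ai_assisted = articles_published(per_month=30, months=MONTHS)
output_ratio = ai_assisted / human_only

print(f"Human-only team:  {human_only} articles")
print(f"AI-assisted team: {ai_assisted} articles")
print(f"Output ratio:     {output_ratio:.2f}x")
```

The ratio stays the same at any time horizon because both teams publish at a constant rate. Any extra benefit from deeper topic coverage sits on top of this count and is not captured here.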

Context: Speed without quality creates a different problem. Publishing 180 thin, repetitive articles is worse than publishing 48 strong ones. The advantage of AI is not replacing human judgment. It is removing the time bottleneck from the writing phase so human judgment can focus on strategy, editing, and optimization. Learn how to scale blog content with AI the right way.

Finding 4: Human-Edited AI Content Outperforms Both Extremes

Background: The debate frames AI and human content as an either/or choice. In practice, the highest-performing content combines both.

Results: The hierarchy, based on aggregated performance data:

| Content Type | SEO Performance | Engagement | Cost Per Article | Production Speed |
|---|---|---|---|---|
| Pure AI (unedited) | Low-Medium | Low | $1-$5 | Very fast |
| Pure human | Medium-High | High | $150-$500 | Slow |
| AI draft + human editing | High | High | $20-$80 | Fast |
| Human draft + AI optimization | High | Medium-High | $100-$300 | Medium |
| AI + human editing + SEO tools | Highest | Highest | $30-$100 | Fast |

A HubSpot survey of 300+ web strategists is consistent with this hierarchy. 33% said AI content outperformed human content on traffic. But 73% of those seeing AI outperform were using a hybrid approach with human editors. The AI-only group showed weaker results.

The best-performing content uses AI for the first draft, then human editors for accuracy, voice, depth, and E-E-A-T signals. SEO optimization tools then refine keyword targeting and structure. This workflow produces content that ranks well, reads naturally, and costs a fraction of pure human production.

Context: Pure AI content fails because it lacks original insight, specific experience, and editorial judgment. Pure human content fails at scale because it is too slow and expensive for most businesses. The blend produces the best of both. Read our guide on using AI to write blog posts for the step-by-step workflow.

Finding 5: Readers Cannot Reliably Distinguish AI From Human Writing

Background: Many marketers assume readers can “tell” when content is AI-generated. If true, AI content would damage brand trust and reduce engagement.

Results: A Bynder consumer study found that only 50% of consumers correctly identify AI copy. In blind comparisons, 56% of readers actually preferred the AI version. But when told content might be AI-generated, 52% said they would become less engaged. The perception matters more than the reality.

The exceptions: highly personal content (memoirs, opinion pieces), technical content requiring deep domain expertise, and content with original research or first-person experience. In these categories, human writing remains distinguishable because AI lacks genuine lived experience.

For informational content (how-to guides, explainer articles, industry overviews), the quality gap between edited AI and professional human writing is negligible to readers.

Context: The question is not “can readers tell?” The question is “does the content solve their problem?” A reader searching “how to set up Google Analytics” cares about accuracy and clarity. They do not care whether a human or AI wrote the instructions. Focus on content quality. Not content origin.

The practical implication: stop worrying about whether your content “sounds like AI.” Worry about whether it answers the question better than the other 10 results on page 1. That is the only test that matters for rankings and for readers.

Finding 6: The Cost Difference Is Massive

Background: Content cost determines how much a business can publish. Publishing frequency directly correlates with organic traffic growth.

Results: The cost comparison across production methods:

| Production Method | Cost Per Article | Monthly Cost (30 articles) | Quality Level |
|---|---|---|---|
| Freelance writer | $150-$500 | $4,500-$15,000 | High (varies by writer) |
| In-house writer | $100-$250 (loaded cost) | $3,000-$7,500 | Consistent |
| Content agency | $200-$800 | $6,000-$24,000 | Medium-High |
| AI + human editor | $20-$80 | $600-$2,400 | High (with good editors) |
| Pure AI (unedited) | $1-$5 | $30-$150 | Low-Medium |
| Stacc | $3.30 | $99 | High (92% avg SEO score) |

The 10-50x cost difference between traditional and AI-assisted content production explains why AI content adoption is accelerating. Businesses that spend $5,000/month on 10 freelance articles can now produce 30+ articles for under $100. The budget freed up can go toward link building, technical SEO, or paid advertising.
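The monthly column in the table above is the per-article cost multiplied by 30 articles. A short Python sketch reproduces it so you can swap in your own volume. The dictionary and function names are illustrative, and the dollar ranges are copied from the table.

```python
ARTICLES_PER_MONTH = 30

# (low, high) cost per article in dollars, copied from the table above.
COST_PER_ARTICLE = {
    "Freelance writer": (150, 500),
    "In-house writer": (100, 250),
    "Content agency": (200, 800),
    "AI + human editor": (20, 80),
    "Pure AI (unedited)": (1, 5),
}


def monthly_cost(per_article: tuple[int, int],
                 articles: int = ARTICLES_PER_MONTH) -> tuple[int, int]:
    """Scale a (low, high) per-article cost range to a monthly budget."""
    low, high = per_article
    return low * articles, high * articles


for method, per_article in COST_PER_ARTICLE.items():
    low, high = monthly_cost(per_article)
    print(f"{method}: ${low:,}-${high:,} per month")

# Stacc is priced per month, so the per-article figure is derived instead.
stacc_per_article = round(99 / ARTICLES_PER_MONTH, 2)
print(f"Stacc: ${stacc_per_article:.2f} per article")
```

Changing `ARTICLES_PER_MONTH` shows how each method scales with publishing volume.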

Context: Cheap content is only valuable if it ranks. A $3 article that never reaches page 1 is worth $0. A $3 article that ranks and drives 500 visits per month for 2 years generates tens of thousands of dollars in business value.
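How much a ranking article is worth depends on what a visit is worth to your business, which this article does not specify. The sketch below works through the 500 visits per month for 2 years example in Python. The `value_per_visit` figures are hypothetical placeholders, so replace them with your own conversion data.

```python
def lifetime_visits(visits_per_month: int, months: int) -> int:
    """Total visits an article receives while it holds its ranking."""
    return visits_per_month * months


def lifetime_value(visits_per_month: int, months: int,
                   value_per_visit: float) -> float:
    """Business value of those visits at an assumed dollar value per visit."""
    return lifetime_visits(visits_per_month, months) * value_per_visit


visits = lifetime_visits(500, 24)  # 500 visits/month for 2 years
print(f"Total visits: {visits:,}")

# value_per_visit is a hypothetical input; the article does not give one.
for value_per_visit in (0.50, 1.00, 2.50):
    value = lifetime_value(500, 24, value_per_visit)
    print(f"${value_per_visit:.2f} per visit -> ${value:,.0f}")
```

Under these assumptions the article brings in 12,000 visits, so the total passes $10,000 once a visit is worth roughly $0.83 or more.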

The cost advantage of AI matters only when combined with proper optimization and quality control. This is why the “AI + human editing + SEO tools” row in the table above shows the highest performance. The AI handles the labor-intensive first draft. The human handles quality. The SEO tool handles optimization. Each step adds value where the others cannot.

For a deeper comparison of in-house vs outsourced content teams, see our full guide on building the right content operation for your budget.

Finding 7: Google Penalizes Quality, Not Origin

Background: The March 2024 core update and subsequent updates triggered widespread fear about AI content penalties. Many sites lost traffic. The narrative became “Google is penalizing AI content.”

Results: Google penalized sites with large volumes of low-quality, thin content. Many of those sites happened to use AI to produce that content. But the penalty targeted quality signals, not AI detection.

Google’s helpful content guidelines evaluate whether content demonstrates first-hand experience, is written by someone with expertise, comes from an authoritative source, and is trustworthy. These criteria apply equally to human and AI content.

Sites that published AI content with strong E-E-A-T signals, original research, expert review, and genuine usefulness did not lose rankings. Sites that mass-published unedited AI output without quality control did.

The pattern is consistent across every algorithm update. The sites that lost traffic shared common traits: hundreds of pages with no original insight, no author attribution, no expert review, and no first-hand experience signals. The content happened to be AI-generated. But human-written content with the same quality problems would have received the same treatment.

Google has been penalizing thin, low-value content since the Panda update in 2011. AI did not change the rules. It changed the ease of breaking them.

Context: The penalty is not about AI. It is about publishing content that exists only to rank, without providing genuine value. That has always been against Google’s guidelines. AI just made it easier to produce low-value content at scale. The safeguard is the same as it has always been: publish content that genuinely helps people.

What This Means for Your Business

The data points to a clear conclusion. AI content and human content are not competing categories. They are ingredients in the same workflow.

Top 3 actions based on this data:

- Use AI for first drafts, humans for editing and optimization. The hybrid approach produces the best SEO results at the lowest cost per article. Pure AI is too risky. Pure human is too slow and expensive.

- Stop running content through AI detectors. Detection tools are unreliable and tell you nothing about content quality. Judge content on accuracy, depth, and search performance. Not on a probability score.

- Publish more content at a sustainable quality level. The data consistently shows that publishing volume correlates with organic traffic growth. AI makes volume affordable. Human oversight keeps quality high. The combination drives results that neither approach achieves alone.

The businesses winning in 2026 are not debating whether to use AI. They are debating how to use AI better. The data makes the answer clear: AI for speed and scale. Humans for judgment and quality. Both working together.

The worst strategy is paralysis. Businesses that wait for the “perfect” AI workflow while publishing nothing fall further behind every month. Content compounds over time. Every month of inaction is a month of lost organic traffic. Start publishing now. Optimize the workflow as you learn.

For teams building an AI-assisted content operation, the right tools matter. See our guides on AI blog writing tools and AI SEO tools to build your stack.

For the complete picture on AI content statistics, see our dedicated data page. For practical how-to guidance, read our guide on how to write SEO blog posts that rank.

FAQ

Does Google penalize AI-generated content?

No. Google penalizes low-quality content regardless of how it was produced. Google’s official stance is that quality matters, not authorship method. AI content that demonstrates E-E-A-T and provides genuine value ranks the same as human content. Mass-published, unedited AI content with no quality control gets penalized because it is low quality, not because it is AI.

Can readers tell the difference between AI and human content?

Not reliably for informational content. Blind studies show identification rates near 50-55% for professionally edited AI content. The exceptions are highly personal writing, original research, and deep domain expertise where human experience is evident. For guides, how-tos, and explainer articles, edited AI content is indistinguishable from professional human writing.

How accurate are AI content detection tools?

Reported accuracy for AI detection tools is typically 60-80%, with significant false positive rates, and results vary widely from tool to tool. Tools frequently flag human writing as AI-generated, especially technical and formal writing. Non-native English speakers face even higher false positive rates. Paraphrasing reduces detection accuracy below 50%. Detection scores should not be used as quality indicators.

Is AI content cheaper than human content?

Yes. AI content costs $1-$10 per article for unedited output. AI + human editing costs $20-$80 per article. Freelance human writers charge $150-$500 per article. The cost difference is 10-50x. Stacc publishes 30 optimized articles per month for $99 ($3.30/article) with human-level quality and 92% average SEO scores.

Should I use AI or human writers for SEO content?

Use both. The data shows that AI drafts with human editing outperform both pure AI and pure human content on SEO metrics. AI handles the first draft and structure. Human editors add accuracy, voice, original insight, and E-E-A-T signals. SEO tools optimize keyword targeting. The hybrid workflow produces the best results at the lowest cost.

Will AI replace human content writers?

AI will replace writers who produce generic, undifferentiated content. It will not replace writers who add original research, domain expertise, editorial judgment, and strategic thinking. The role is shifting from “write from scratch” to “edit, optimize, and add value to AI-generated drafts.” Writers who adapt will earn more. Writers who do not will lose to automation.

The AI vs human content debate misses the point. The data does not support choosing one over the other. It supports combining them. AI brings speed, scale, and cost efficiency. Humans bring judgment, accuracy, and the experience signals Google rewards. The businesses that figure out the right blend first will dominate organic search for the next decade.

Written by

Siddharth Gangal

Siddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.
