LLM Optimization for SEO: The Complete Guide (2026)

Master LLM optimization for SEO with this 8-chapter guide. Covers the passage framework, AI citation tactics, platform strategies, and a 90-day plan. Updated 2026.

Your content ranks on page 1 of Google. ChatGPT still has never mentioned it.

That disconnect is the defining SEO problem of 2026. AI traffic grew 527% between January and May 2025. ChatGPT now processes 1 billion weekly searches. But ranking on Google and getting cited by an LLM require entirely different strategies.

The stakes are high. AI-referred visitors convert at 4.4 times the rate of organic search visitors. LLM referrals deliver approximately 18% conversion rates, the highest of any discovery channel. Every week your content sits outside AI citations, a competitor captures that high-intent traffic instead.

We have published 3,500+ SEO articles across 70+ industries with a 92% average SEO score. We track how AI models cite and recommend content at scale. This guide covers exactly how to optimize for LLM search. Every tactic integrates with the SEO workflow you already have.

Here is what you will learn:

- Why Google rankings and LLM citations measure completely different things

- How AI crawlers find, extract, and cite your content

- The 3-layer LLM visibility framework every SEO team should implement

- The exact content tactics that increase citation frequency by up to 40%

- Platform-specific strategies for ChatGPT, Perplexity, Gemini, and Claude

- How to measure LLM performance when most citations leave no analytics trail

- A 90-day action plan to build LLM visibility from scratch

Chapter 1: What Is LLM Optimization for SEO, and Why Rankings Are Not Enough {#ch1}

LLM optimization for SEO is the practice of structuring content so large language models cite it in AI-generated answers. It extends traditional search engine optimization into the AI discovery layer where ChatGPT, Perplexity, Gemini, and Claude now operate.

The market shift is no longer a forecast. According to the Previsible 2025 State of AI Discovery Report, ChatGPT alone handles 1 billion weekly searches. Claude and Copilot citations grew 12.8x and 25.2x year-over-year, respectively. Semrush projects that AI search visitors will surpass traditional search visitors before 2028.

Why Google Page 1 Does Not Guarantee LLM Citations

76% of URLs cited by AI systems also rank in Google’s top 10. Strong traditional SEO builds the foundation. But 80% of LLM citations do not appear in Google’s top 100.

Two distinct discovery pools exist. Users who find you via Google and users who encounter you via AI answers are often finding entirely different content. Optimize only for Google, and you are invisible to a fast-growing channel.

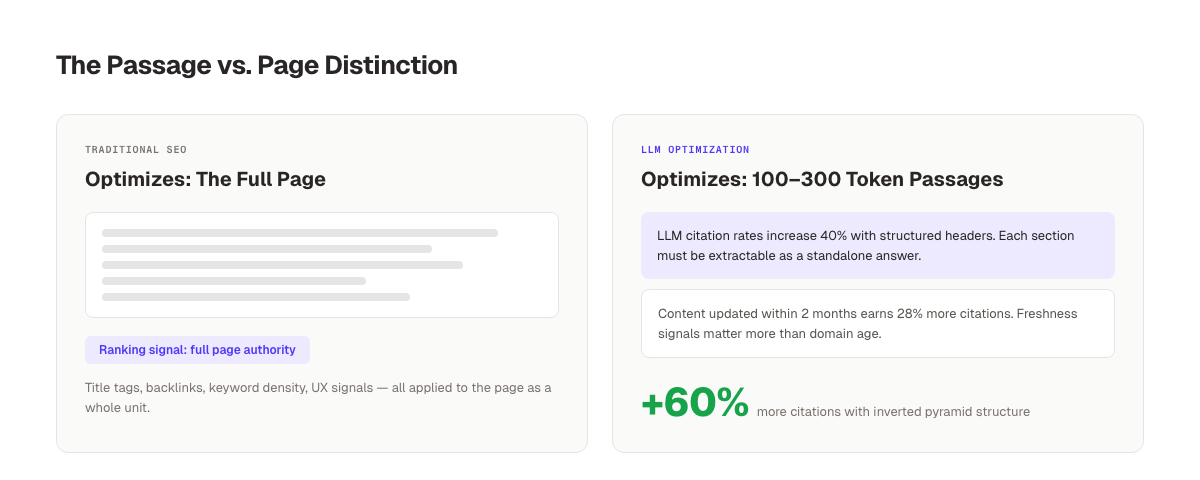

The Passage vs. Page Distinction

Traditional SEO optimizes the entire page for rankings. LLM optimization targets a different unit: the passage.

A passage is a self-contained, 100-300 token block of text that directly answers a question. When ChatGPT processes your content, it does not read your page as a whole. It extracts specific passages that match the user’s query and synthesizes a response from those fragments.

A single 3,000-word blog post contains 10-15 extractable passages. Each passage can be cited for a different question. This is why high-ranking pages get ignored while lower-ranked pages get cited. The top-ranking page was optimized for keywords; the cited page was optimized for answerable passages.

Traditional SEO vs. LLM Optimization vs. GEO

| Dimension | Traditional SEO | LLM Optimization | GEO |
|---|---|---|---|
| Primary goal | Rank in Google SERPs | Get cited in AI answers | Visibility across all AI surfaces |
| Discovery surface | Google, Bing | ChatGPT, Perplexity, Gemini, Claude | All of the above |
| Content unit | Full page | 100-300 token passage | Varies by platform |
| Success metric | Click-through rate | Citation frequency | Brand mentions + citations |
| Primary signals | Backlinks + keywords | Semantic clarity + authority | All signals combined |
| Refresh cycle | Weekly-monthly | 30/90/180 days | Continuous |

For a full breakdown of generative engine optimization and its relationship to traditional SEO, read our dedicated GEO guide. For a foundational look at what GEO is versus SEO, start there first.

Chapter 2: How LLMs Find and Cite Your Content {#ch2}

Understanding how LLMs retrieve information determines which optimization tactics work. Two distinct mechanisms drive every citation.

Training Data vs. Live Retrieval

Training data: Every LLM is trained on a large corpus of web content captured before a knowledge cutoff date. Content from this corpus shapes the model’s base knowledge. If your brand, product, or expertise appears frequently in that training data, the model treats you as authoritative by default.

Live retrieval (RAG): Most modern AI search products (ChatGPT search, Perplexity, Google AI Overviews) use Retrieval-Augmented Generation. They crawl the live web in real time, pull relevant passages, and synthesize them into answers. This is where LLM optimization directly applies.

For live retrieval, your content must be crawlable, extractable, and semantically clear. The model cannot synthesize what it cannot access.

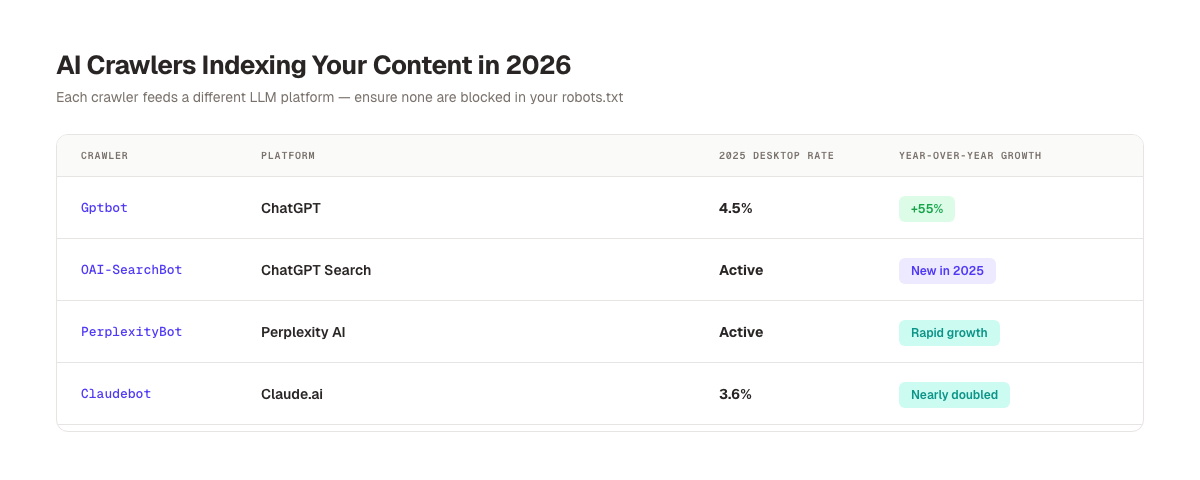

The AI Crawler Ecosystem

Five crawlers dominate AI content retrieval. Each serves a different platform:

| Crawler | Platform | 2025 Desktop Crawl Rate | Growth vs. 2024 |
|---|---|---|---|
| GPTBot | ChatGPT | 4.5% | +55% |
| OAI-SearchBot | ChatGPT Search | Active | New in 2025 |
| PerplexityBot | Perplexity | Active | Rapid growth |
| ClaudeBot | Claude.ai | 3.6% | Nearly doubled |
| CCBot | Common Crawl / LLM Training | 3.5% | +30% |

Sites loading under 2 seconds are crawled 5 times more frequently than slower sites. If your server response time is slow, AI crawlers skip you.

Make sure none of these crawlers are blocked in your robots.txt. Many sites accidentally block them when using blanket bot rules. A dedicated llms.txt guide explains how to structure explicit LLM access signals on your domain.
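A quick script can catch the blanket-rule problem before it costs you citations. The sketch below uses Python's standard `urllib.robotparser` and the five user-agent tokens from the crawler table. The sample robots.txt and the `example.com` URL are hypothetical, and real crawlers may interpret edge cases differently than this parser, so treat the result as a first-pass check.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the five crawlers listed in the table above.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "CCBot"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawlers that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt: a blanket rule that blocks every bot except Googlebot.
sample = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

print(blocked_ai_crawlers(sample))
```

For the sample above, all five crawlers are reported as blocked, because none of them matches the Googlebot group and the wildcard group disallows everything.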

The 100-300 Token Extraction Mechanism

When a live-retrieval LLM processes your content, it identifies the most relevant passage for the user’s query. That passage is typically 100-300 tokens, or roughly 75-225 words.

The passage must be self-contained. It should state a claim, support it, and reach a conclusion within those 75-225 words. A passage that starts “As we mentioned in the previous section…” gets skipped. A passage that begins “Search intent falls into 4 categories: informational, navigational, commercial, and transactional” gets cited.
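The two checks above (length and self-containment) can be roughed out in code. This is a minimal sketch, assuming the 0.75 words-per-token ratio implied by "100-300 tokens, roughly 75-225 words"; real tokenizers vary. The function names and the short list of back-reference openers are illustrative, not part of any tool.

```python
import re

# Ratio implied by "100-300 tokens, roughly 75-225 words"; an approximation only.
WORDS_PER_TOKEN = 0.75

# Openers that point back at earlier text, so the passage cannot stand alone.
# Illustrative, not exhaustive.
BACK_REFERENCES = re.compile(
    r"^(as (we )?(mentioned|discussed|noted)|as shown (above|earlier)|in the previous section)",
    re.IGNORECASE,
)


def estimate_tokens(text: str) -> int:
    """Estimate the token count from the whitespace-separated word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def passage_issues(text: str) -> list[str]:
    """Return reasons this passage may be skipped; an empty list means none found."""
    issues = []
    tokens = estimate_tokens(text)
    if not 100 <= tokens <= 300:
        issues.append(f"estimated {tokens} tokens, outside 100-300")
    if BACK_REFERENCES.match(text.strip()):
        issues.append("opens with a back-reference")
    return issues


print(passage_issues("As we mentioned in the previous section, intent matters."))
```

The sample sentence fails both checks: it is far too short, and it opens by pointing at an earlier section.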

According to Search Engine Land’s citation analysis, 44.2% of all LLM citations come from the first 30% of an article. Front-load your most citable passages.


Chapter 3: The 3-Layer LLM Visibility Framework {#ch3}

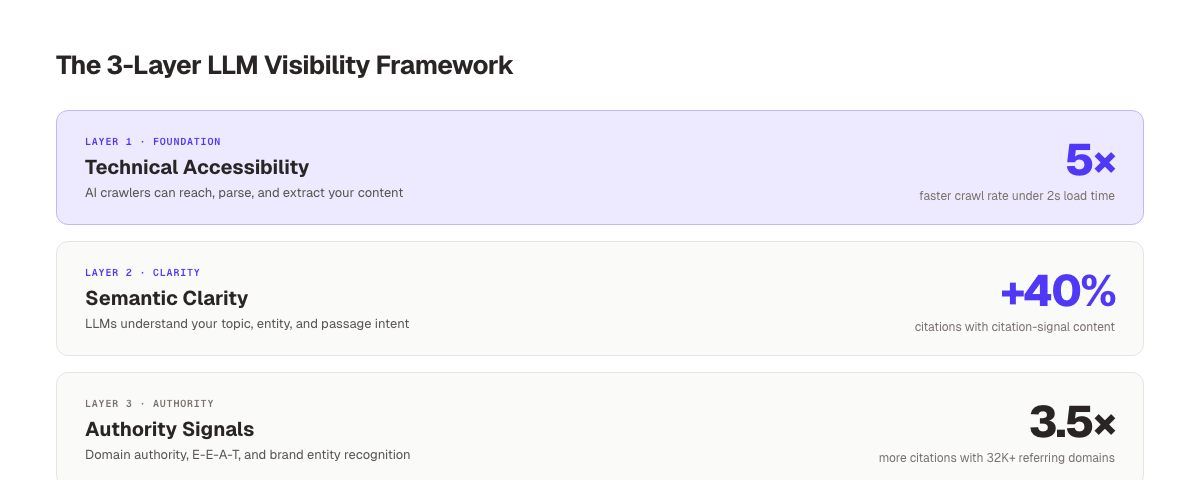

LLM visibility requires optimization across 3 distinct layers. Skip any one, and the other 2 cannot compensate.

Layer 1: Technical Accessibility

Before any content optimization, LLMs must be able to crawl and parse your site. Technical failures block every downstream effort.

Key requirements:

- Allow AI crawlers in robots.txt (GPTBot, OAI-SearchBot, PerplexityBot, ClaudeBot, CCBot)

- Use server-side rendering or static site generation. JavaScript-rendered content is frequently skipped

- Achieve sub-2-second server response times

- Add an llms.txt file at your domain root to give AI models a structured content map

- Use clean semantic HTML with proper H1-H3 hierarchy

If your site relies heavily on client-side JavaScript for rendering, AI crawlers often see an empty page. This is the most common reason high-quality content gets zero LLM citations. Learn how to create an llms.txt file for step-by-step setup.
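For orientation, here is a rough sketch of what generating an llms.txt file could look like. It assumes the layout described in the community llms.txt proposal as we understand it: an H1 site name, a blockquote summary, then H2 sections containing markdown link lists. The `build_llms_txt` helper, the section name, and the `example.com` URL are all hypothetical.

```python
def build_llms_txt(
    site_name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Build llms.txt content: H1 title, blockquote summary, H2 sections of links.

    `sections` maps a section heading to (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical content map for illustration.
content = build_llms_txt(
    "Example Site",
    "Guides on LLM optimization for SEO.",
    {
        "Guides": [
            (
                "LLM Optimization Guide",
                "https://example.com/llm-optimization",
                "Eight-chapter overview",
            ),
        ],
    },
)
print(content)
```

Save the output as `llms.txt` at the domain root, and regenerate it whenever you publish or retire a key page.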

Layer 2: Semantic Clarity

LLMs select passages based on semantic relevance. Your content must communicate its topic with unambiguous clarity.

Semantic clarity requires:

- Consistent entity naming. Use the same name for your product, company, or topic throughout

- Clear question-answer structures (each H3 poses an implicit question, the paragraph answers it)

- Specific, verifiable claims (“73% of B2B websites,” not “most companies”)

- Passage-level completeness. Every block of 2-4 paragraphs answers a full question without external context

Generic writing fails semantic clarity tests. “Content marketing helps businesses grow” tells an LLM nothing. “Publishing 30+ optimized articles per month drives a compounding 40% organic traffic increase within 12 months” gives the model a citable claim. For more on how AI search engines cite sources, read our deep-dive analysis.

Layer 3: Authority Signals

LLMs do not cite content they distrust. Authority is built from 3 overlapping signals.

Domain authority: Sites with 32,000+ referring domains are 3.5 times more likely to be cited by ChatGPT than sites with fewer than 200. Traditional link building for topical authority directly feeds LLM authority.

E-E-A-T signals: Experience, Expertise, Authoritativeness, Trustworthiness. Google’s quality signals map directly to LLM citation behavior. Named authors, publication dates, cited sources, and original data all increase citation probability.

Brand entity strength: LLMs are more likely to cite brands they recognize from training data. Building your brand entity in SEO through consistent mentions across the web creates the recognition that drives citation frequency.

Chapter 4: Core Content Tactics That Drive LLM Citations {#ch4}

Research across thousands of AI citations reveals which content patterns get extracted most. These are not theories. They are measurable content signals.

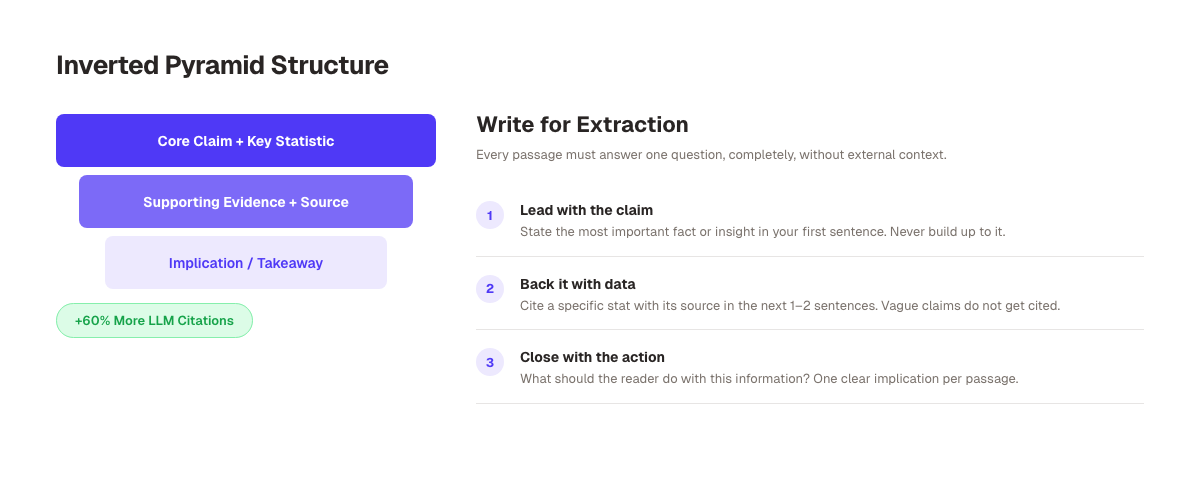

Use the Inverted Pyramid Structure

Journalistic writing puts the most important information first. LLM optimization demands the same approach. Content structured with the inverted pyramid receives 60% more citations than content that builds to a conclusion.

Open every section with your core claim. Support it with data in the next 2-3 sentences. Close with the actionable implication. Do not save the insight for the end of the section.

Weak: “There are many factors that affect how search engines work. Traditional signals like backlinks and keywords still matter. But in 2026, AI search has added new dimensions to visibility…”

Strong: “LLM citation rates increase 40% when content uses structured headers and bullet points. The model needs clear hierarchical signals to identify extractable passages. Pages without this structure get skipped regardless of domain authority.”

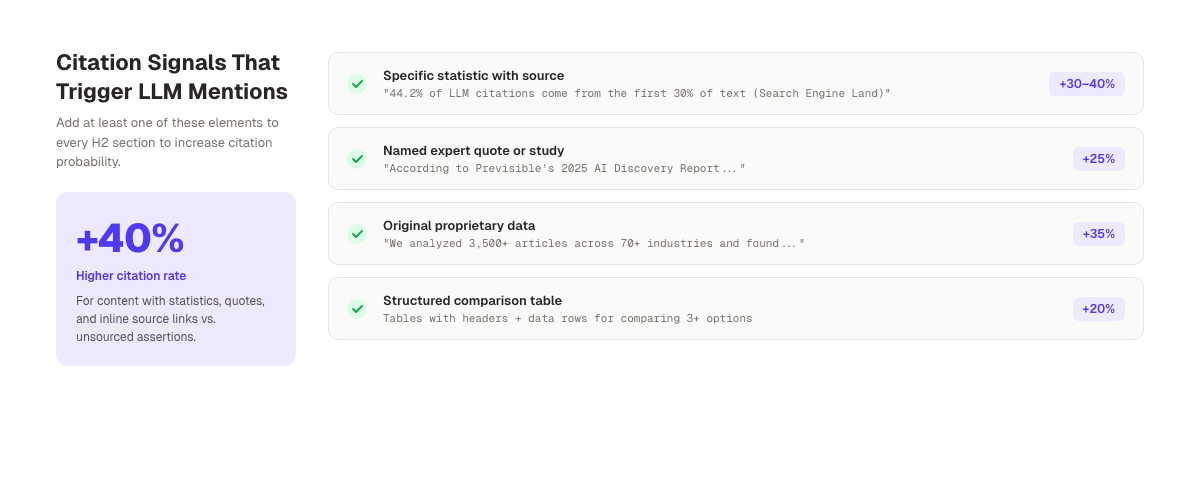

Add Citation Signals to Every Section

Content with statistics, quotes, and external citations gets cited 30-40% more often in LLM responses. Every H2 section should contain at least 1 of these elements:

- A specific statistic with a source attribution (not just the number, but where it came from)

- A direct quote from a named expert or study

- A link to an authoritative source (Google documentation, Ahrefs studies, academic research)

- Original data from your own research or analysis

LLMs function like researchers. They prefer to cite content that itself cites credible sources. A page with 5 inline citations to authoritative sources is more citable than a page of unsourced assertions, even if the unsourced page ranks higher on Google.

For current data on AI search statistics and citation behavior, reference our dedicated statistics post when building your link plan.

Optimize Paragraph Length

Paragraphs of 3-5 sentences are optimal for LLM extraction. Shorter paragraphs lack enough context. Longer paragraphs make it harder for the model to isolate the key claim.

Each paragraph should form a complete thought. Start with a claim, provide evidence, state the implication. The model extracts passages at the paragraph level, so every paragraph must be self-sufficient.

Avoid paragraphs that span topics. A paragraph about content freshness should not transition into a comment about schema markup. Each paragraph answers exactly 1 question.
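The 3-5 sentence guideline is easy to audit in bulk. The sketch below is one rough way to do it, assuming paragraphs are separated by blank lines. The sentence splitter is deliberately naive (abbreviations and decimals such as "4.4 times" can cause miscounts), and both function names are hypothetical.

```python
import re


def sentence_count(paragraph: str) -> int:
    """Count sentences by splitting after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([part for part in parts if part])


def paragraphs_outside_range(text: str, low: int = 3, high: int = 5) -> list[tuple[int, int]]:
    """Return (paragraph_index, sentence_count) for paragraphs outside low..high."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = sentence_count(paragraph)
        if not low <= count <= high:
            flagged.append((index, count))
    return flagged


draft = "One claim. One piece of evidence. One implication.\n\nOnly one sentence here."
print(paragraphs_outside_range(draft))
```

In the sample draft, only the second paragraph (index 1) is flagged, because it contains a single sentence.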

Schema Markup as an Explicit AI Signal

Pages with thorough schema markup are cited up to 40% more frequently by LLMs. Schema communicates structured information that HTML alone does not. LLMs parse schema to understand:

- What type of content this is (Article, HowTo, FAQ)

- What questions it answers (FAQPage schema)

- What entity it describes (Organization, Person, Product)

- When it was published and updated (datePublished, dateModified)

At minimum, add Article schema with author, publisher, datePublished, and dateModified. Add FAQPage schema to every article with a FAQ section. Use HowTo schema for step-by-step guides. The structured data for AI search guide covers the full schema implementation for LLM visibility. You can also generate schemas with our free Schema Markup Generator.
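As a starting point, the minimum Article schema described above can be generated as JSON-LD. This is a sketch, not a complete implementation: the `article_schema` helper is hypothetical, the dates are placeholders, and a production schema usually carries more properties (image, URL, and so on).

```python
import json
from datetime import date


def article_schema(
    headline: str, author: str, publisher: str, published: date, modified: date
) -> str:
    """Return minimal schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# The dates below are placeholders for illustration.
print(
    article_schema(
        "LLM Optimization for SEO: The Complete Guide (2026)",
        "Siddharth Gangal",
        "theStacc",
        date(2026, 1, 5),
        date(2026, 2, 1),
    )
)
```

Embed the output in a `<script type="application/ld+json">` tag in the page head, and update `dateModified` on every content refresh.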

Maintain Aggressive Content Freshness

Pages updated within the past 2 months earn 28% more citations than stale content. 40-60% of LLM citations change every month as models refresh their live retrieval pools.

This means content decay is fast. An article earning citations in January may lose them by March if a fresher, better-structured article enters the indexing pool.

Maintain a content refresh cycle:

- 30-day review: Update statistics, add recent examples, fix broken links

- 90-day review: Add new sections based on emerging questions, expand thin sections

- 180-day review: Full structural audit. Restructure passages for current citation patterns
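The 30/90/180-day cycle above can be turned into a simple scheduling check. The sketch below assumes you can export a last-updated date for each article; the `review_due` function and the tier labels are hypothetical shorthand for the three reviews.

```python
from datetime import date
from typing import Optional

# Thresholds in days, deepest review first, following the cycle above.
REVIEW_TIERS = [
    (180, "180-day structural audit"),
    (90, "90-day expansion review"),
    (30, "30-day stats and links review"),
]


def review_due(last_updated: date, today: date) -> Optional[str]:
    """Return the deepest review tier the article has aged into, or None."""
    age_days = (today - last_updated).days
    for threshold, label in REVIEW_TIERS:
        if age_days >= threshold:
            return label
    return None


print(review_due(date(2026, 1, 1), date(2026, 2, 15)))
```

Running this across a content inventory gives a prioritized refresh queue: articles that have aged into the 180-day tier get the full structural audit first.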

Freshness signals in AI search work differently than Google’s freshness algorithm. Read our guide to freshness signals in AI search for the specific signals LLMs weight most heavily.

Chapter 5: Platform-Specific LLM SEO Strategies {#ch5}

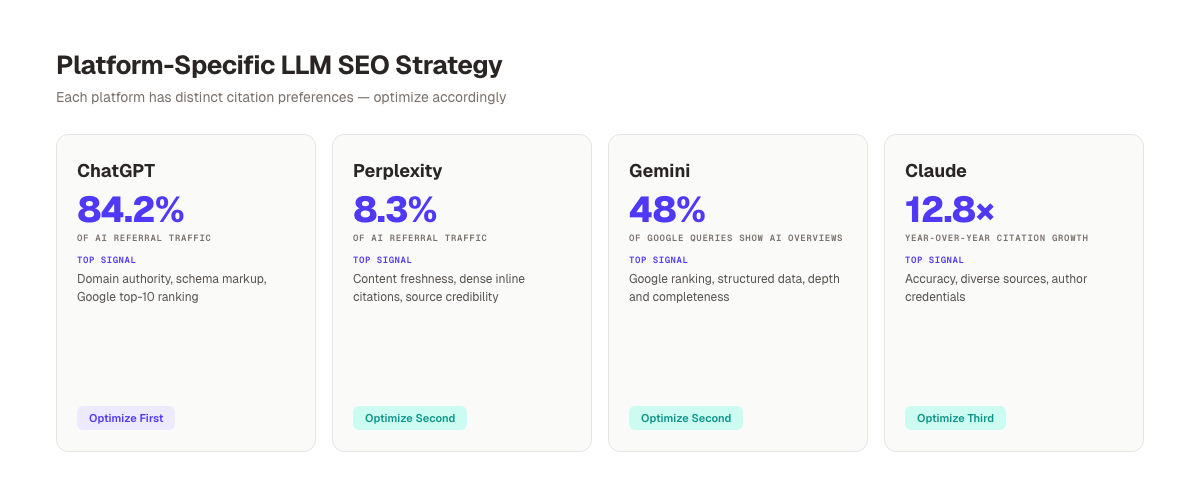

ChatGPT, Perplexity, Gemini, and Claude each have distinct citation behaviors. Treating them as identical wastes your optimization effort.

ChatGPT: Prioritize Traditional SEO Authority

ChatGPT drives 84.2% of AI referral traffic and grew 3.26 times year-over-year. It is the highest-priority platform for most content teams.

ChatGPT’s citation behavior correlates strongly with traditional SEO signals. There is a 0.65 correlation between Google page 1 ranking and ChatGPT citations. Domain authority, backlink count, and E-E-A-T signals matter more on ChatGPT than on any other LLM platform. Almost all sources cited in ChatGPT have schema markup.

Priority actions for ChatGPT: Build domain authority, achieve top-10 Google rankings for target keywords, implement complete schema markup.

Perplexity: Prioritize Freshness and Citation Density

Perplexity AI emphasizes content freshness and source credibility more than ChatGPT. It favors content published or updated within the past 2-3 months and prefers content with dense inline citations.

Perplexity is particularly valuable for B2B brands because it shows sources prominently. Users see which sites were cited, driving brand awareness even without a click. That source display creates brand recognition that compounds over time.

Priority actions for Perplexity: Publish consistently, cite sources inline, refresh high-priority articles every 30-60 days.

Google Gemini: Prioritize Structured Data and Depth

Gemini powers Google AI Overviews, which now appear in 48% of all Google queries. Google AI Overviews cite content that already performs in traditional Google search. The platform has the tightest correlation with Google rankings of any LLM.

Gemini favors deeply structured content. Pages covering a topic exhaustively, with clear H2/H3 hierarchies, comparison tables, and schema markup, outperform thin content even at equivalent ranking positions.

Priority actions for Gemini: Rank in Google top 10 for target keywords, implement complete schema, use comparison tables and structured lists. Our guide on how to rank in AI Overviews covers Gemini-specific tactics in detail.

Claude: Prioritize Accuracy and Source Diversity

Claude (Anthropic) prioritizes accuracy and source diversity over volume. It is more likely to cite content that references multiple independent sources, acknowledges nuance or limitations in a claim, and uses precise language over superlatives.

Claude has grown citations 12.8 times year-over-year and is particularly influential in technical and research-adjacent content categories. Author bios with verifiable credentials significantly improve Claude citation probability.

Priority actions for Claude: Cite primary sources (not secondary summaries), add author bios with credentials, acknowledge where data has limitations.

Platform Optimization Priority Matrix

| Platform | AI Referral Share | Top Optimization Signals | Start Optimizing |
|---|---|---|---|
| ChatGPT | 84.2% | Domain authority, schema, Google ranking | Month 1 |
| Perplexity | 8.3% | Freshness, inline citations, source credibility | Month 2 |
| Google Gemini | Via AI Overviews (48% of queries) | Google rank, structured data, depth | Month 2 |
| Claude | Growing 12.8× YoY | Accuracy, source diversity, credentials | Month 3 |


Chapter 6: Distribution Tactics That Build LLM Authority {#ch6}

Content quality alone is not enough. LLMs cite content they have encountered across multiple platforms. Distribution builds the multi-source presence that triggers citation.

Reddit as an LLM Citation Signal

Reddit content appears prominently in LLM training data and RAG retrieval pools. Highly-upvoted Reddit threads signal to LLMs that a subject is discussed authentically by practitioners. When your content is referenced in popular threads, citation probability increases.

The strategy is not self-promotion. Contribute genuine answers in relevant subreddits (r/SEO, r/marketing, r/entrepreneur, r/startups). When you publish new research or guides, share them in communities where they answer a real question. Backlinko calls this approach LLM seeding: placing your content in high-signal distribution channels before AI models index those channels.

LinkedIn and B2B Signals

LLMs increasingly distinguish B2B and B2C content. For B2B brands, LinkedIn visibility matters. Content cited in LinkedIn articles, newsletters, and posts earns social proof signals that feed into LLM authority assessments.

Publishing original research on LinkedIn, even short data summaries with a link to the full post, drives the kind of professional-context citations that LLMs weight for B2B queries. A 300-word LinkedIn post summarizing your study can generate more LLM authority than the study itself if the post earns substantial engagement.

Third-Party Profiles and Directory Citations

G2, Capterra, Trustpilot, Crunchbase, and Wikipedia act as high-authority nodes in LLM training data. Brands with complete, accurate profiles on these platforms receive baseline citation boosts because LLMs treat these sources as authoritative reference materials.

Claim and optimize every relevant third-party profile. Ensure consistent name, URL, and description across all profiles. Inconsistent entity information across directories confuses LLMs and reduces citation accuracy.

Internal Linking as Topical Authority Signals

Internal link networks signal topical authority to both Google and LLMs. A site with 15 interlinking articles on LLM optimization tells the model this site is an authority cluster on the topic.

Build topical authority through systematic internal linking. Every new article should link to 3-5 related articles within its topic cluster and receive links from at least 3 existing articles after publication. The blog GEO checklist includes a link audit template for this process.
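The linking targets above (3-5 outbound links per article, at least 3 inbound) can be checked against a crawl export. This is a minimal sketch, assuming you already have a mapping from each article to the articles it links to; the `link_audit` function and the single-letter page names are hypothetical.

```python
def link_audit(
    links: dict[str, set[str]], min_out: int = 3, max_out: int = 5, min_in: int = 3
) -> dict[str, list[str]]:
    """Flag pages that miss the outbound (min_out..max_out) or inbound (min_in+) targets.

    `links` maps each page in the cluster to the set of pages it links to.
    """
    # Count inbound links for pages inside the cluster, ignoring self-links.
    inbound = {page: 0 for page in links}
    for source, targets in links.items():
        for target in targets:
            if target in inbound and target != source:
                inbound[target] += 1

    report = {}
    for page, targets in links.items():
        problems = []
        out_count = len(targets - {page})
        if not min_out <= out_count <= max_out:
            problems.append(f"{out_count} outbound links (target {min_out}-{max_out})")
        if inbound[page] < min_in:
            problems.append(f"{inbound[page]} inbound links (target {min_in}+)")
        if problems:
            report[page] = problems
    return report


# Hypothetical cluster: four interlinked articles plus one new, unlinked article.
cluster = {
    "a": {"b", "c", "d"},
    "b": {"a", "c", "d"},
    "c": {"a", "b", "d"},
    "d": {"a", "b", "c"},
    "e": {"a", "b", "c"},
}
print(link_audit(cluster))
```

In the sample cluster, only the new article "e" is flagged: it links out to three articles but nothing links back to it yet.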

Chapter 7: Measure LLM Optimization Results {#ch7}

LLM citations often do not appear in Google Analytics. Many occur as dark traffic: users who read an AI-synthesized answer and visit your site without a visible referrer. This makes measurement harder, but not impossible.

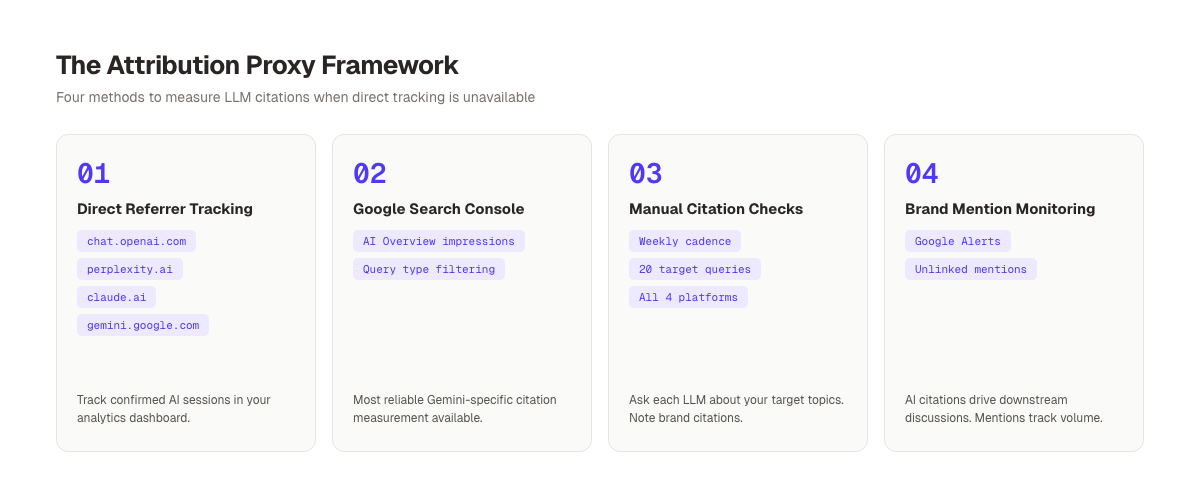

The Attribution Proxy Framework

Direct citation tracking is not available via any public API. But proxies reveal citation trends with reasonable accuracy.

Method 1. Direct referral tracking: Set up referral monitoring for these domains in your analytics:

- chat.openai.com and chatgpt.com

- perplexity.ai

- claude.ai

- gemini.google.com

- copilot.microsoft.com

Any session with these referrers is confirmed AI traffic. Track volume, pages landed on, conversion rate, and session depth.
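If your analytics tool exports raw referrer URLs, the Method 1 domains can be matched with a small lookup. This is a sketch under that assumption; the `ai_platform` function and the platform labels are hypothetical, and the domain list is only the one given above, so extend it as platforms change their hostnames.

```python
from typing import Optional
from urllib.parse import urlparse

# Referrer hostnames from Method 1, mapped to a platform label.
AI_REFERRERS = {
    "chat.openai.com": "ChatGPT",
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_platform(referrer: str) -> Optional[str]:
    """Return the AI platform for a referrer URL, or None if it is not a known AI referrer."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRERS.get(host)


print(ai_platform("https://www.perplexity.ai/search?q=llm+seo"))
```

Sessions with no referrer at all return `None` here, which is exactly the dark-traffic gap the other three methods are meant to cover.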

Method 2. Google Search Console AI Overviews: Google Search Console shows impressions from AI Overview-triggered queries. Filter by query type to isolate AI Overview appearances. This is the most reliable measurement for Gemini-sourced citations.

Method 3. Manual citation checking: Search your target queries in ChatGPT, Perplexity, Gemini, and Claude directly. Ask the model to explain your primary topic and note whether your content is cited. Run these checks weekly for the 20-30 highest-value queries. The track AI search visibility guide covers the full monitoring workflow in detail.

Method 4. Brand mention monitoring: Set Google Alerts for your brand name, key products, and unique terminology. AI-cited content generates downstream discussions that mention your brand. An increase in unlinked brand mentions correlates with increasing LLM citation volume.

Tools for LLM Citation Monitoring

Three platforms provide direct LLM visibility tracking:

- Semrush AI Toolkit. Tracks brand mentions across major LLM platforms

- Profound. B2B-focused LLM citation analytics

- Peec AI. Multi-platform citation monitoring with trend analysis

These tools are newer and still evolving. Manual tracking provides signal faster while the tool category matures.

Reporting LLM Performance to Stakeholders

LLM optimization is a 6-12 month compounding strategy. First citations typically appear 3-6 months after initial optimization. Monthly volume becomes meaningful at the 9-12 month mark.

Report on 3 metrics in monthly stakeholder reviews:

- Confirmed AI referral sessions (from analytics referrer data)

- AI Overview impressions (from Google Search Console)

- Manual citation score: the number of your 20 target queries in which the brand is cited

Progress on all 3 indicates the strategy is working. Stagnation on all 3 signals a structural issue: technical accessibility, content quality, or authority signals need attention.
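The manual citation score is simple enough to tally from a spreadsheet of weekly checks. The sketch below assumes you record, for each target query, which platforms cited your brand; the `citation_scores` function and the sample queries are hypothetical.

```python
PLATFORMS = ["ChatGPT", "Perplexity", "Gemini", "Claude"]


def citation_scores(checks: dict[str, set[str]]) -> dict[str, str]:
    """Return a 'cited/total' score per platform.

    `checks` maps each target query to the set of platforms that cited the brand.
    """
    total = len(checks)
    return {
        platform: f"{sum(platform in cited for cited in checks.values())}/{total}"
        for platform in PLATFORMS
    }


# Hypothetical results for three target queries.
weekly_checks = {
    "what is llm optimization": {"ChatGPT", "Claude"},
    "how to get cited by chatgpt": {"ChatGPT"},
    "llm seo checklist": set(),
}
print(citation_scores(weekly_checks))
```

Keeping the score per platform, instead of one combined number, shows which platform is lagging and therefore which chapter of this guide to revisit.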


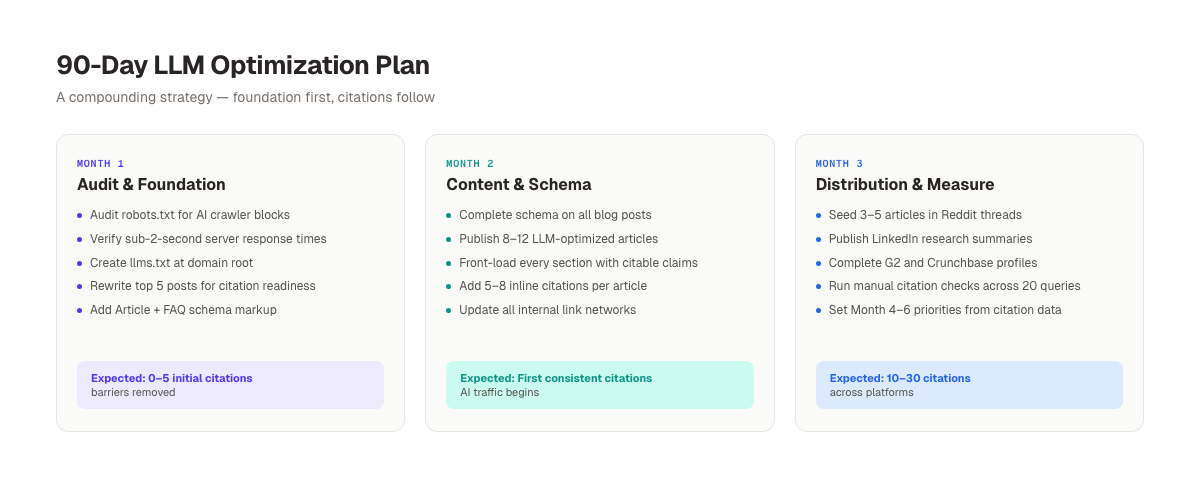

Chapter 8: The 90-Day LLM Optimization Action Plan {#ch8}

LLM optimization produces compounding results. Early months build infrastructure. Later months generate citations. Here is the exact sequence.

Month 1: Audit and Foundation

Week 1-2. Technical audit:

- Check robots.txt for accidental AI crawler blocks

- Verify server response times under 2 seconds

- Audit JavaScript rendering. Switch to SSR or pre-rendered output for key pages

- Create llms.txt at domain root with your site map for AI models

Week 3-4. Content audit: Run your 20 highest-traffic posts through a citation readiness checklist:

- Does the opening paragraph contain a citable claim within the first 100 words?

- Does each H2 section contain a specific stat with source attribution?

- Is the paragraph length 3-5 sentences throughout?

- Is schema markup complete (Article + FAQ where applicable)?

Prioritize the 5 posts with the highest update potential and rewrite them to meet citation standards.

Expected Month 1 outcomes: 0-5 initial citations on newly optimized content, technical barriers removed.

Month 2: Content Optimization and Schema

Week 5-6. Schema implementation:

- Add Article schema to all blog posts (author, publisher, dates)

- Add FAQPage schema to all posts with FAQ sections

- Add HowTo schema to step-by-step guides

- Generate and validate schemas with a Schema Markup Generator

Week 7-8. New content production:

- Publish 8-12 new articles targeting high-intent LLM queries

- Structure each with inverted pyramid passages in every section

- Include 5-8 inline citations per article

- Update all internal link networks to point to new articles

Expected Month 2 outcomes: First consistent citations on optimized content, measurable AI referral traffic beginning.

Month 3: Distribution and Measurement

Week 9-10. Distribution push:

- Seed 3-5 articles in relevant Reddit threads (genuine contribution, not self-promotion)

- Publish LinkedIn summaries of original research articles

- Complete G2, Capterra, and Crunchbase profiles

- Verify brand entity consistency across all third-party directories

Week 11-12. Measure and iterate:

- Run the full manual citation check across 20 target queries in all 4 LLM platforms

- Analyze which content types earned the most citations (compare blog, comparison, FAQ formats)

- Identify the top 5 uncited high-quality articles and audit for structural issues

- Set Month 4-6 priorities based on citation data

Expected Month 3 outcomes: 10-30 citations across platforms, clear attribution pattern visible, strategy iteration data in hand.

FAQ

What is the difference between LLM optimization and GEO?

LLM optimization refers specifically to optimizing for large language model search products such as ChatGPT, Perplexity, Gemini, and Claude. GEO (Generative Engine Optimization) is the broader umbrella term that includes LLM optimization plus all AI-generated answer surfaces. In practice the tactics largely overlap, but GEO is the more inclusive framing.

Do I need to choose between traditional SEO and LLM optimization?

No. The 2 strategies reinforce each other. 76% of AI-cited URLs also rank in Google’s top 10, which means traditional SEO creates the foundation for LLM citations. A recommended starting split is approximately 55% effort on traditional SEO fundamentals and 45% on LLM-specific signals, adjusted by your traffic stage and business goals.

How long does it take to see results from LLM optimization?

First citations typically appear 3-6 months after optimizing content for LLM extraction. Consistent citation volume, enough to drive meaningful AI referral traffic, typically develops at the 9-12 month mark. The strategy compounds: each cited article increases domain authority, which increases the likelihood that future articles get cited faster.

Which LLM platform should I prioritize first?

Start with ChatGPT. It drives 84.2% of AI referral traffic and has the strongest correlation with traditional Google SEO signals. Optimizing for Google top-10 rankings and implementing complete schema markup addresses the highest-volume platform. Add Perplexity and Gemini optimizations in Month 2-3 once the foundation is set.

Why is my top-ranking Google page not getting cited by ChatGPT?

Page rankings and LLM citations optimize for different things. Google ranks the page as a whole. ChatGPT extracts specific 100-300 token passages. Your page may rank for its title, backlinks, and keyword density without containing clearly extractable passages. Restructure the page using the inverted pyramid method: front-load specific claims, add inline citations, and ensure each section is self-contained.

How do I measure LLM citations without paid tools?

Use 4 proxy methods: track direct AI referrals in your analytics (chat.openai.com, perplexity.ai, claude.ai, gemini.google.com), monitor AI Overview impressions in Google Search Console, run weekly manual citation checks across 20 target queries in each LLM platform, and track brand mentions via Google Alerts. The combination gives reliable signal without requiring specialized tools.

Conclusion

LLM optimization for SEO is not a separate strategy. It extends the content quality and authority signals that drive traditional search performance. The adaptation targets how large language models extract, evaluate, and cite information.

Start with the technical foundation. Ensure AI crawlers can access your content. Then optimize your existing highest-value pages for passage-level citation readiness. Build distribution across Reddit, LinkedIn, and third-party profiles. Measure with the attribution proxy framework.

The brands investing in LLM optimization now face less competition than early adopters in Google SEO faced years ago. The window for first-mover advantage is open.

Written by

Siddharth Gangal

Siddharth is the founder of theStacc and Arka360, and a graduate of IIT Mandi. He spent years watching great businesses lose organic traffic to competitors who simply published more. So he built a system to fix that. He writes about SEO, content at scale, and the tactics that actually move rankings.
